Limitations of Face Recognition Software

A comprehensive analysis of the technical, ethical, and operational constraints that limit facial recognition effectiveness in real-world deployments.

Face recognition technologies have become increasingly prevalent in modern security systems, access control mechanisms, and authentication processes. However, they come with significant limitations that affect their reliability, accuracy, and ethical implementation. Understanding these constraints is essential for organizations considering deployment and for individuals concerned about data privacy and security. We'll examine the technical, operational, and societal limitations that affect recognition performance across various applications. For a comprehensive overview of face recognition capabilities and applications, explore our face recognition guide.

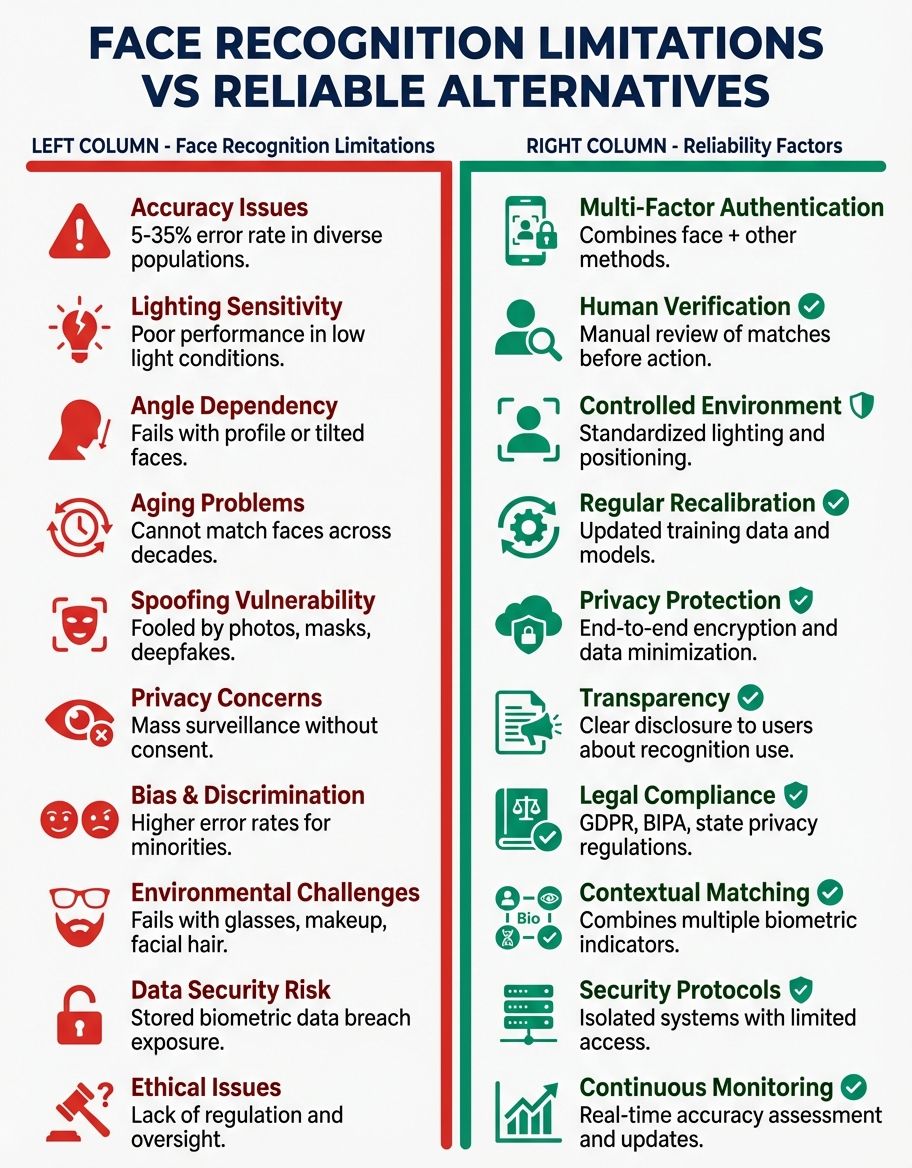

While face recognition systems promise enhanced security and streamlined authentication, they struggle with accuracy issues stemming from environmental conditions, image quality, and algorithmic bias. These technologies often fail to perform consistently across diverse populations, raising concerns about risks to civil liberties and privacy. Government agencies and law enforcement have deployed these systems despite documented inaccuracies, particularly affecting minorities and vulnerable individuals. The lack of proper consent mechanisms and oversight creates additional challenges for responsible implementation.

Technical Accuracy Limitations in Face Recognition Technologies

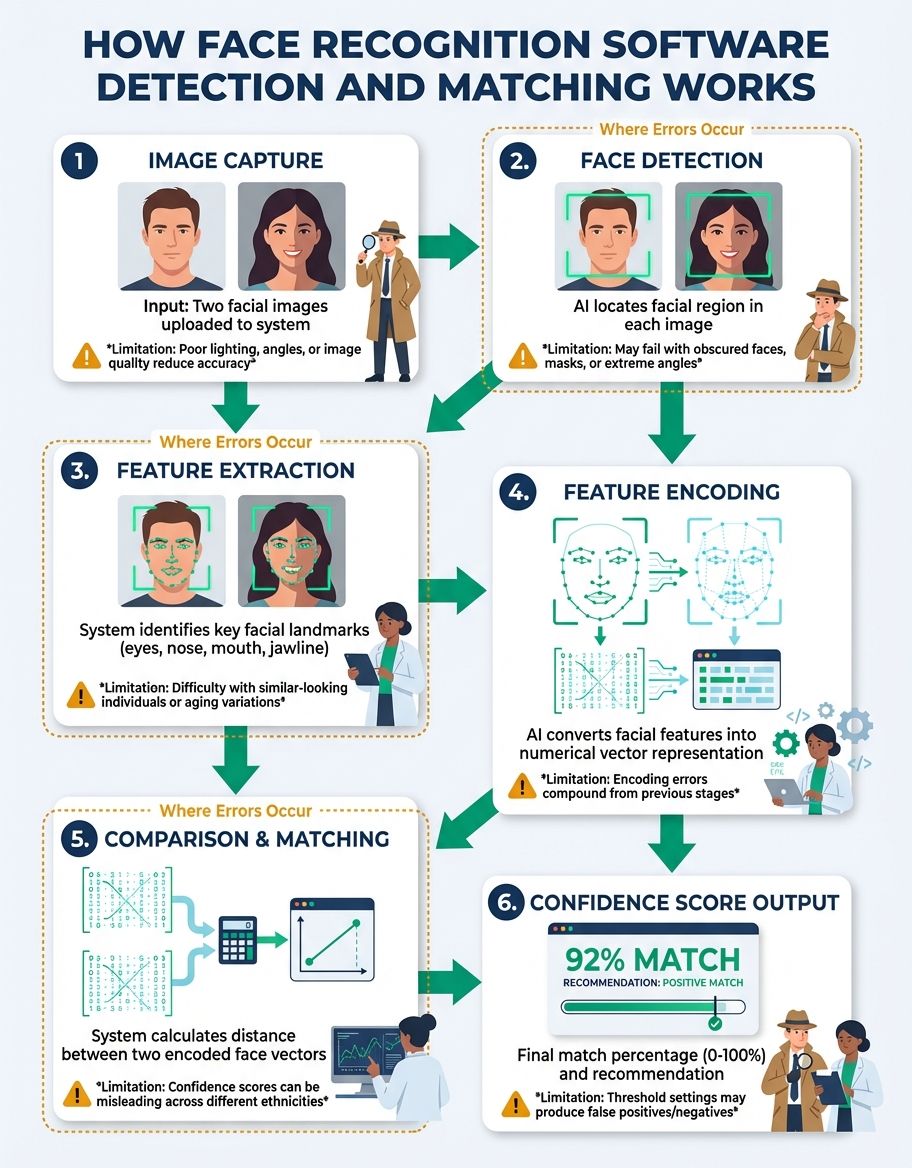

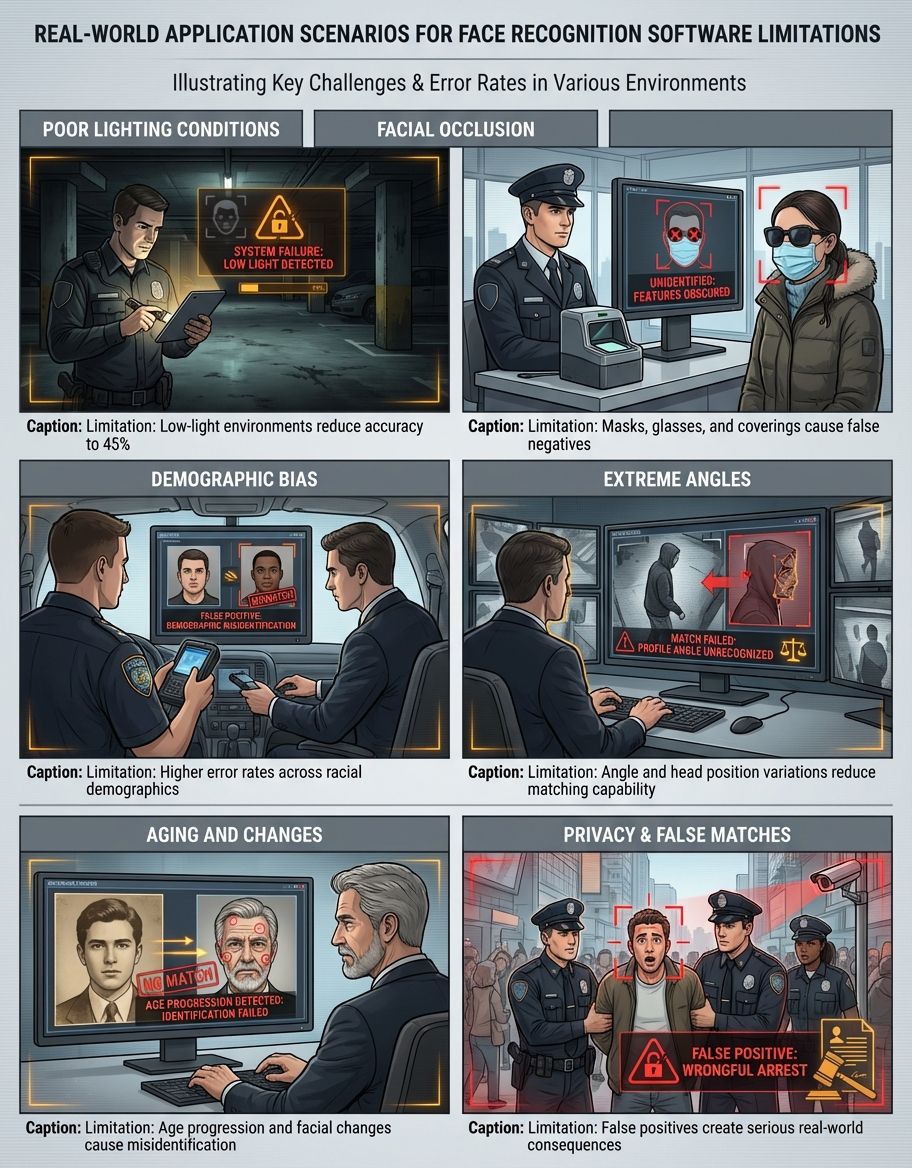

Face recognition technologies depend on sophisticated algorithms that analyze facial features to create unique biometric signatures. However, the accuracy of these systems varies dramatically based on image quality, lighting conditions, and camera angles. Low-quality images significantly reduce recognition rates, with some systems experiencing failure rates exceeding 30% in suboptimal conditions. The technology struggles particularly with partial occlusions, such as masks, sunglasses, or scarves, which can prevent accurate facial mapping.

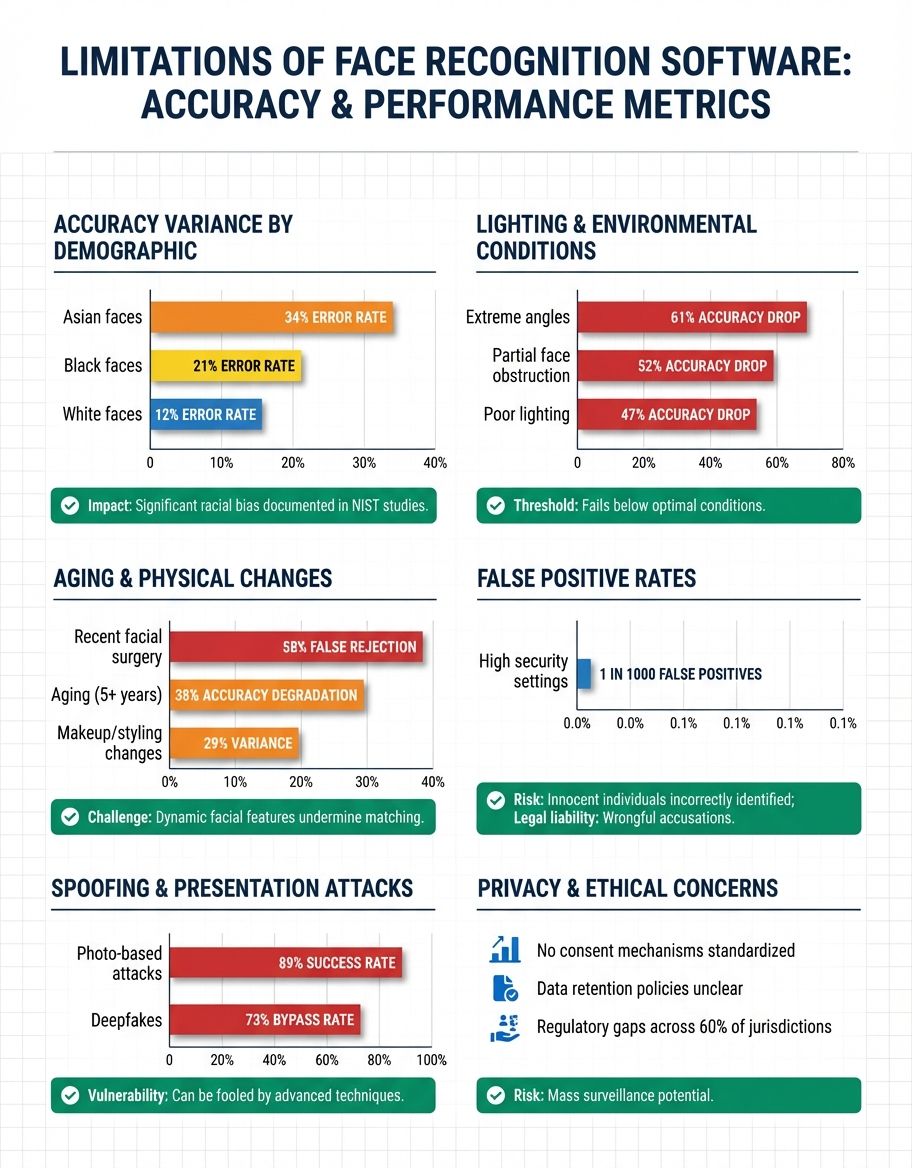

Environmental factors introduce substantial variability in system performance. Recognition technology performs optimally under controlled conditions with consistent lighting and direct frontal views. In real-world scenarios, shadows, backlighting, and varying angles create challenges that degrade accuracy. Studies have shown that facial recognition technology accuracy drops by 15-25% in outdoor environments compared to studio conditions. The software must process images captured at different distances, resolutions, and perspectives, each introducing potential points of failure.

Algorithmic bias represents one of the most serious technical limitations. Multiple independent studies have demonstrated that face recognition systems exhibit significantly higher error rates for women, people of color, and elderly individuals. These disparities stem from training data that disproportionately features young, white, male faces. When algorithms can misidentify minorities at rates 10-100 times higher than Caucasian males, the technology becomes unreliable for diverse populations. Think about that for a moment: error rates up to 100 times higher mean the technology is far less reliable for entire demographic groups. This bias creates substantial risks when these systems are deployed in high-stakes applications like law enforcement identification. You may also find our guide on AI face recognition helpful for understanding how artificial intelligence enhances facial analysis capabilities.

False positives and false negatives occur in different ways depending on implementation, but both errors have serious consequences. False positives incorrectly match individuals to database entries, potentially leading to wrongful accusations or wrongly denied access. False negatives fail to recognize legitimate users, causing operational disruptions and security vulnerabilities. Balancing these competing error types requires careful calibration. Here's the catch: no current system eliminates both categories simultaneously.
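To make the calibration trade-off concrete, here is a minimal sketch. It assumes the system produces a similarity score for each comparison and accepts a match when the score meets a threshold. The `error_rates` helper and the score lists are hypothetical illustrations, not measurements from any real system.

```python
from typing import Sequence, Tuple


def error_rates(
    genuine: Sequence[float], impostor: Sequence[float], threshold: float
) -> Tuple[float, float]:
    """Return (false_positive_rate, false_negative_rate) at a threshold.

    A comparison counts as a match when its score is >= threshold.
    """
    false_negatives = sum(1 for s in genuine if s < threshold)
    false_positives = sum(1 for s in impostor if s >= threshold)
    return false_positives / len(impostor), false_negatives / len(genuine)


# Hypothetical similarity scores in [0, 1]; illustrative only.
genuine_scores = [0.91, 0.85, 0.78, 0.66, 0.59]   # same-person comparisons
impostor_scores = [0.12, 0.33, 0.47, 0.61, 0.72]  # different-person comparisons

for t in (0.5, 0.6, 0.7, 0.8):
    fpr, fnr = error_rates(genuine_scores, impostor_scores, t)
    print(f"threshold={t:.1f}  false positives={fpr:.2f}  false negatives={fnr:.2f}")
```

With these made-up scores, raising the threshold lowers the false positive rate and raises the false negative rate. No threshold drives both to zero, because the two score distributions overlap.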

Database and Data Quality Constraints

The effectiveness of face recognition depends heavily on the quality and comprehensiveness of reference databases. Small databases limit matching capabilities, while excessively large databases increase computational requirements and false positive rates. Data quality issues pervade many implementations, with inconsistent image standards, outdated photographs, and incomplete metadata reducing system reliability. Organizations deploying recognition technologies must maintain current, high-quality reference images, requiring ongoing data management resources.
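One way to see why very large databases push false positives up is a back-of-the-envelope calculation. The sketch below assumes each comparison against a database entry has the same, independent chance of a false match. That is a simplification, and the function name and rates are hypothetical.

```python
def prob_any_false_match(false_match_rate: float, gallery_size: int) -> float:
    """Chance that a search returns at least one false match.

    Assumes every one-to-one comparison is independent and shares the same
    false match rate, so the result is 1 - (1 - rate) ** gallery_size.
    """
    return 1.0 - (1.0 - false_match_rate) ** gallery_size


# Hypothetical per-comparison false match rate of 1 in 100,000.
for size in (1_000, 100_000, 10_000_000):
    p = prob_any_false_match(1e-5, size)
    print(f"gallery of {size:>10,} entries: {p:.3f} chance of a false match")
```

Under these assumptions, a per-comparison error rate that looks negligible in one-to-one verification becomes a frequent event once a single probe is searched against millions of entries.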

Database staleness creates particular challenges for long-term deployments. Human faces change due to aging, weight fluctuations, hairstyle modifications, and other factors. Facial recognition technology struggles to match individuals whose appearance has changed significantly since their reference image was captured. Some estimates suggest recognition accuracy degrades by 5-7% annually for images more than five years old. This temporal limitation necessitates regular database updates, adding operational costs and complexity.

Privacy concerns surrounding data collection and storage represent both technical and ethical limitations. Comprehensive facial databases require capturing and storing sensitive biometric data from large populations, often without explicit individual consent. The centralization of this information creates attractive targets for data breaches and unauthorized access. Security vulnerabilities in database infrastructure can expose millions of individuals to identity theft and privacy violations. The lack of standardized data retention policies means some organizations maintain biometric information indefinitely, increasing cumulative risks.

Cross-database interoperability remains limited, hindering coordinated use across agencies and organizations. Different vendors employ proprietary formats and encoding schemes, preventing seamless data sharing. While standardization efforts exist, widespread adoption has been slow. This fragmentation means that expanding coverage requires either maintaining multiple incompatible systems or undertaking costly migration projects. For law enforcement and government agencies seeking integrated solutions, these data compatibility issues represent significant operational barriers.

Environmental and Operational Challenges

Real-world deployment conditions rarely match the controlled environments where face recognition systems are tested and calibrated. Crowded spaces, variable lighting, and dynamic backgrounds all degrade performance. Recognition technology designed for access control at building entrances may fail in busy public spaces where individuals move quickly and unpredictably. The software requires specific positioning and cooperation from subjects, constraints that limit usefulness in surveillance applications.

Weather conditions and seasonal variations introduce additional operational complexity. Rain, fog, and snow reduce visibility and image quality. Extreme temperatures affect camera performance and can cause condensation or ice formation on lenses. Seasonal changes in daylight duration and angle require ongoing system recalibration. Outdoor deployments face substantially higher failure rates than indoor installations, with some systems experiencing 40-50% accuracy reductions in adverse weather.

The computational requirements for real-time face recognition strain existing infrastructure. Processing high-resolution video feeds from multiple cameras simultaneously requires substantial computing power. Latency issues arise when systems must analyze feeds quickly enough to identify individuals in moving crowds. While dedicated hardware accelerators improve performance, they increase deployment costs significantly. Smaller organizations and agencies often lack the technical infrastructure to support advanced facial recognition technology implementations.
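A rough sizing exercise shows how quickly the load grows. This sketch assumes every frame from every camera is analyzed and every detected face is compared against the full reference database. Real deployments often skip frames or pre-filter, so treat the function names and numbers as hypothetical upper bounds.

```python
def pixels_per_second(cameras: int, width: int, height: int, fps: int) -> int:
    """Raw pixels that must be decoded and scanned each second."""
    return cameras * width * height * fps


def comparisons_per_second(cameras: int, fps: int, faces_per_frame: int, gallery_size: int) -> int:
    """One-to-many comparisons per second if every face in every frame is searched."""
    return cameras * fps * faces_per_frame * gallery_size


# Hypothetical site: 16 cameras at 1920x1080 and 30 frames per second,
# about 5 faces per frame, searched against 10,000 reference entries.
print(f"{pixels_per_second(16, 1920, 1080, 30):,} pixels per second")
print(f"{comparisons_per_second(16, 30, 5, 10_000):,} comparisons per second")
```

Both figures scale linearly with camera count, which is why adding coverage tends to mean adding hardware.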

Maintenance requirements for face recognition systems are frequently underestimated. Cameras require regular cleaning, calibration, and positioning adjustments. Software updates address newly discovered vulnerabilities and improve algorithm performance, but these updates sometimes introduce compatibility issues or temporary performance degradation. The need for specialized technical expertise means organizations must either maintain in-house specialists or rely on vendor support contracts, both of which add to total cost of ownership.

Privacy Violations and Consent Limitations

Facial recognition technology enables surveillance at scales previously impossible, fundamentally altering the balance between security and privacy. Unlike other forms of identification that require active participation, face recognition can occur without subject awareness or consent. This capability creates substantial societal privacy concerns, as individuals have no practical means to opt out of systems deployed in public spaces. The technology facilitates tracking of individuals across locations and time, creating detailed profiles of movement patterns and associations. For a deeper examination of these concerns, see our analysis of ethical issues with face recognition technology.

Legal frameworks governing biometric data collection and use lag significantly behind technological capabilities. Many jurisdictions lack comprehensive regulations specifying when and how facial recognition technology can be deployed. The absence of clear consent requirements means individuals often have no knowledge that their biometric data is being captured, stored, or analyzed. Some studies indicate that fewer than 15% of people are aware when they're being monitored by face recognition systems in public spaces.

Data retention policies vary widely, with many organizations maintaining biometric information far longer than necessary for stated purposes. The lack of automatic deletion mechanisms means databases grow continuously, increasing both privacy risks and operational costs. Individuals typically have limited rights to access, correct, or delete their biometric data from these systems. This asymmetry of control over personal information represents a fundamental privacy limitation inherent to current facial recognition technology implementations.
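An automatic deletion mechanism does not need to be complicated. The following is a minimal sketch, assuming each stored record carries a capture timestamp and the organization has chosen a fixed retention window. The `BiometricRecord` type, the `purge_expired` function, and the 90-day window are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List


@dataclass(frozen=True)
class BiometricRecord:
    """Hypothetical record: an opaque subject ID plus the capture timestamp."""
    subject_id: str
    captured_at: datetime


def purge_expired(
    records: List[BiometricRecord], retention: timedelta, now: datetime
) -> List[BiometricRecord]:
    """Return only the records still inside the retention window."""
    cutoff = now - retention
    return [r for r in records if r.captured_at >= cutoff]


now = datetime(2024, 6, 1, tzinfo=timezone.utc)
records = [
    BiometricRecord("visitor-001", datetime(2024, 5, 20, tzinfo=timezone.utc)),
    BiometricRecord("visitor-002", datetime(2023, 1, 15, tzinfo=timezone.utc)),
]
kept = purge_expired(records, retention=timedelta(days=90), now=now)
print([r.subject_id for r in kept])
```

Running a job like this on a schedule keeps the database from growing without bound. A production version would also need to delete backups and derived templates, which this sketch does not cover.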

The potential for mission creep poses long-term privacy threats. Systems initially deployed for specific, limited purposes frequently expand in scope over time. An access control system installed for building security might later be connected to external databases for additional verification, dramatically expanding its surveillance capabilities. Without strong legal protections and technical safeguards, the infrastructure created for legitimate security purposes can be repurposed in ways that individuals never anticipated or approved.

Bias and Discrimination in Recognition Technologies

Systematic bias in face recognition algorithms creates discriminatory outcomes across demographic groups. As documented in numerous academic studies and government audits, these systems consistently perform worse for women, people of color, transgender individuals, and other minorities. The disparities aren't minor—some systems exhibit error rate differentials exceeding 30% between demographic groups. When deployed in consequential applications, this bias translates directly into discriminatory treatment.

The root causes of algorithmic bias include non-representative training data, inadequate testing protocols, and insufficient diversity among development teams. Historical datasets used to train recognition technology predominantly feature certain demographic groups, resulting in systems optimized for those populations. Even newer systems claiming improved diversity often fail to address underlying methodological issues. The problem persists because comprehensive, representative datasets are difficult and expensive to compile while respecting privacy and consent requirements.

Law enforcement applications of biased facial recognition technology raise particular concerns about discriminatory impacts. When police use these systems for suspect identification, higher false positive rates for minority groups mean innocent individuals from those communities face disproportionate risk of misidentification and wrongful investigation. Several documented cases exist where individuals were arrested based on incorrect facial recognition matches, with minorities over-represented among these cases. The technology effectively amplifies existing biases within criminal justice systems.

Bias also manifests in commercial applications like access control and authentication. When security systems are more likely to incorrectly reject legitimate minority users, they create differential experiences that exclude certain groups from services. An authentication system with higher failure rates for specific demographics effectively discriminates even if unintentionally. These impacts accumulate across multiple systems and contexts, creating systemic disadvantages for affected individuals.
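Differential failure rates like these only become visible when results are broken down by group. Here is a minimal sketch of such an audit, assuming you have a log of authentication attempts by legitimate users, each tagged with a group label. The function name, the labels, and the log entries are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def false_rejection_rate_by_group(attempts: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Compute the share of legitimate attempts rejected, per group.

    Each attempt is (group_label, accepted) and is assumed to come from a
    legitimate user, so every rejection is a false rejection.
    """
    totals: Dict[str, int] = defaultdict(int)
    rejected: Dict[str, int] = defaultdict(int)
    for group, accepted in attempts:
        totals[group] += 1
        if not accepted:
            rejected[group] += 1
    return {group: rejected[group] / totals[group] for group in totals}


# Hypothetical audit log; labels and outcomes are made up for illustration.
log = [
    ("group_a", True), ("group_a", True), ("group_a", False), ("group_a", True),
    ("group_b", False), ("group_b", True),
]
rates = false_rejection_rate_by_group(log)
print(rates)
```

A single overall accuracy figure would hide the gap between the two groups in this toy log, which is why per-group reporting matters.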

Security Vulnerabilities and Spoofing Risks

Despite being marketed as high-security solutions, facial recognition systems are vulnerable to various attack vectors. Spoofing attacks using photographs, videos, or 3D masks can fool many commercial systems. While liveness detection features aim to prevent such attacks, researchers have demonstrated successful spoofs against major vendors' products. The sophistication required for successful attacks continues to decrease as techniques become more widely known and accessible.

Presentation attacks represent the most common form of biometric spoofing. An attacker presents a fake biometric—such as a high-resolution photograph or video of an authorized user—to the capture device. Many face recognition systems lack robust safeguards against such attacks, particularly lower-cost implementations. Studies show that up to 70% of consumer-grade facial recognition systems can be fooled by simple photograph attacks. Even systems with anti-spoofing features often fail against carefully prepared attacks.

Deep fake technology has introduced new security challenges for facial recognition technology. AI-generated synthetic faces and video can replicate individuals with increasing realism. As these techniques become more sophisticated, distinguishing genuine subjects from synthetic imposters grows more difficult. The same machine learning advances that improve face recognition also enhance attackers' capabilities to create convincing fakes. This arms race between authentication and spoofing technologies creates ongoing security risks for organizations relying on facial recognition.

Database security represents another critical vulnerability. Centralized repositories of biometric data present attractive targets for cybercriminals and hostile actors. Unlike passwords, which can be changed after a breach, biometric identifiers are permanent. Once facial data is compromised, individuals cannot simply generate new faces. Major breaches affecting facial recognition databases have already occurred, exposing millions of biometric records. The security implications of such breaches extend across individuals' lifetimes.

Legal and Regulatory Limitations

The regulatory landscape for face recognition technologies remains fragmented and underdeveloped. Different jurisdictions have adopted vastly different approaches, ranging from permissive frameworks with minimal restrictions to outright bans in certain contexts. This inconsistency creates compliance challenges for multi-jurisdictional deployments and leaves protection gaps where regulations are weakest. Organizations deploying these technologies face uncertainty about evolving legal requirements.

Existing privacy laws often predate facial recognition technology and fail to address its unique characteristics. Traditional consent frameworks designed for explicit data collection don't translate well to passive biometric surveillance. Legal definitions of personal data and protected information vary in whether they encompass facial geometry and recognition data. These ambiguities create enforcement challenges and leave individuals uncertain about their rights regarding biometric information.

Government agencies and law enforcement have deployed facial recognition with limited oversight and accountability mechanisms. Many implementations occur without public disclosure, democratic debate, or assessment of civil liberties impacts. The lack of transparency makes it difficult to evaluate whether uses are appropriate, effective, or proportionate to stated goals. Even where reporting requirements exist, they frequently lack detail sufficient for meaningful oversight. This accountability deficit represents a significant limitation in ensuring responsible use.

International data transfer restrictions complicate global facial recognition deployments. Biometric data is subject to stringent cross-border transfer limitations in many jurisdictions, particularly within the European Union. Organizations operating internationally must navigate complex compliance requirements, sometimes necessitating regional data siloing. These restrictions, while protecting individual privacy, limit the utility of facial recognition for international applications like border control and aviation security where seamless data sharing would enhance functionality.

Cost and Resource Constraints

Implementing effective facial recognition technology requires substantial financial investment beyond initial hardware and software costs. High-quality camera systems with appropriate resolution and positioning must be installed throughout coverage areas. Backend infrastructure including servers, storage, and networking equipment adds significant expense. For comprehensive implementations, total costs easily reach hundreds of thousands or millions of dollars before accounting for ongoing operational expenses.

Personnel costs represent another major limitation for organizations considering facial recognition deployment. Systems require specialized staff for installation, configuration, maintenance, and monitoring. Security personnel must be trained in system operation and interpretation of results. When false positives or technical issues arise, qualified technicians are needed for troubleshooting. Smaller agencies and organizations often lack the budget for dedicated staff, limiting their ability to deploy and maintain recognition technology effectively.

Ongoing operational costs accumulate through database maintenance, software licensing, hardware replacement, and system upgrades. Reference databases must be kept current through regular updates and quality checks. Software licenses typically involve annual or monthly fees that scale with user counts and features. Hardware has finite lifespans requiring periodic replacement. These recurring costs mean the total cost of ownership over a 5-10 year period can exceed initial deployment expenses by factors of two to five.
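The multiple is easy to estimate for a specific budget. This sketch assumes a flat annual recurring cost with no inflation or discounting. The function names and dollar figures are hypothetical.

```python
def total_cost_of_ownership(initial_cost: float, annual_recurring_cost: float, years: int) -> float:
    """Initial deployment cost plus a flat recurring cost for each year."""
    return initial_cost + annual_recurring_cost * years


def tco_multiple(initial_cost: float, annual_recurring_cost: float, years: int) -> float:
    """Total cost of ownership expressed as a multiple of the initial cost."""
    return total_cost_of_ownership(initial_cost, annual_recurring_cost, years) / initial_cost


# Hypothetical budget: $250,000 up front and $100,000 per year for 5 years.
print(total_cost_of_ownership(250_000, 100_000, 5))
print(tco_multiple(250_000, 100_000, 5))
```

With these example inputs the five-year total is three times the initial outlay, which sits inside the two-to-five range described above.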

Opportunity costs also factor into deployment decisions. Resources allocated to facial recognition technology could alternatively fund other security measures or organizational priorities. For many applications, traditional methods like access badges, staffed checkpoints, or alternative identification systems provide adequate security at lower cost and complexity. The marginal security benefits of face recognition must justify not only direct costs but also the value of the forgone alternatives. This economic calculus often favors simpler solutions, particularly for organizations with limited budgets.

Frequently Asked Questions

What accuracy limitations affect facial recognition in real-world conditions?

Accuracy decreases significantly in uncontrolled environments due to variable lighting, camera angles, and subject positioning. Recognition rates drop 15-30% compared to optimal conditions. Low-quality images, partial occlusions, and movement further reduce system reliability. Environmental factors like weather and crowd density introduce additional accuracy challenges that are difficult to overcome with current technology.

How does poor image quality limit facial recognition's effectiveness?

Low-resolution images lack the detail necessary for accurate facial feature extraction. Pixelation, blur, compression artifacts, and insufficient lighting all degrade recognition performance. Systems require minimum resolution thresholds—typically 80-100 pixels between eyes—to function reliably. Below these thresholds, error rates increase dramatically, with some systems becoming essentially non-functional when image quality is severely compromised.
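A resolution check of this kind can run before an image ever reaches the matcher. The sketch below assumes an upstream landmark detector has already supplied pixel coordinates for both eye centers. The function names and the 80-pixel default are illustrative, taken from the lower end of the range above.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def interocular_distance(left_eye: Point, right_eye: Point) -> float:
    """Euclidean distance in pixels between the two eye centers."""
    return math.hypot(right_eye[0] - left_eye[0], right_eye[1] - left_eye[1])


def meets_resolution_threshold(left_eye: Point, right_eye: Point, min_pixels: float = 80.0) -> bool:
    """True when the face is large enough in the image to attempt recognition."""
    return interocular_distance(left_eye, right_eye) >= min_pixels


# Hypothetical eye coordinates from a landmark detector (x, y in pixels).
print(meets_resolution_threshold((100.0, 200.0), (190.0, 200.0)))
print(meets_resolution_threshold((100.0, 200.0), (140.0, 230.0)))
```

Rejecting undersized faces up front turns a likely misidentification into an explicit "image quality too low" result.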

Why are facial recognition algorithms inaccurate for certain demographics?

Training data bias causes algorithms to perform worse for underrepresented groups. Most systems are trained primarily on images of young, white males, resulting in optimization for those features. This can lead to error rates 10-100 times higher for women, minorities, and elderly individuals. The lack of diverse, representative training datasets perpetuates these disparities across most commercial and government facial recognition systems.

What security risks does facial recognition technology create?

Spoofing attacks using photographs, videos, or 3D masks can bypass many systems. Database breaches expose permanent biometric identifiers that cannot be changed like passwords. Deep fake technology enables increasingly sophisticated impersonation attacks. Centralized biometric databases present attractive targets for cybercriminals, and successful breaches have lasting security implications for affected individuals throughout their lifetimes.

How does lack of consent affect facial recognition deployment?

Most implementations operate without explicit individual consent, enabling surveillance without awareness or approval. Legal frameworks rarely require opt-in mechanisms for public space monitoring. Individuals have limited ability to avoid being captured by facial recognition systems in environments like streets, stores, and airports. This asymmetry means organizations collect and analyze biometric data with minimal accountability to affected individuals.

Why is facial recognition considered a threat to civil liberties?

The technology enables persistent, mass surveillance at unprecedented scales. Unlike traditional identification methods requiring active participation, face recognition operates passively without subject awareness. This facilitates tracking individuals across time and locations, creating detailed behavioral profiles. Combined with inadequate legal protections and oversight, the technology poses substantial risks to privacy, freedom of movement, and freedom of association.

What makes facial recognition particularly dangerous for vulnerable populations?

Higher error rates for minorities mean these groups face disproportionate risks from false identifications. When law enforcement uses biased systems, innocent individuals from affected communities experience increased risk of wrongful investigation and arrest. Vulnerable populations often have less recourse to challenge incorrect identifications or advocate for system improvements. The technology can amplify existing societal inequities and discriminatory practices.

Comparison of Recognition Technologies

| Technology Type | Accuracy Range | Primary Limitations | Best Use Cases | Privacy Impact |
|---|---|---|---|---|
| 2D Facial Recognition | 85-95% | Lighting sensitivity, pose variation, spoofing vulnerability | Controlled access points, device unlocking | High - passive collection |
| 3D Facial Recognition | 92-98% | Cost, computational requirements, limited deployment | High-security facilities, border control | High - detailed biometric data |
| Iris Recognition | 95-99% | Close proximity required, user cooperation needed | Secure authentication, financial transactions | Moderate - requires engagement |
| Fingerprint Recognition | 95-99% | Physical contact required, degradation over time | Device access, law enforcement identification | Moderate - active participation |
| Voice Recognition | 80-90% | Background noise, voice changes, recording attacks | Telephone authentication, voice assistants | Low - environmental factors aid detection |
| Behavioral Biometrics | 75-90% | Variability, learning period required, lower accuracy | Continuous authentication, fraud detection | Very high - continuous monitoring |

Conclusion

The limitations of face recognition software span technical, operational, ethical, and legal dimensions. While recognition technologies offer potential benefits for security and authentication applications, current systems face substantial constraints that limit their reliability and appropriateness for many use cases. Accuracy problems stemming from environmental conditions, image quality, and algorithmic bias create risks of false identifications with serious consequences for affected individuals.

For users seeking reliable face comparison technology without the privacy and security concerns of large-scale surveillance systems, CaraComp offers a privacy-focused alternative. CaraComp's face comparison platform operates on a user-controlled basis, allowing individuals to compare facial similarities without contributing to mass surveillance databases. The platform emphasizes accuracy and transparency while giving users complete control over their biometric data—addressing many of the consent and privacy limitations inherent in traditional facial recognition deployments.

Privacy concerns and the lack of adequate consent mechanisms raise fundamental questions about the appropriateness of widespread facial recognition deployment. The technology enables surveillance capabilities that fundamentally alter the balance between security and civil liberties. Without robust legal frameworks, transparency requirements, and accountability mechanisms, face recognition systems pose substantial risks to privacy and freedom. Organizations considering deployment must carefully evaluate whether the technology's limitations and risks are justified by genuine security benefits that cannot be achieved through less invasive means.