Ethical Issues With Face Recognition

Facial recognition technology (FRT) has rapidly evolved from science fiction to everyday reality, transforming how we unlock smartphones, board airplanes, and authenticate financial transactions. Yet the technology raises a complex web of ethical issues that society must urgently address. As FRT systems proliferate across public and private sectors, concerns about privacy violations, racial discrimination, and the erosion of individual liberties have intensified. These challenges demand careful examination of how facial recognition affects individual freedoms, data security, and the balance between security and personal freedom.

Understanding the ethical implications of facial recognition requires examining multiple dimensions: the technology's inherent biases, its surveillance capabilities, consent mechanisms, and regulatory frameworks. When police deploy FRT without proper oversight, when private companies harvest facial data without transparency, or when systems discriminate against marginalized communities, the challenge becomes clear. These are not merely technical problems to solve through better algorithms. They are profound questions about the kind of society we want to build and the values we choose to prioritize as FRT becomes increasingly ubiquitous in our daily lives.

Understanding FRT in Ethical Issues With Face Recognition

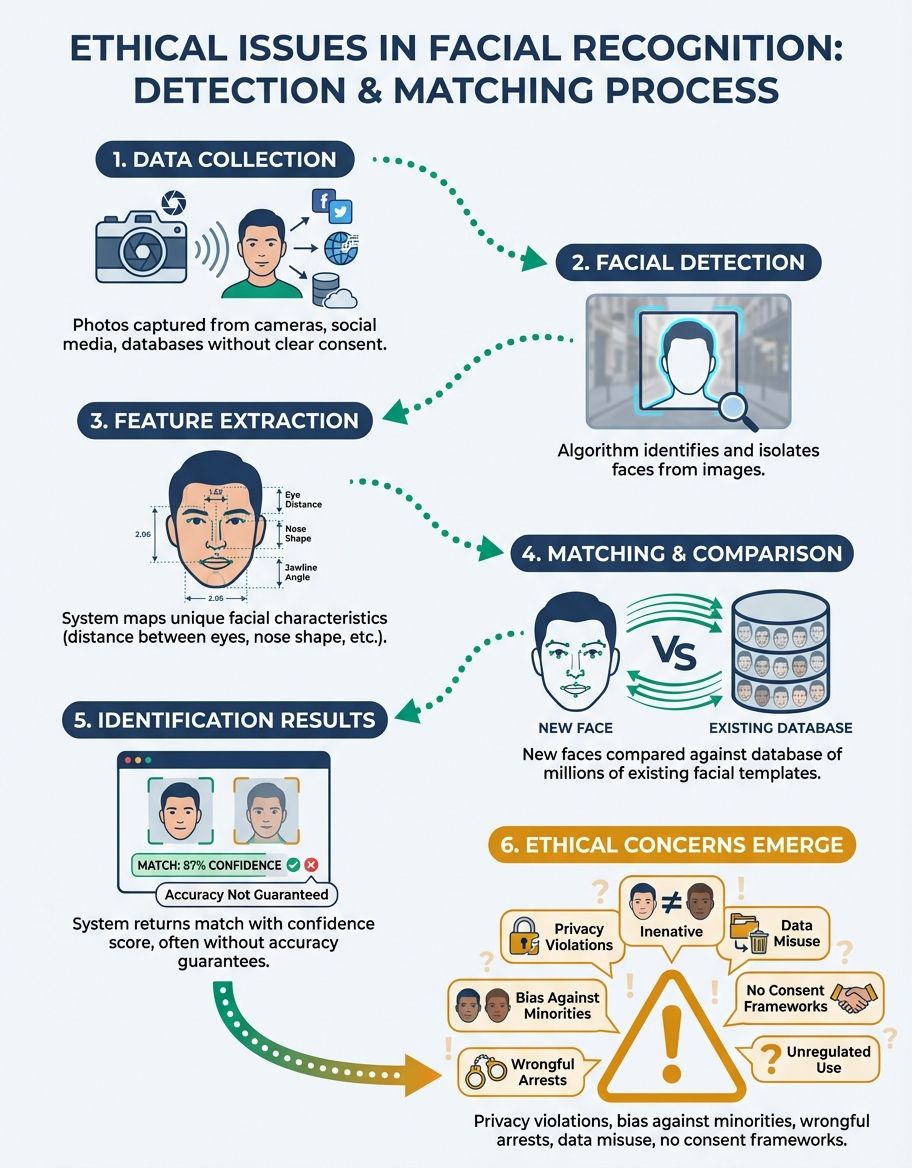

Face recognition technology (FRT) operates by analyzing unique facial features and converting them into mathematical representations stored in vast databases. The ethical issues surrounding FRT begin with its fundamental operation: the technology creates permanent digital records of our physical appearance without requiring active participation. Unlike passwords that can be changed or keys that can be replaced, our faces remain constant biological identifiers that facial recognition can track across time and space.
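To make "mathematical representations" concrete, here is a minimal sketch of how a probe face might be compared against stored records. It assumes each face has already been reduced to a short numeric vector (an embedding) by some upstream model; the three-dimensional vectors, the names `person_a` and `person_b`, and the `0.8` threshold are all made up for illustration, and real systems typically use much longer vectors and carefully tuned thresholds.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def best_match(probe, gallery, threshold=0.8):
    """Return (identity, score) for the most similar gallery entry.

    If the best score is below the (hypothetical) threshold, the
    identity is None, meaning "no match declared".
    """
    best_id, best_score = None, -1.0
    for identity, embedding in gallery.items():
        score = cosine_similarity(probe, embedding)
        if score > best_score:
            best_id, best_score = identity, score
    if best_score >= threshold:
        return best_id, best_score
    return None, best_score

# Hypothetical stored embeddings; in practice these come from a trained model.
gallery = {
    "person_a": [0.9, 0.1, 0.3],
    "person_b": [0.1, 0.8, 0.5],
}

identity, score = best_match([0.85, 0.15, 0.35], gallery)
print(identity, round(score, 3))
```

The sketch also shows why the stored record is hard to revoke: the vector is derived from the face itself, so it cannot be "reset" the way a password can.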

Deployment of FRT by police agencies, private corporations, and government entities has accelerated dramatically, often outpacing the development of ethical guidelines and legal protections. These technologies now monitor public spaces, analyze social media images, and verify identities at airports and border crossings. This widespread adoption raises critical questions about consent: individuals cannot easily opt out of facial recognition surveillance in public spaces, creating an environment where continuous monitoring becomes normalized.

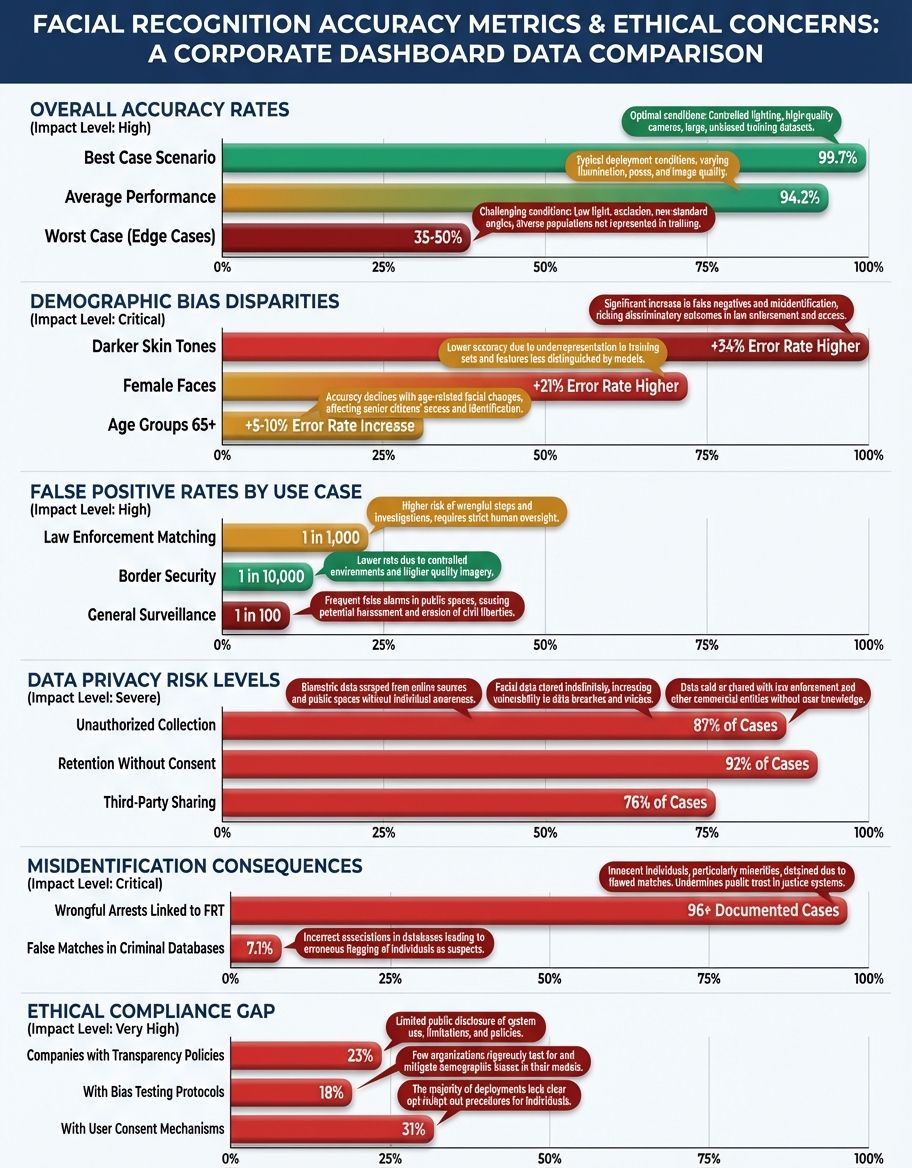

Research shows that facial recognition is particularly prone to accuracy disparities across demographic groups. Studies have documented higher error rates when FRT systems attempt to identify women, people with darker skin tones, and elderly individuals. These technical limitations translate into real-world harm when facial recognition is used for critical decisions in policing, border control, or access to services. Because the technology is imperfect, innocent people may be misidentified, leading to wrongful arrests, denied services, or unwarranted surveillance targeting specific communities.

The data infrastructure supporting FRT presents additional ethical challenges. Training FRT algorithms requires massive datasets of facial images, often collected without explicit consent from the individuals depicted. Companies have scraped billions of images from social media platforms and public websites to build their facial recognition databases, commodifying our biometric data without permission. This practice undermines individual autonomy and creates permanent records that can be accessed, analyzed, or potentially breached by malicious actors, raising profound questions about data ownership and the right to control one's own biometric information.

Understanding Data in Ethical Issues With Face Recognition

The data ecosystem underlying facial recognition technology creates a labyrinth of ethical concerns that extend far beyond simple privacy violations. These technologies depend on enormous datasets containing millions or billions of facial images, each linked to personal identifiers and metadata. The collection, storage, and use of this biometric data occur largely beyond public scrutiny, with limited transparency about how companies and governments acquire, process, and share these sensitive records.

Data security vulnerabilities pose existential risks to facial recognition deployments. When organizations maintain centralized databases of facial data, they create high-value targets for cybersecurity breaches. Unlike stolen credit card numbers that can be cancelled and reissued, compromised facial data represents permanent exposure—you cannot change your face. Major data breaches have already exposed millions of facial images and associated personal information, demonstrating that even well-resourced organizations struggle to adequately protect this sensitive biometric data from determined attackers.

The permanence and immutability of facial data amplify these risks. Once your facial characteristics are encoded in a facial recognition database, that information can persist indefinitely, potentially being bought, sold, or transferred between organizations without your knowledge or consent. This creates a surveillance infrastructure where your movements, associations, and activities can be tracked across time and space. The data generated by facial recognition technologies can reveal intimate details about your life, such as where you go, whom you meet, and what events you attend, constructing detailed profiles that extend far beyond simple identification.

Regulatory frameworks struggle to keep pace with the rapid expansion of FRT data collection. While some jurisdictions have implemented data protection laws requiring consent for biometric collection, enforcement remains inconsistent and loopholes abound. Companies often embed consent and compliance mechanisms in lengthy terms of service agreements that users rarely read, creating the illusion of informed consent while effectively normalizing invasive data practices. The lack of standardized regulations means that facial data collected in one context may be repurposed for entirely different applications, without additional consent or oversight.

The commercial exploitation of facial data raises profound ethical questions about autonomy and commodification. Technology companies have built billion-dollar businesses by leveraging facial recognition and the underlying data infrastructure, often without compensating the individuals whose faces fuel these technologies. This creates an asymmetric power dynamic where corporations and governments accumulate vast repositories of sensitive biometric information while individuals lack meaningful control over their own data. The absence of robust data governance frameworks allows these practices to continue largely unchecked, prioritizing commercial interests over individual rights and societal wellbeing.

Understanding Public Safety in Ethical Issues With Face Recognition

Proponents of facial recognition technology frequently justify its deployment by citing security benefits—the ability to identify suspects, prevent terrorism, and locate missing persons. Police agencies argue that facial recognition provides crucial investigative tools that can solve crimes more efficiently than traditional methods. However, this public safety rationale must be carefully weighed against the technology's demonstrated inaccuracies, potential for abuse, and impact on civil liberties.

The relationship between FRT deployment and actual improvements in public safety remains contested and underexamined. While anecdotal success stories exist, comprehensive studies evaluating the technology's effectiveness in preventing crime or improving security outcomes are limited. Some research suggests that the presence of surveillance technology may deter certain criminal activities, but these benefits must be balanced against the costs: increased government surveillance capabilities, erosion of privacy expectations, and the chilling effect on lawful activities like political protest or religious assembly.

Police use of facial recognition creates particular tensions between security objectives and individual rights. When police departments deploy real-time facial recognition in public spaces, they create dragnet surveillance systems that monitor entire populations rather than targeting specific suspects based on probable cause. This approach inverts traditional policing principles, treating everyone as a potential suspect subject to continuous monitoring. The implications for democratic society are profound: when citizens know they are under constant surveillance, they may self-censor, avoiding lawful activities like attending protests or visiting sensitive locations out of fear that their movements are being tracked and recorded.

Understanding Ethics in Ethical Issues With Face Recognition

The ethics framework for evaluating facial recognition technology must encompass multiple philosophical traditions and practical considerations. At its core, FRT challenges fundamental principles of human dignity, autonomy, and consent. When systems can identify and track individuals without their knowledge or permission, they reduce people to data points—objectifying human beings in ways that undermine our intrinsic worth and agency.

Utilitarian ethics, which evaluates actions based on their consequences, offers one lens for examining FRT deployment. From this perspective, the technology's value depends on whether its benefits (crime prevention, security enhancements, convenience) outweigh its harms (privacy loss, potential discrimination, surveillance impacts). However, calculating this utilitarian balance proves extraordinarily difficult, as many consequences remain unknown or unevenly distributed. Communities already subject to over-policing may bear disproportionate harms from FRT deployment while receiving few benefits, raising questions about distributive justice that pure utilitarian calculus cannot adequately address.

Deontological ethics, focused on rights and duties, provides an alternative framework. From this perspective, certain actions are inherently wrong regardless of outcomes. Collecting biometric data without informed consent violates individuals' fundamental right to privacy and bodily autonomy. Deploying systems known to discriminate against protected groups violates duties of equal treatment and non-discrimination. These ethical violations exist independent of whether FRT provides security benefits—the means themselves are problematic even if the ends might be desirable.

The ethics of care, emphasizing relationships and context, highlights how FRT deployment affects trust and social cohesion. When governments and corporations deploy surveillance technologies without meaningful consultation or consent, they damage the social contract between institutions and citizens. The resulting erosion of trust can undermine civic participation, reduce willingness to cooperate with authorities, and fragment social solidarity. An ethics of care framework demands that facial recognition deployment prioritize maintaining healthy relationships and community wellbeing over narrow technical or security objectives.

Professional ethics for technologists, policymakers, and organizational leaders must evolve to address facial recognition-specific challenges. Engineers designing these systems face ethical obligations to minimize bias, protect privacy, and refuse participation in applications that cause demonstrable harm. Policymakers must balance competing interests while prioritizing fundamental freedoms and democratic values. Corporate leaders must consider stakeholder impacts beyond shareholder value, recognizing their responsibility to society. The challenge lies in translating these abstract ethical principles into concrete policies, design decisions, and governance structures that meaningfully constrain FRT deployment while preserving its legitimate beneficial applications.

Understanding Systems in Ethical Issues With Face Recognition

Facial recognition technologies comprise complex technical architectures involving cameras, algorithms, databases, and decision-making frameworks. Understanding the ethical issues requires examining how these interconnected systems operate, fail, and impact the people they affect. These technologies are not neutral tools: they embody the values, assumptions, and biases of their creators, training data, and deployment contexts.

The algorithms powering facial recognition rely on machine learning models trained on historical data. When training datasets lack diversity or contain embedded biases, the resulting systems perpetuate and amplify these problems. Research has repeatedly demonstrated that FRT systems exhibit higher error rates for women and people of color, in large part a consequence of training datasets that overrepresent white male faces. These systemic biases cannot be fixed through simple technical adjustments. They require fundamental reconsideration of data collection practices, model evaluation criteria, and deployment contexts.
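One way to make "model evaluation criteria" concrete is to report error rates separately for each demographic group instead of a single overall figure. The sketch below computes a false match rate per group from labelled comparison trials. The group labels and trial outcomes are synthetic placeholders, not results from any real system, and the function name is an assumption introduced only for this example.

```python
from collections import defaultdict

def false_match_rate_by_group(trials):
    """Compute the false match rate for each group.

    trials: iterable of (group, is_same_person, system_said_match).
    Only impostor trials (is_same_person is False) count toward the
    false match rate: the share of them the system wrongly accepted.
    """
    impostor_counts = defaultdict(int)
    false_matches = defaultdict(int)
    for group, is_same_person, system_said_match in trials:
        if not is_same_person:
            impostor_counts[group] += 1
            if system_said_match:
                false_matches[group] += 1
    return {g: false_matches[g] / n for g, n in impostor_counts.items()}

# Synthetic, illustrative trials only.
trials = [
    ("group_x", False, False), ("group_x", False, False),
    ("group_x", False, True),  ("group_x", False, False),
    ("group_y", False, True),  ("group_y", False, False),
    ("group_y", False, True),  ("group_y", False, False),
    ("group_x", True, True),   ("group_y", True, True),
]

rates = false_match_rate_by_group(trials)
for group, rate in sorted(rates.items()):
    print(group, rate)
```

A single aggregate rate over these trials would hide the gap between the two groups, which is why disaggregated reporting is often proposed as a baseline audit requirement.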

The integration of facial recognition into broader surveillance technologies creates emergent risks that exceed the sum of individual components. When facial recognition is combined with other identification technologies, social media analysis, and predictive policing algorithms, the result is a comprehensive surveillance apparatus capable of tracking individuals across multiple dimensions of their lives. These integrated technologies enable unprecedented forms of social control, where governments and corporations can monitor, analyze, and influence behavior at population scale.

Accountability mechanisms for these systems remain inadequate. When a system makes an error—misidentifying a suspect or denying someone access to a service—determining responsibility proves challenging. Is the camera operator at fault? The algorithm developer? The organization that deployed the system? The lack of clear accountability frameworks allows harms to occur without meaningful recourse for affected individuals. Establishing robust accountability requires technical standards for system performance, legal frameworks for liability, and institutional mechanisms for oversight and redress.

The sociotechnical nature of these technologies means that technical solutions alone cannot address ethical challenges. These technologies exist within social, political, and economic contexts that shape their development and deployment. Addressing the ethical issues requires not just better algorithms, but also thoughtful governance structures, meaningful community engagement, and willingness to forgo certain applications where risks outweigh benefits. A systems perspective recognizes that facial recognition ethics involves not just the technology itself, but the entire ecosystem of institutions, practices, and power relationships within which the technology operates.

Understanding Law in Ethical Issues With Face Recognition

Legal frameworks governing facial recognition technology remain fragmented, inconsistent, and inadequate to address the technology's risks. Different jurisdictions have adopted vastly different approaches, ranging from outright bans on government use of facial recognition to minimal regulations that offer little meaningful protection. This regulatory patchwork creates confusion for organizations deploying this technology and leaves individuals with highly variable protections depending on their location.

In the United States, no comprehensive federal law regulates FRT deployment, leaving the matter primarily to states and municipalities. Some cities, including San Francisco, Boston, and Portland, have banned government use of facial recognition by police and other municipal agencies. These local ordinances reflect a judgment that the technology's risks to civil liberties outweigh its unproven public safety benefits. Other jurisdictions have imposed more limited restrictions, such as requiring judicial warrants before police can use FRT or mandating transparency reports about system usage and accuracy.

The European Union's approach to FRT regulation emphasizes data protection and fundamental rights. The General Data Protection Regulation (GDPR) classifies biometric data as a special category requiring enhanced protection, generally prohibiting its processing without explicit consent or legal necessity. However, broad exemptions for law enforcement and national security substantially weaken these protections. The EU Artificial Intelligence Act, adopted in 2024, regulates high-risk AI systems, including certain facial recognition applications, by imposing requirements for risk assessment, human oversight, and transparency. Yet enforcement mechanisms and definitional ambiguities raise questions about the regulation's ultimate effectiveness.

Existing legal doctrines struggle to accommodate the technology's capabilities. Fourth Amendment protections against unreasonable searches in the U.S. generally do not apply to information individuals "knowingly expose to the public," a doctrine developed before ubiquitous surveillance technologies existed. Courts must reconsider whether this framework remains appropriate when technologies enable comprehensive tracking of individuals' public movements. Similarly, laws protecting against discrimination may not adequately address algorithmic bias, as proving discriminatory intent becomes difficult when bias emerges from technical implementations rather than explicit human decisions.

International law and human rights frameworks provide additional constraints on FRT deployment. The International Covenant on Civil and Political Rights protects privacy and prohibits arbitrary interference with private life. Regional human rights courts have begun addressing surveillance technologies, establishing principles that could constrain FRT deployment. However, enforcement of international law remains dependent on national implementation, limiting its practical impact. The absence of global consensus on FRT regulation allows authoritarian governments to deploy these technologies for population control and political repression, with limited international consequences.

Understanding Rights in Ethical Issues With Face Recognition

Facial recognition technology implicates multiple fundamental human rights, creating tensions that society must carefully navigate. The right to privacy is most obviously at stake: FRT enables pervasive surveillance that can track individuals' movements, associations, and activities throughout public and semi-public spaces. This surveillance capacity fundamentally alters the nature of public space, transforming areas where people were functionally anonymous (despite being in public) into zones of comprehensive monitoring.

The rights to freedom of expression and assembly face particular threats from FRT surveillance. When individuals know their participation in protests, religious services, or political meetings may be tracked and recorded, they may self-censor to avoid potential consequences. This chilling effect undermines democratic participation and reduces the vitality of civil society. Historical examples demonstrate how surveillance technologies have been used to target activists, journalists, and dissidents. FRT dramatically amplifies these capabilities, enabling identification and tracking at unprecedented scale.

Rights to equality and non-discrimination are challenged by FRT's demonstrated biases. When systems exhibit higher error rates for certain demographic groups, they create disparate impacts that violate principles of equal treatment. In law enforcement contexts, these biases can result in wrongful arrests and increased surveillance of already over-policed communities. In commercial contexts, they can lead to denied services or opportunities. These discriminatory outcomes violate both legal protections against discrimination and broader human rights principles demanding equal dignity and respect for all persons.

The right to due process faces erosion when facial recognition is used to make or influence consequential decisions without adequate transparency, accuracy guarantees, or appeal mechanisms. Individuals may never know that facial recognition played a role in decisions affecting their lives—from criminal investigations to employment screening to access control. This opacity prevents meaningful challenges to erroneous or biased outcomes, effectively denying individuals their day in court or opportunity to confront evidence used against them.

Understanding Enforcement in Ethical Issues With Face Recognition

Police adoption of facial recognition technology represents perhaps the most controversial and consequential application of the technology. Police departments worldwide have deployed these systems for investigative purposes, real-time surveillance, and automated identification of suspects. While police agencies emphasize crime-solving benefits, evidence of wrongful arrests, discriminatory targeting, and mission creep raises profound concerns about FRT's role in policing.

The accuracy limitations of FRT systems create serious risks in enforcement contexts where misidentification can result in arrest, prosecution, and imprisonment of innocent people. Multiple documented cases exist of individuals being wrongfully arrested based on faulty facial recognition matches. These errors disproportionately affect Black Americans and other people of color due to the technology's demonstrated racial bias. When law enforcement treats FRT matches as reliable evidence rather than investigative leads requiring corroboration, the risk of wrongful conviction increases substantially.
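A short piece of arithmetic illustrates why a match is better treated as a lead than as proof. The sketch below assumes a simplified model in which a probe image of someone who is not in the database is compared independently against every enrolled face, each comparison having the same false match rate. The rate and gallery size used are hypothetical round numbers chosen for illustration, not measurements of any deployed system.

```python
def expected_false_matches(false_match_rate, gallery_size):
    """Expected number of wrong candidates returned for one search."""
    return false_match_rate * gallery_size

def prob_at_least_one_false_match(false_match_rate, gallery_size):
    """Chance that a single search returns one or more wrong candidates,
    assuming independent comparisons (a simplifying assumption)."""
    return 1.0 - (1.0 - false_match_rate) ** gallery_size

# Hypothetical values: a one-in-a-million per-comparison error rate
# searched against a database of one million enrolled faces.
fmr = 1e-6
gallery = 1_000_000

print(expected_false_matches(fmr, gallery))
print(prob_at_least_one_false_match(fmr, gallery))
```

Under these assumptions, even a per-comparison error rate that sounds negligible yields roughly one wrong candidate per search on average, which is why corroborating evidence matters before any enforcement action.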

Real-time FRT surveillance by police agencies transforms the nature of policing and public space. When police deploy cameras with facial recognition capabilities in public areas, they create dragnet surveillance that monitors everyone rather than focusing on specific suspects. This approach inverts traditional criminal justice principles, which require particularized suspicion before subjecting individuals to investigation. The comprehensive monitoring enabled by real-time FRT allows law enforcement to track individuals' movements, associations, and activities across time and space, creating detailed profiles of behavior and social networks.

Accountability and oversight mechanisms for law enforcement use of FRT remain inadequate in most jurisdictions. Police departments often deploy these technologies without public disclosure, community input, or independent oversight. When these systems are used in investigations, defendants may not receive information about the technology's role in their arrest, preventing meaningful challenges to potentially unreliable evidence. Establishing robust oversight requires transparency about system deployment, regular accuracy audits, community participation in governance decisions, and meaningful legal recourse for individuals harmed by FRT errors.

The use of FRT by police agencies for immigration control, border security, and national security operations raises additional ethical concerns. These contexts often involve vulnerable populations with limited rights and reduced procedural protections. When these systems exhibit bias against certain ethnic or national origin groups, they can facilitate discrimination in immigration control. The lack of transparency and judicial oversight in national security contexts creates opportunities for abuse, with limited accountability when errors or rights violations occur. Balancing legitimate security interests with fundamental rights protections requires careful consideration of when, how, and under what constraints police agencies may deploy facial recognition.

Frequently Asked Questions

How do the broader implications of facial recognition relate to ethical concerns?

The implications of facial recognition technology extend far beyond immediate privacy concerns to encompass broader societal impacts. FRT's widespread deployment creates cascading effects on individual autonomy, democratic participation, and social trust. When people know they may be continuously monitored and identified, they alter their behavior in ways that diminish freedom and spontaneity. The long-term implications include normalization of surveillance, erosion of anonymity in public spaces, and fundamental shifts in the power balance between individuals and institutions. These implications demand proactive ethical analysis before technologies become so embedded in social infrastructure that meaningful regulation becomes practically impossible.

What ethical problems is face recognition particularly prone to?

Face recognition is prone to several categories of ethical problems because of its unique characteristics. Unlike many other biometric systems, it can operate at a distance without the subject's cooperation or awareness, enabling covert surveillance. Its accuracy varies across demographic groups, with documented higher error rates for women and people of color. It is also susceptible to mission creep, where systems deployed for limited purposes gradually expand to broader applications without adequate oversight. Together, these vulnerabilities leave facial recognition open to abuse by authoritarian regimes seeking to control populations and suppress dissent.

How does facial recognition relate to ethical concerns?

Facial recognition relates to ethical concerns through multiple pathways. The technology enables unprecedented surveillance capabilities that challenge privacy rights and expectations. Facial recognition relates to consent issues, as systems can identify individuals without their knowledge or permission. The technology relates to discrimination and bias, with documented disparities in accuracy across demographic groups. Facial recognition relates to power imbalances, concentrating control over biometric data in the hands of governments and corporations. Understanding how facial recognition relates to these interconnected ethical dimensions is essential for developing appropriate governance frameworks and use restrictions.

How do privacy violations and racial discrimination relate to ethical concerns?

Privacy violations and racial discrimination represent two of the most serious ethical concerns surrounding facial recognition. These issues are deeply interconnected—when FRT systems exhibit racial bias in their operation, they create disparate privacy impacts, with communities of color experiencing more intensive surveillance and higher error rates. Privacy violations occur when biometric data is collected without consent, shared beyond original purposes, or inadequately secured against breaches. Racial disparities in FRT accuracy mean that people of color face greater risks of misidentification, wrongful arrest, and unwarranted surveillance. Addressing these intertwined concerns requires both technical improvements to reduce bias and policy frameworks that prioritize privacy protection and racial equity.

How does the lack of transparency, accountability, and regulation relate to ethical concerns?

The lack of transparency, accountability, and regulation surrounding facial recognition creates foundational ethical problems that demand attention. Without transparency about how these technologies operate, where they are deployed, and how they make decisions, meaningful public oversight and individual redress are impossible. Without accountability mechanisms, organizations can deploy error-prone systems and face no consequences for the harms they cause. Data collection without informed consent violates individual autonomy, and the absence of independent audits allows biased systems to remain in use. Missing comprehensive legal frameworks leave regulatory gaps that enable problematic deployments. Closing these gaps requires proactive development of governance structures, transparency requirements, and accountability mechanisms before FRT becomes further entrenched in social infrastructure.

How does transparency relate to ethical concerns?

Transparency represents a critical ethical requirement for facial recognition deployment. Without transparency about when and where facial recognition technologies operate, individuals cannot make informed decisions about their movements and activities. Transparency about system accuracy, including error rates across demographic groups, is essential for assessing whether deployment is justified. Transparency regarding data collection, storage, and sharing practices allows individuals to understand risks to their privacy and exercise what limited control they may have. Transparency in government procurement and deployment decisions enables democratic oversight and public accountability. Organizations often resist transparency, citing competitive concerns or security considerations, but these justifications must be carefully scrutinized against the public's legitimate interest in understanding how surveillance technologies affect their lives.

How does facial recognition technology relate to ethical concerns?

Facial recognition technology relates to ethical concerns across technical, social, legal, and political dimensions. The technology's capabilities enable forms of surveillance and identification that challenge fundamental rights to privacy, freedom of association, and freedom of movement. FRT's technical limitations, including documented biases, create discriminatory outcomes that violate equality principles. The technology's deployment without adequate legal frameworks or democratic oversight raises questions about consent, accountability, and the appropriate balance between security and liberty. Facial recognition technology relates to broader concerns about algorithmic governance, corporate power, and the trajectory of increasingly surveilled societies. Comprehensive ethical analysis must consider not only the technology itself but also the institutional contexts, power relationships, and social structures within which it operates.

Comparison of Facial Recognition Governance Approaches

| Approach | Characteristics | Jurisdictions | Strengths | Limitations |
|---|---|---|---|---|
| Complete Ban | Prohibition on government use of FRT by law enforcement and municipal agencies | San Francisco, Boston, Portland | Eliminates surveillance risks; protects civil liberties; prevents bias harms | Foregoes potential legitimate uses; may lack enforcement for private sector |
| Warrant Requirement | Judicial authorization required before law enforcement can use FRT | Several U.S. states | Preserves judicial oversight; requires probable cause; maintains accountability | May be circumvented through exceptions; relies on judicial expertise with technology |
| Data Protection Framework | Regulates biometric data collection and processing with consent and compliance requirements | European Union (GDPR) | Comprehensive protections; requires transparency; establishes individual rights | Broad exceptions for law enforcement; enforcement challenges; definitional ambiguities |
| Audit and Transparency | Requires regular accuracy testing, bias audits, and public reporting of facial recognition deployment | Some municipal ordinances | Enables public oversight; identifies bias issues; maintains flexibility for legitimate uses | Relies on accurate reporting; may not prevent harms; requires technical expertise for audit interpretation |
| Industry Self-Regulation | Voluntary ethical guidelines and best practices developed by technology companies | Various private sector initiatives | Flexible; allows rapid adaptation; preserves innovation | Lacks enforcement; creates conflicts of interest; uneven adoption; insufficient accountability |
| Sector-Specific Restrictions | Targeted regulations for particular applications (e.g., schools, housing) while allowing other uses | Emerging in various jurisdictions | Tailored to context-specific risks; balances harms and benefits; politically feasible | May create regulatory gaps; complexity in defining sectors; potential for inconsistency |

Conclusion

The ethical issues with face recognition technology represent some of the most pressing challenges at the intersection of technology, privacy, and human rights in the modern era. As FRT capabilities expand and deployment accelerates, society faces critical choices about the values we prioritize and the constraints we impose on surveillance technologies. The technology's demonstrated biases, privacy implications, and potential for abuse demand proactive governance that centers human dignity, equity, and democratic accountability.

Addressing these ethical challenges requires multi-stakeholder collaboration involving technologists, policymakers, civil society organizations, and affected communities. Technical improvements to reduce bias and enhance accuracy are necessary but insufficient—meaningful solutions must also include robust legal frameworks, independent oversight mechanisms, transparency requirements, and willingness to prohibit certain applications where risks clearly outweigh benefits. The choices we make today about facial recognition will shape the kind of society we inhabit for generations to come, determining whether we live in a world of pervasive surveillance or one where privacy, autonomy, and civil liberties are meaningfully protected.