Face Recognition and Privacy Concerns: FRT Law and Rights

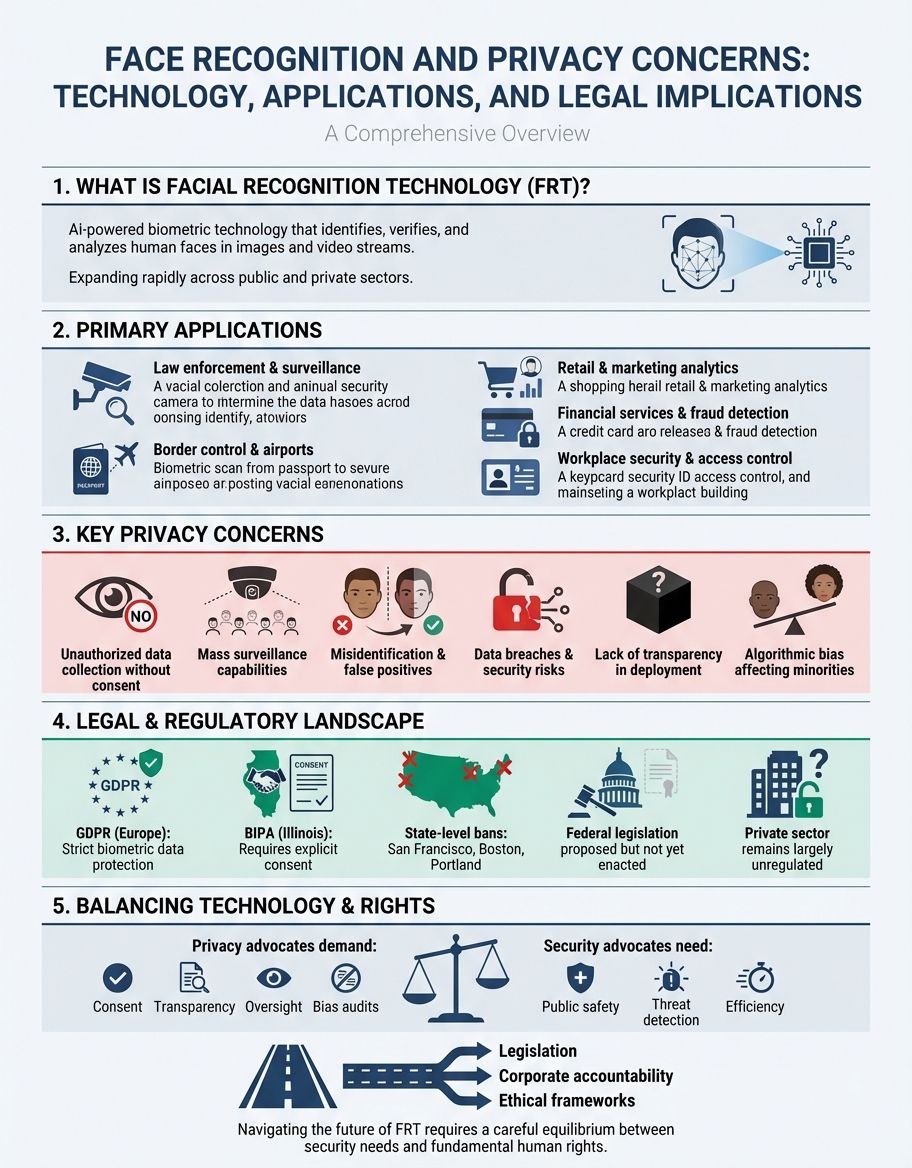

Face recognition and privacy concerns have become increasingly urgent as facial recognition technology (FRT) expands across public and private sectors. The technology raises significant questions about individual rights, data security, and the adequacy of regulatory frameworks. Understanding how FRT systems work, the law governing their use, and their ethical implications is essential for protecting privacy in an era of widespread surveillance.

Privacy and security challenges emerge as government agencies and private companies deploy facial recognition technology without adequate safeguards. From Clearview AI's controversial practices to state-level restrictions, the intersection of these systems and fundamental freedoms demands careful examination. This comprehensive guide explores the privacy implications, legal frameworks, oversight structures, and ethical considerations surrounding face recognition systems.

How Facial Recognition Technology Works

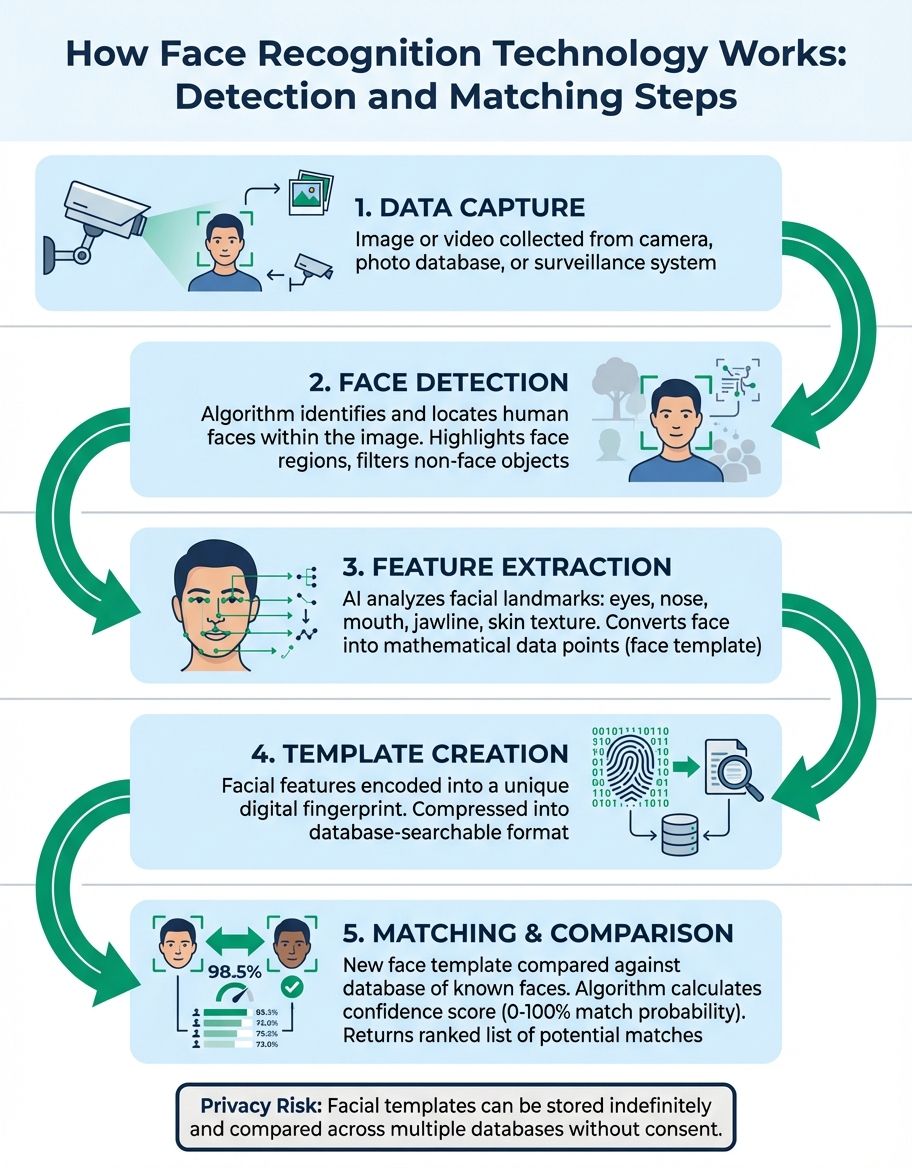

Facial recognition technology (FRT) represents a sophisticated form of biometric identification that analyzes unique facial features. These systems capture facial data through cameras and convert this biometric information into digital templates for matching against databases. The technology's rapid deployment across jurisdictions has amplified privacy concerns.

FRT operates by locating facial landmarks, measuring the distances and relationships between features, and encoding the result as a mathematical representation of the face, often called a template. Because this biometric data is permanently linked to an individual, it raises fundamental questions about consent, retention, and potential misuse. Unlike a password or access card, a face cannot be changed or reissued if its template is compromised, which makes biometric surveillance particularly invasive from a privacy perspective.
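To make the idea of a template concrete, the sketch below treats each template as a short list of numbers and compares two templates with cosine similarity. The four-element vectors, the `cosine_similarity` and `is_match` helpers, and the 0.8 threshold are all hypothetical; production systems typically derive much longer vectors from neural networks and may use other distance measures and calibrated thresholds.

```python
import math

# Hypothetical four-element templates; real templates are far longer.
def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Return the cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("templates must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def is_match(a: list[float], b: list[float], threshold: float = 0.8) -> bool:
    """Declare a match when similarity meets an assumed, illustrative threshold."""
    return cosine_similarity(a, b) >= threshold

enrolled = [0.12, 0.85, 0.31, 0.40]     # template stored at enrollment
new_capture = [0.10, 0.88, 0.29, 0.42]  # same person, slightly different photo
stranger = [0.90, 0.05, 0.60, 0.10]     # a different person

print(is_match(enrolled, new_capture))  # True
print(is_match(enrolled, stranger))     # False
```

Two captures of the same face never yield identical numbers, which is why matching is a similarity decision against a threshold rather than an exact comparison.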

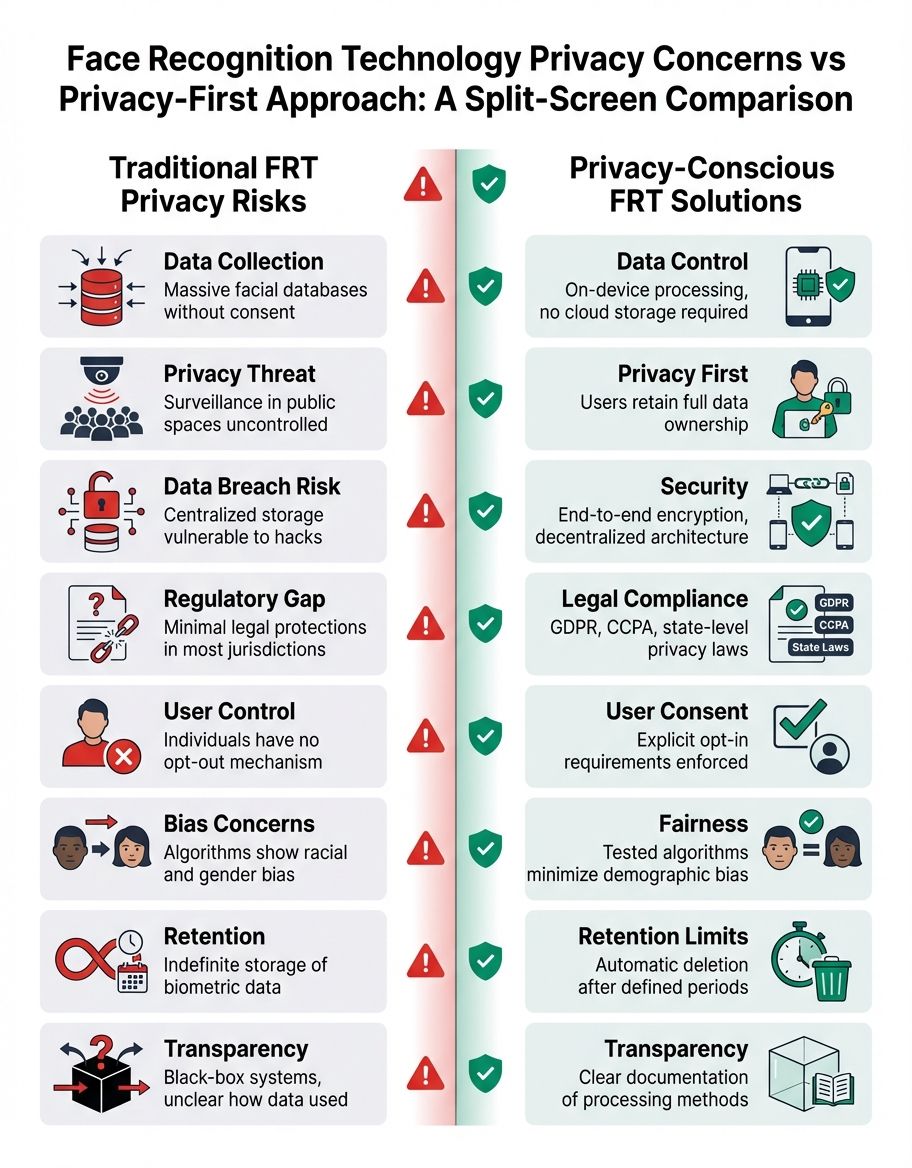

Adoption by government agencies and corporations has outpaced the legal frameworks designed to protect individual rights, and deployment often occurs without transparent oversight or accountability mechanisms. This gap between technological capability and legal controls creates serious privacy risks that affect millions of people daily.

Accuracy varies widely between systems, and evaluations have documented higher error rates for certain demographic groups. These technical limitations compound privacy concerns: a false positive can lead to wrongful identification, discrimination, and erosion of trust. Deploying flawed biometric systems in high-stakes settings such as law enforcement therefore demands rigorous examination.
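The scale problem behind false positives can be shown with simple arithmetic. This is a minimal sketch, assuming the searched person is not enrolled and that each of N comparisons independently produces a false match with probability FMR: the expected number of false matches is N × FMR, and the chance of at least one is 1 − (1 − FMR)^N. The rate and gallery size below are illustrative, not measurements of any real system.

```python
def expected_false_matches(comparisons: int, false_match_rate: float) -> float:
    """Expected number of false matches, assuming independent comparisons."""
    return comparisons * false_match_rate

def prob_at_least_one_false_match(comparisons: int, false_match_rate: float) -> float:
    """Probability that at least one comparison is a false match."""
    return 1.0 - (1.0 - false_match_rate) ** comparisons

# Illustrative assumptions: a 1-in-100,000 false match rate, and one probe
# photo searched against a gallery of 1,000,000 enrolled faces.
fmr = 1e-5
gallery = 1_000_000

print(expected_false_matches(gallery, fmr))
print(f"{prob_at_least_one_false_match(gallery, fmr):.5f}")
```

With these assumed numbers, a single search is expected to produce about ten false matches, and at least one false match is almost certain, even though each individual comparison is very accurate.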

The Legal Landscape for Facial Recognition

The legal landscape governing facial recognition technology remains fragmented, with federal, state, and local jurisdictions taking divergent approaches. Several states have enacted biometric privacy laws that restrict how organizations collect, store, and use biometric data. Illinois' Biometric Information Privacy Act (BIPA), enacted in 2008, set an influential precedent by requiring informed written consent before a private entity captures biometric information.

Legal frameworks addressing FRT must balance public safety interests against fundamental privacy rights. Law enforcement agencies increasingly rely on facial recognition for investigations, which raises Fourth Amendment questions about unreasonable searches. Courts continue to grapple with whether such deployments constitute a search that requires a warrant or other legal safeguards.

State and local lawmakers have responded with a range of regulatory models. Some jurisdictions have banned government use of FRT outright, while others require transparency reports, accuracy testing, and independent audits. These approaches reflect a growing recognition that existing privacy law may be insufficient for the unique challenges of modern biometric surveillance.

International frameworks such as the European Union's General Data Protection Regulation (GDPR) treat biometric data processed to uniquely identify a person as a special category of personal data that requires enhanced protection. The GDPR mandates purpose limitation and data minimization, and gives individuals rights to access and erase their personal data. As FRT capabilities expand globally, harmonizing standards across jurisdictions becomes increasingly critical for protecting privacy and ensuring ethical oversight.

Recognition Technology and Surveillance at Scale

Recognition technology encompasses various systems that identify individuals through facial features, iris patterns, fingerprints, and other unique characteristics. Facial recognition technology represents the most visible and controversial application, particularly when deployed for mass surveillance in public settings. The technical architecture directly impacts privacy, accuracy, and potential for misuse.

Modern systems use artificial intelligence and machine learning algorithms to improve accuracy and speed. These platforms analyze facial data in real time, comparing captured images against databases containing millions of records. This scale and automation enable surveillance that was previously impossible, fundamentally altering the balance between security and privacy.
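Comparing one captured image against a large database is usually called a one-to-many (1:N) search. The sketch below shows the idea with an exhaustive loop over a tiny, invented gallery; the record IDs, vectors, 0.8 threshold, and `search_gallery` helper are hypothetical, and systems holding millions of records would likely rely on specialized indexing rather than a linear scan.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length template vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def search_gallery(probe, gallery, threshold=0.8, top_k=3):
    """Compare one probe template against every enrolled template (1:N search).

    Returns up to top_k (record_id, score) pairs at or above the threshold,
    best first.
    """
    scored = [(rid, cosine_similarity(probe, t)) for rid, t in gallery.items()]
    candidates = [(rid, s) for rid, s in scored if s >= threshold]
    candidates.sort(key=lambda pair: pair[1], reverse=True)
    return candidates[:top_k]

# Hypothetical enrolled templates keyed by record ID.
gallery = {
    "record-001": [0.12, 0.85, 0.31, 0.40],
    "record-002": [0.90, 0.05, 0.60, 0.10],
    "record-003": [0.20, 0.70, 0.45, 0.35],
}
probe = [0.10, 0.88, 0.29, 0.42]  # template from a newly captured image

for record_id, score in search_gallery(probe, gallery):
    print(record_id, round(score, 3))
```

Here both `record-001` and `record-003` clear the assumed threshold, so the search returns a ranked candidate list rather than a single answer; how operators act on such lists is where oversight matters most.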

Privacy concerns intensify as these systems integrate with other data sources, creating comprehensive profiles that track individuals' movements, associations, and behaviors. This aggregation raises questions about function creep—the gradual expansion of FRT beyond its original purpose. Without robust frameworks, such tools risk becoming instruments for pervasive monitoring incompatible with democratic freedoms.

The technical limitations, including bias, false positives, and security vulnerabilities, amplify privacy and ethical concerns. Cybersecurity experts warn that centralized databases present attractive targets for hackers and hostile actors. The permanent nature of facial data means breaches could compromise individuals' privacy indefinitely, as faces cannot be reissued like passwords or credit cards.

Facial Recognition Technology in Practice

Facial recognition technology has evolved from experimental systems to ubiquitous tools deployed by government agencies, retailers, social media platforms, and law enforcement. This rapid proliferation of FRT occurs despite unresolved questions about accuracy, fairness, and respect for individual rights. Understanding how the technology functions and its limitations is essential for evaluating privacy implications and developing appropriate safeguards.

Facial recognition typically operates in three stages: face detection locates faces within an image, feature extraction converts each face's distinctive characteristics into a numerical template, and matching compares that template against stored templates. Each stage can introduce errors, and those errors compound across the pipeline. Independent evaluations, including a 2019 NIST study of demographic effects, found that many algorithms have higher error rates for women, people of color, and older individuals, raising serious concerns about bias and discriminatory impact.
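The point that errors compound across stages can be illustrated with a back-of-the-envelope calculation. Assuming, as a simplification, that the stages fail independently, the chance that a face passes through the whole pipeline correctly is the product of the per-stage success rates. The stage names follow the paragraph above; the rates are invented for illustration.

```python
import math

def end_to_end_success(stage_success_rates) -> float:
    """Probability a face passes every stage, assuming independent stages."""
    return math.prod(stage_success_rates)

# Hypothetical per-stage success rates, chosen only to show the arithmetic.
stages = {"detection": 0.98, "feature_extraction": 0.97, "matching": 0.95}
overall = end_to_end_success(stages.values())
print(f"{overall:.3f}")
```

Even with every stage at or above 95 percent, the assumed pipeline succeeds only about 90 percent of the time. In practice errors are often correlated (poor lighting, for example, can degrade every stage at once), so the independence assumption is itself a simplification.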

The deployment of facial recognition technology in public spaces creates an environment of constant surveillance that can chill free expression and association. When people know their movements are tracked, they may avoid lawful activities such as attending protests or religious gatherings, or visiting medical facilities. This chilling effect extends beyond individual identification to affect fundamental democratic freedoms.

Government use often lacks transparency, making it difficult for citizens to know when and how their data is captured and analyzed. Some agencies have used services such as Clearview AI to search databases compiled without the consent of the people pictured, bypassing traditional oversight processes. These practices show how facial recognition can operate outside established legal frameworks, undermining privacy protections and accountability mechanisms.

Clearview AI: A Case Study

Clearview AI exemplifies the most controversial applications of facial recognition technology, having scraped billions of images from social media and websites without user consent. The company's biometric database enables law enforcement clients to identify individuals from a single photograph, raising unprecedented privacy concerns. These practices have prompted legal challenges, regulatory investigations, and bans in multiple jurisdictions.

The controversy highlights fundamental questions about consent, data ownership, and the boundaries of biometric collection. While the company claims its scraping activities are protected under free speech principles, privacy advocates argue that aggregating facial data at this scale creates surveillance infrastructure incompatible with democratic values. Courts are beginning to address whether existing law adequately regulates such comprehensive biometric databases.

Data protection regulators in several countries have found that Clearview violated their laws and ordered it to stop processing residents' data, and litigation under Illinois' BIPA has restricted its business in the United States. These legal actions reflect a growing recognition that traditional frameworks may not encompass the unique threats posed by mass-scale systems. The case has catalyzed discussions about whether new governance models are needed to protect individuals from pervasive biometric surveillance.

Beyond regulatory concerns, Clearview illustrates the ethical challenges of deploying facial recognition without meaningful consent or oversight. Because biometric data is permanent, individuals have little practical ability to opt out once their faces are in such a database. This irreversibility compounds privacy concerns and underscores the need for robust legal safeguards before such systems are deployed at scale.

Biometric Information and Its Permanence

Biometric information refers to unique physical or behavioral characteristics used to identify individuals, with facial features representing one of the most commonly captured biometric forms. Unlike traditional identifiers like Social Security numbers or passwords, biometric data derives from inherent human attributes that cannot be changed if compromised. This permanence makes biometric identifiers particularly sensitive from a privacy and security perspective.

The collection and storage of biometric information through facial recognition technology creates long-term privacy risks that extend beyond immediate identification. Agencies and private companies maintain biometric databases for years or decades, raising questions about retention periods, access controls, and eventual deletion of biometric records. Legal frameworks increasingly recognize that biometric information requires enhanced protection compared to other personal data categories.

Governance of biometric data varies significantly across jurisdictions, with some states enacting strict consent and disclosure requirements while others impose minimal restrictions. This patchwork creates compliance challenges and uneven protection for biometric privacy. Privacy advocates argue for comprehensive federal law that would establish baseline protections for biometric information across all recognition applications.

The cybersecurity implications of centralized biometric databases raise serious concerns about data breaches and unauthorized access. When hackers compromise systems containing biometric information, the impact persists indefinitely because individuals cannot change their faces. This unique vulnerability of biometric data demands robust security measures, encryption protocols, and incident response procedures that many current systems lack.

Face Detection and Tracking Systems

Detection systems form the foundational layer of facial recognition technology, identifying the presence and location of faces within images or video streams. They enable real-time surveillance across public spaces, commercial venues, and other facilities. Their accuracy and reliability directly affect privacy, because detection errors propagate into the later identification steps and contribute to misidentification and erosion of trust.
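Detection and matching both rely on a confidence threshold, and moving it trades one kind of error for the other. The sketch below uses invented similarity scores for genuine pairs (same person) and impostor pairs (different people) to show how raising the threshold lowers false matches but raises false non-matches. The `error_rates` helper and all scores are hypothetical.

```python
def error_rates(genuine_scores, impostor_scores, threshold):
    """Return (false_non_match_rate, false_match_rate) at a given threshold."""
    # Genuine pairs scoring below the threshold are missed (false non-matches).
    fnmr = sum(s < threshold for s in genuine_scores) / len(genuine_scores)
    # Impostor pairs scoring at or above the threshold are false matches.
    fmr = sum(s >= threshold for s in impostor_scores) / len(impostor_scores)
    return fnmr, fmr

# Invented similarity scores, for illustration only.
genuine = [0.91, 0.88, 0.95, 0.72, 0.85, 0.93, 0.79, 0.90]
impostor = [0.35, 0.52, 0.61, 0.74, 0.28, 0.45, 0.81, 0.40]

for t in (0.6, 0.75, 0.9):
    fnmr, fmr = error_rates(genuine, impostor, t)
    print(f"threshold={t}: false non-match={fnmr:.3f}, false match={fmr:.3f}")
```

With these scores, a threshold of 0.6 misses no genuine pairs but falsely matches three of eight impostor pairs, while 0.9 eliminates false matches at the cost of missing half of the genuine pairs. Where an operator sets the threshold is therefore a policy choice with privacy consequences, not only a technical one.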

Advanced algorithms leverage deep learning to identify faces under various conditions, including partial occlusion, poor lighting, and angled perspectives. While these technical improvements enhance accuracy, they also enable more pervasive surveillance that compounds privacy concerns. The ability to track individuals across multiple cameras and locations creates comprehensive movement records that reveal sensitive information about behaviors, associations, and beliefs.

Integration with other technologies, including license plate readers, mobile device tracking, and social media analysis, enables the fusion of multiple data streams. This aggregation magnifies the privacy implications, as facial recognition becomes part of a broader surveillance ecosystem that can monitor most aspects of public life. Legal frameworks struggle to address integrated platforms whose reach exceeds traditional notions of privacy invasion.
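At its core, fusion is a join on identity. The sketch below assumes each source's records have already been resolved to a common person identifier (for instance, a plate linked to a registered owner) and simply merges them into a time-ordered movement record. All records, identifiers, and the `build_timelines` helper are hypothetical.

```python
from collections import defaultdict

# Hypothetical sightings from separate systems, each already resolved to a
# person identifier. All values are invented for illustration.
face_matches = [("person-17", "2024-03-01T08:02", "Main St camera 4")]
plate_reads = [("person-17", "2024-03-01T08:15", "Garage entrance, plate read")]
phone_pings = [("person-17", "2024-03-01T12:40", "Cell sector near clinic")]

def build_timelines(*sources):
    """Merge (person_id, timestamp, place) records into per-person timelines."""
    timelines = defaultdict(list)
    for source in sources:
        for person_id, timestamp, place in source:
            timelines[person_id].append((timestamp, place))
    for events in timelines.values():
        # Same-format ISO-8601 strings sort in chronological order.
        events.sort()
    return dict(timelines)

timeline = build_timelines(face_matches, plate_reads, phone_pings)["person-17"]
for timestamp, place in timeline:
    print(timestamp, place)
```

No single source reveals much on its own; merged, three sightings hint at a commute and a medical visit, which is the aggregation risk the paragraph describes.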

Public deployment often occurs without notice, consent, or meaningful opportunity for individuals to opt out. This lack of transparency and control represents a fundamental challenge to privacy in an era of ubiquitous facial recognition technology. Ethical governance requires clear disclosure of when and where systems operate, what is captured, how long it's retained, and who has access.

Privacy Law and Governance Frameworks for FRT

Effective governance of facial recognition technology requires comprehensive legal frameworks that address consent, transparency, accountability, and individual rights. Current privacy law in many jurisdictions predates modern FRT and fails to account for its unique capabilities and risks. Legislative efforts at state and federal levels aim to establish legal boundaries while preserving legitimate safety and security applications.

Privacy and security experts advocate for governance models that include mandatory impact assessments, accuracy testing, bias audits, and disclosure requirements before deploying systems. These procedural safeguards help ensure that FRT implementations consider individual rights, minimize unnecessary biometric data collection, and establish accountability when systems fail or are misused.
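A bias audit can start by computing the false match rate separately for each demographic group at the operating threshold and comparing the results. This is a minimal sketch with invented impostor scores and generic group labels; the helper name is hypothetical, and a real audit would need large, carefully labelled test sets, confidence intervals, and false non-match rates as well.

```python
from collections import defaultdict

def false_match_rate_by_group(trials, threshold):
    """Compute the false match rate per demographic group.

    trials: iterable of (group, score) for impostor pairs, i.e. pairs of
    images known to show two different people.
    """
    totals = defaultdict(int)
    false_matches = defaultdict(int)
    for group, score in trials:
        totals[group] += 1
        if score >= threshold:
            false_matches[group] += 1
    return {g: false_matches[g] / totals[g] for g in totals}

# Invented impostor scores for two generic groups.
trials = [
    ("group_a", 0.41), ("group_a", 0.55), ("group_a", 0.74), ("group_a", 0.30),
    ("group_b", 0.71), ("group_b", 0.83), ("group_b", 0.49), ("group_b", 0.77),
]
rates = false_match_rate_by_group(trials, threshold=0.7)
worst, best = max(rates.values()), min(rates.values())
print(rates)
print("disparity ratio:", worst / best if best else float("inf"))
```

Here `group_b` is falsely matched three times as often as `group_a` at the same threshold, the kind of disparity an audit is meant to surface before deployment.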

Legal frameworks must also address the retention and sharing of facial biometric data, setting clear limits on how long records may be stored and under what circumstances they may be transferred to third parties. Without such restrictions, these systems accumulate records that persist indefinitely, compounding privacy concerns and enabling function creep beyond their original purposes.
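Retention limits are easiest to enforce when each record carries the dates the rule depends on. The sketch below loosely models the "whichever comes first" structure of BIPA's destruction requirement (when the collection purpose is satisfied, or within three years of the individual's last interaction). The three-year window is approximated as 3 × 365 days, and the record type, field names, and dates are hypothetical; this is a simplified illustration of a purge check rather than a compliance implementation.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Assumed outer retention limit, approximating three years as 3 x 365 days.
MAX_RETENTION = timedelta(days=3 * 365)

@dataclass
class BiometricRecord:
    record_id: str
    last_interaction: date
    purpose_satisfied_on: Optional[date] = None  # None while the purpose is ongoing

def destruction_deadline(record: BiometricRecord) -> date:
    """Earliest of: purpose satisfied, or MAX_RETENTION after last interaction."""
    outer_limit = record.last_interaction + MAX_RETENTION
    if record.purpose_satisfied_on is None:
        return outer_limit
    return min(record.purpose_satisfied_on, outer_limit)

def records_due_for_deletion(records, today: date) -> list[str]:
    """Return IDs of records whose destruction deadline is today or earlier."""
    return [r.record_id for r in records if destruction_deadline(r) <= today]

records = [
    BiometricRecord("emp-1", last_interaction=date(2021, 1, 10)),
    BiometricRecord("emp-2", last_interaction=date(2024, 5, 1),
                    purpose_satisfied_on=date(2024, 6, 30)),
    BiometricRecord("emp-3", last_interaction=date(2024, 5, 1)),
]
print(records_due_for_deletion(records, today=date(2024, 7, 1)))
```

A scheduled job built on `records_due_for_deletion` would flag `emp-1` (outer limit passed) and `emp-2` (purpose satisfied), while `emp-3` remains within both limits.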

International cooperation on standards becomes increasingly important as facial recognition technology operates across borders. The development of common principles around consent, transparency, accuracy, and accountability would strengthen privacy protection globally while enabling responsible innovation. Harmonization efforts must balance cultural differences in privacy expectations with universal recognition of fundamental rights.

Frequently Asked Questions About Face Recognition and Privacy Concerns

What are the serious privacy concerns with facial recognition technology?

Serious privacy concerns include mass surveillance capabilities, lack of consent for data collection, potential for government overreach, and the permanence of facial information. FRT enables tracking of individuals across public spaces without their knowledge, creating comprehensive records of movements and associations. The technology's deployment often lacks transparency and accountability, allowing uses that may violate individual rights. Cybersecurity risks compound these concerns, as breaches of databases expose information that cannot be changed like passwords.

How does privacy law address facial recognition technology?

Privacy law addressing FRT varies by jurisdiction, with some states enacting specific statutes that require consent and limit retention periods. Federal law provides limited protection, leaving gaps that allow widespread deployment without consistent safeguards. Regulatory frameworks are evolving to require transparency reports, accuracy testing, and restrictions on use, and courts continue to interpret constitutional privacy protections in light of FRT's capabilities, setting precedents that will shape future oversight.

Why are privacy concerns compounded in facial recognition systems?

Privacy concerns are compounded because facial recognition combines multiple threats: data permanence, potential for mass surveillance, integration with other tracking technologies, and demonstrated bias affecting marginalized communities. Unlike traditional surveillance, FRT can operate continuously and automatically, analyzing millions of faces with little or no human review. The aggregation of facial data with location, social media, and transaction records creates comprehensive profiles that reveal intimate details about individuals' lives, beliefs, and relationships.

Why can't faces be encrypted to protect privacy?

Faces cannot be encrypted in the traditional cybersecurity sense because facial recognition requires access to actual facial features to function. Stored templates can be encrypted at rest, but in conventional deployments they must be decrypted for comparison. More fundamentally, faces are publicly visible and cannot be hidden or changed like passwords when compromised. This inherent visibility means individuals cannot control the exposure of their facial information in public spaces where FRT operates.
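Password-style protection does not carry over to faces because it depends on reproducing the secret exactly. The sketch below hashes two hypothetical templates of the same face with SHA-256, standing in for a password hash (real password storage also adds salts and deliberately slow key-derivation functions). The `password_style_digest` helper and the template values are assumptions for illustration. The digests differ even though the templates are nearly identical, so a system holding only such hashes could not match them.

```python
import hashlib

def password_style_digest(template: list[float]) -> str:
    """Hash a template the way a password would be hashed (exact match only)."""
    encoded = ",".join(f"{x:.2f}" for x in template).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()

# Two captures of the same hypothetical face differ slightly.
capture_monday = [0.12, 0.85, 0.31, 0.40]
capture_tuesday = [0.10, 0.88, 0.29, 0.42]

same_digest = password_style_digest(capture_monday) == password_style_digest(capture_tuesday)
max_difference = max(abs(a - b) for a, b in zip(capture_monday, capture_tuesday))

print("digests equal:", same_digest)  # False
print("largest feature difference:", round(max_difference, 2))
```

Because matching needs a similarity computation over usable template values, a conventional system has to hold or recover those values at comparison time, which is the constraint the answer above describes.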

How do privacy and security intersect in facial recognition systems?

Privacy and security intersect critically in FRT systems, as cybersecurity breaches expose information that persists indefinitely. Strong security measures are necessary but insufficient for privacy protection—even perfectly secured systems raise concerns when deployed for mass surveillance. Frameworks must address both technical security against unauthorized access and limitations on authorized uses that threaten individual rights. Balancing security benefits against privacy costs requires careful evaluation.

What problems arise from improper data storage of facial recognition information?

Improper data storage creates multiple risks: unauthorized access by hackers or insiders, indefinite retention beyond legitimate purposes, lack of encryption enabling easy compromise, and sharing with third parties without consent. Government and commercial databases often lack adequate security controls, audit trails, or deletion procedures. When information is stored improperly, breaches can expose millions of individuals to identity theft, surveillance by foreign actors, and permanent compromise of their facial data.

Why does facial recognition raise significant concerns for civil liberties?

Facial recognition raises significant concerns because it enables surveillance at a scale and efficiency previously impossible, fundamentally altering the balance between individual freedom and state power. The technology facilitates tracking of lawful activities like protests, religious observances, and political organizing, chilling free expression and association. Without robust limits, FRT could enable authoritarian governance incompatible with democratic values. The demonstrated bias in systems also threatens equal protection, disproportionately affecting communities already subjected to excessive surveillance.

Comparison: FRT Regulation Approaches

| Jurisdiction | Regulatory Approach | Key Privacy Protections | Enforcement Mechanism | Data Rights |
|---|---|---|---|---|
| Illinois (BIPA) | Biometric-specific privacy statute | Informed written consent required; published retention and destruction schedule; private right of action | Individual lawsuits with statutory damages | Rights to notice and consent; destruction of data under the retention schedule |
| European Union (GDPR) | General data protection law with special-category rules for biometric data | Special-category (sensitive) data classification; purpose limitation; data minimization | Regulatory fines up to €20 million or 4% of worldwide annual turnover, whichever is higher | Access, rectification, erasure, and objection rights |
| San Francisco | Municipal ban on government FRT use | Prohibition on city agency deployment | Administrative enforcement and public oversight | Protection from facial recognition by city agencies |
| Federal (U.S.) | Sector-specific and limited restrictions | Minimal baseline protections; agency-specific policies; proposed legislation pending | Varies by agency and context | Limited federal recognition of biometric privacy rights |
| Texas (CUBI) | Biometric privacy statute focused on commercial use | Notice and consent requirements; destruction obligations | State attorney general enforcement only | Notice and consent rights, but no private right of action |
| China | Extensive deployment with minimal protection | State security prioritized over individual privacy; limited consent requirements | Government discretion; no independent oversight | Minimal recognition of privacy rights against state surveillance |

Conclusion: Balancing Innovation and Privacy in Facial Recognition Technology

Face recognition and privacy concerns will continue to intensify as FRT capabilities expand and deployment becomes more pervasive. Effective governance requires comprehensive frameworks that protect individual rights while enabling responsible innovation. Robust privacy law, transparency requirements, accuracy standards, and accountability mechanisms together provide essential safeguards against the risks of unchecked surveillance.

Policymakers, technology companies, government agencies, and civil society organizations must collaborate to establish ethical principles for deployment. These principles should prioritize consent, minimize unnecessary collection, ensure system accuracy across demographic groups, and create meaningful remedies when FRT causes harm. The challenges posed by these systems demand proactive engagement rather than reactive responses to crises.

Protecting privacy in an era of advanced facial recognition requires vigilance, strong legal protections, and commitment to fundamental values. As capabilities improve and applications multiply, society must decide what level of surveillance is compatible with democratic freedoms. The choices made today will shape privacy, security, and individual autonomy for generations. Understanding these issues enables informed participation in the debates that will determine the balance between technological capability and human rights in the digital age.