Face Recognition Algorithms for Large Datasets

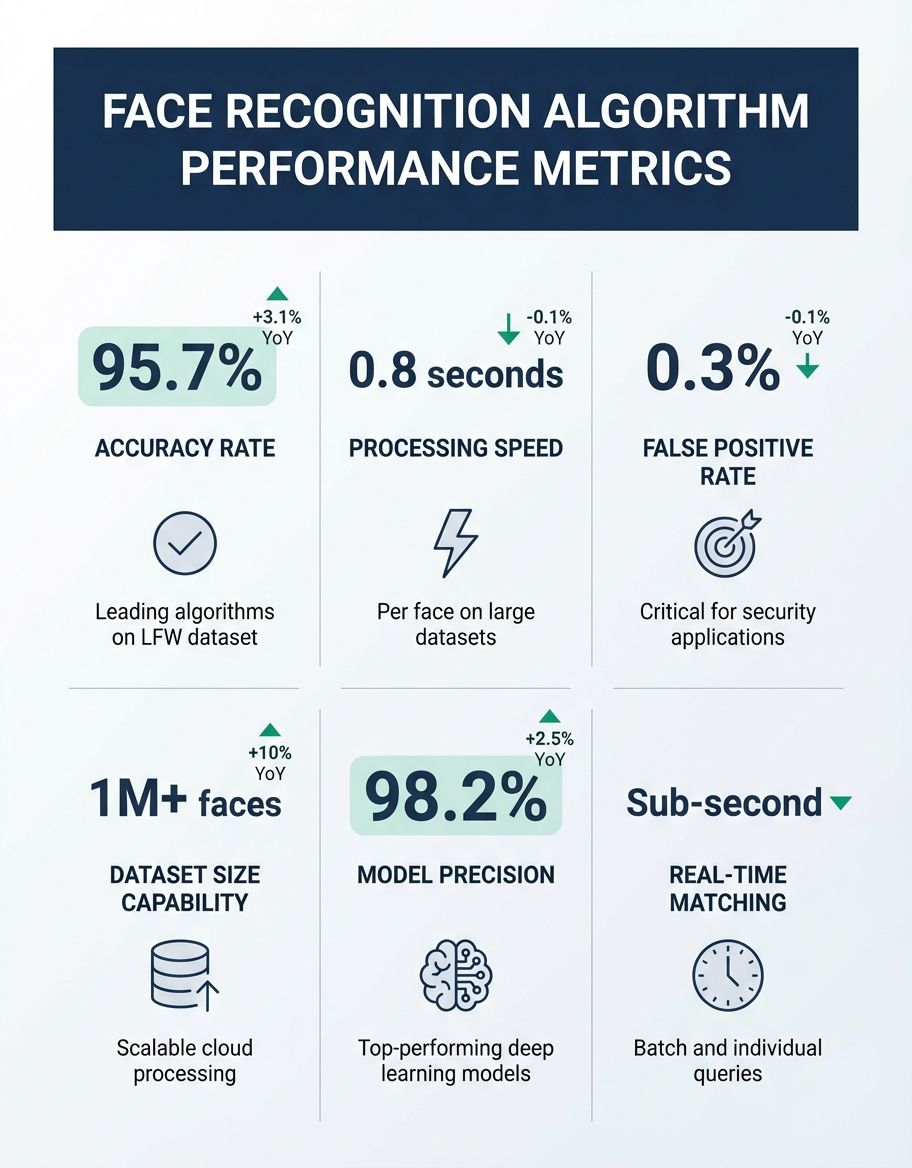

Face recognition algorithms for large datasets have changed how organizations identify and verify individuals across massive collections of facial images. As organizations process millions of faces daily, maintaining high accuracy while managing computational resources becomes critical. Modern facial recognition systems must balance detection speed, model accuracy, and the ability to scale across billions of embeddings stored in vector databases like Milvus.

The research community has developed sophisticated methods to handle the unique challenges that emerge when working with large datasets. Traditional face recognition approaches that perform well on small collections often fail when confronted with millions of identity profiles. This review examines the most effective models and techniques that enable accurate facial recognition at scale, exploring both the algorithmic innovations and the infrastructure requirements necessary for success.

Understanding Milvus in Face Recognition Algorithms for Large Datasets

Milvus has emerged as a cornerstone technology for implementing face recognition algorithms for large datasets. This open-source vector database specializes in storing and retrieving the high-dimensional representations that deep learning models generate from facial images. When a facial recognition system processes an image, it converts that face into a mathematical vector, typically containing 128, 256, or 512 dimensions, that captures the unique features of that identity.

The power of Milvus lies in its ability to perform similarity searches across billions of these facial embeddings in milliseconds. Traditional databases struggle with this task because they're optimized for exact matches rather than finding the closest mathematical neighbors. Milvus uses specialized indexing methods like HNSW (Hierarchical Navigable Small World) and IVF (Inverted File) that organize vectors in ways that dramatically accelerate search operations.
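To make the idea of "closest mathematical neighbors" concrete, here is a minimal sketch of the exact (brute-force) cosine search that indexes such as HNSW and IVF approximate. It uses plain NumPy rather than the Milvus API, and the gallery is random data standing in for real embeddings; the function names are illustrative only.

```python
import numpy as np

def normalize(vectors: np.ndarray) -> np.ndarray:
    """Scale each row to unit length so a dot product equals cosine similarity."""
    norms = np.linalg.norm(vectors, axis=1, keepdims=True)
    return vectors / np.clip(norms, 1e-12, None)

def exact_top_k(query: np.ndarray, gallery: np.ndarray, k: int = 5):
    """Return (indices, scores) of the k gallery rows most similar to the query."""
    q = normalize(query[None, :])[0]
    g = normalize(gallery)
    scores = g @ q                     # one cosine score per stored face
    top = np.argsort(-scores)[:k]      # highest similarity first
    return top, scores[top]

rng = np.random.default_rng(0)
gallery = rng.normal(size=(1000, 128))               # hypothetical stored embeddings
query = gallery[42] + 0.01 * rng.normal(size=128)    # slightly noisy copy of entry 42
indices, scores = exact_top_k(query, gallery, k=3)
print(indices, scores)
```

This scan touches every stored vector, so its cost grows linearly with the collection. That linear cost is what approximate indexes are designed to avoid.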

In practical implementations, Milvus enables face recognition systems to maintain accuracy even as datasets grow from thousands to billions of faces. Organizations working with large datasets typically partition their collections across multiple Milvus nodes, distributing the computational load while maintaining sub-second query times. This architecture allows real-time facial recognition applications to scale horizontally by adding more resources as the number of users increases.
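The partitioning described above follows a scatter-gather pattern: each node returns its own best matches, and a coordinator merges them. The sketch below illustrates that pattern in NumPy under simplifying assumptions (in-memory shards, sequential calls, hypothetical function names). It is not how Milvus is implemented internally.

```python
import heapq
import numpy as np

def shard_top_k(query, shard_vectors, shard_ids, k):
    """Top-k (score, id) pairs from one shard using dot-product similarity."""
    scores = shard_vectors @ query
    best = np.argsort(-scores)[:k]
    return [(float(scores[i]), int(shard_ids[i])) for i in best]

def search_all_shards(query, shards, k=3):
    """Query every shard, then merge the partial results into a global top-k."""
    partial = []
    for vectors, ids in shards:        # a real deployment would issue these in parallel
        partial.extend(shard_top_k(query, vectors, ids, k))
    return heapq.nlargest(k, partial)  # highest scores first

rng = np.random.default_rng(1)
vectors = rng.normal(size=(900, 64))
vectors /= np.linalg.norm(vectors, axis=1, keepdims=True)
ids = np.arange(900)
# Three hypothetical nodes, each holding a third of the collection.
shards = [(vectors[i::3], ids[i::3]) for i in range(3)]
results = search_all_shards(vectors[500], shards, k=3)
print(results)
```

Because every shard contributes its own top k, the merged list matches what a single exact search over the whole collection would return.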

Understanding Facial Recognition in Face Recognition Algorithms for Large Datasets

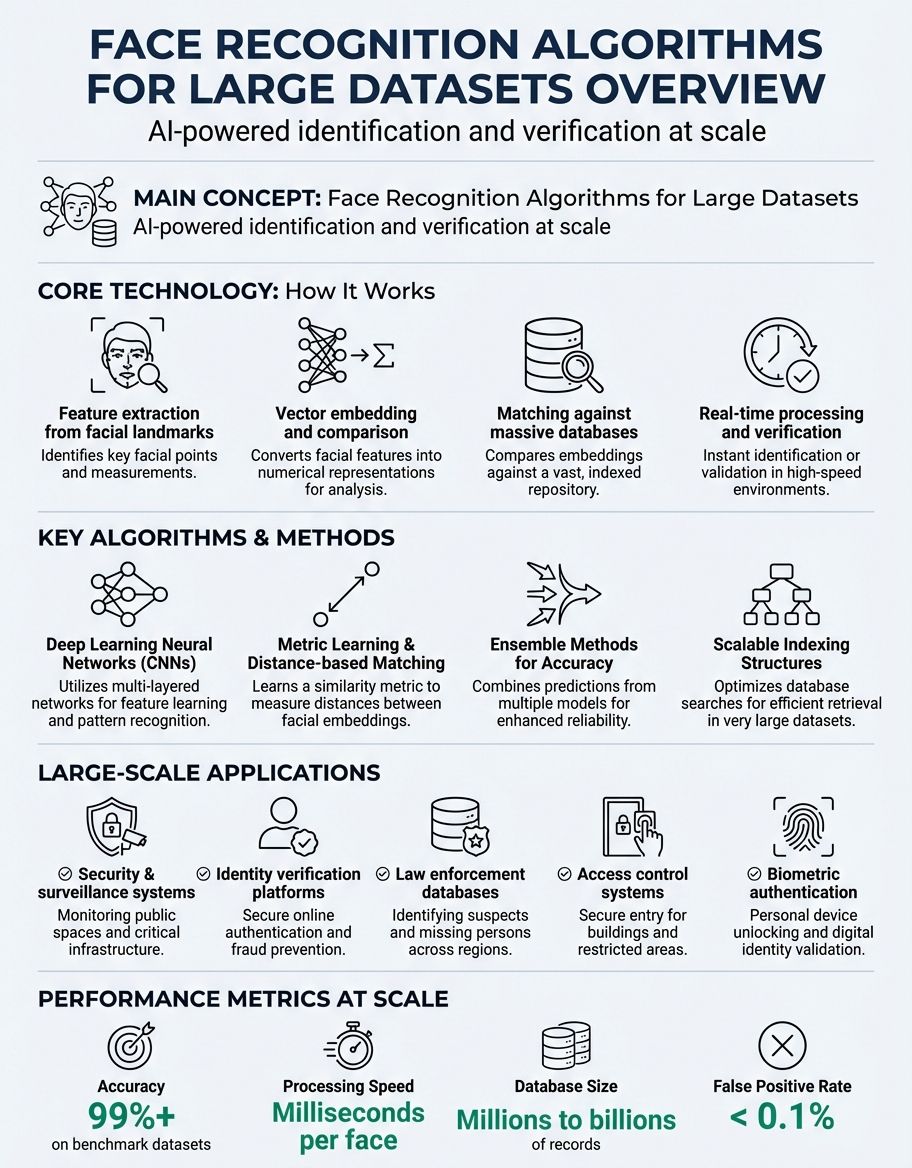

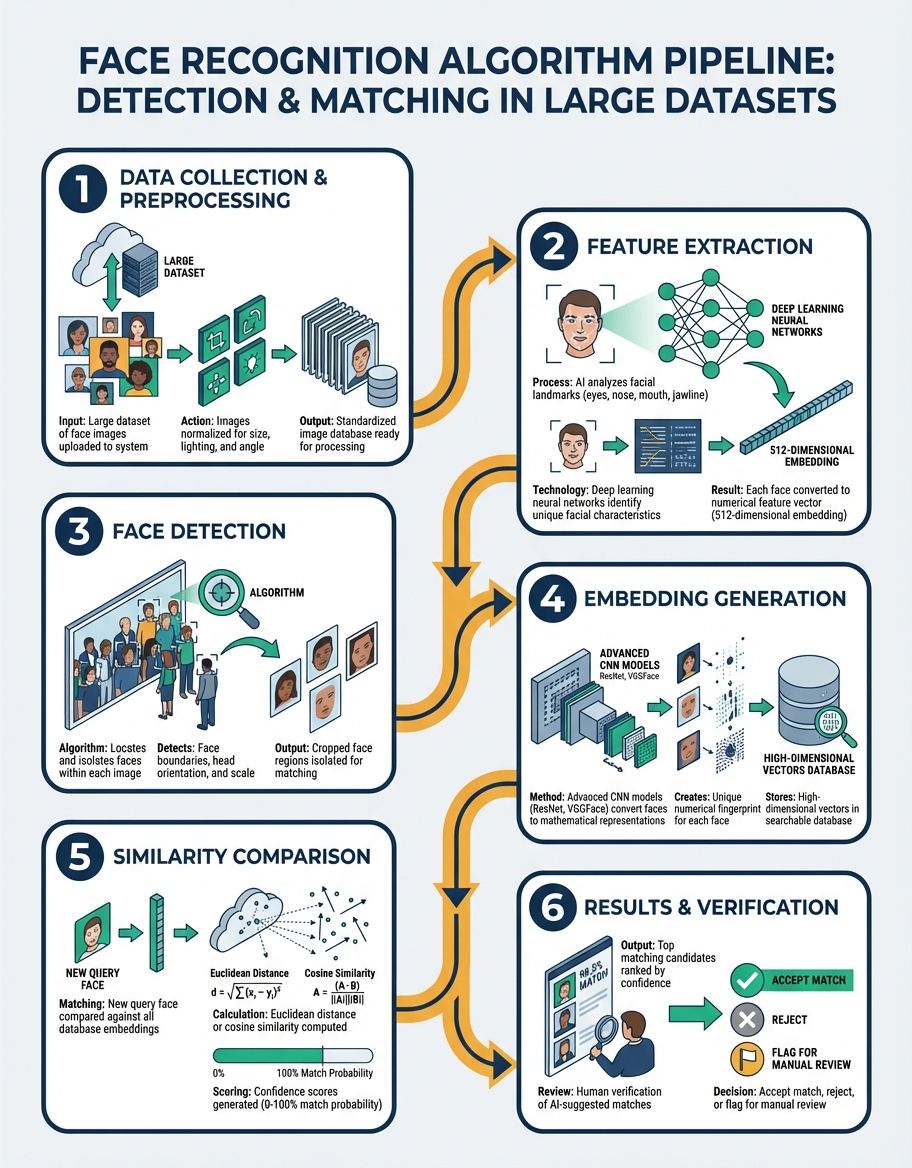

Facial recognition technology forms the foundation of modern identity verification systems, leveraging deep learning models to automatically identify individuals from digital images. The process begins with detection (locating faces within an image), followed by alignment, feature extraction, and finally matching against a database of known faces. Each stage presents unique challenges when working with large datasets that contain millions of identity profiles.

Modern facial recognition systems achieve remarkable accuracy by using convolutional neural networks (CNNs) trained on millions of labeled faces. These deep learning algorithms learn hierarchical representations, starting with simple edges and textures at lower layers and progressing to complex facial features like eye shape, nose structure, and overall face geometry at higher layers. The training process requires enormous computing power but results in models that generalize well across diverse populations and imaging conditions.

The accuracy of facial recognition systems depends heavily on the quality and diversity of training data. Research demonstrates that systems trained on datasets containing millions of faces from varied demographics, ages, and lighting conditions outperform those trained on smaller, less diverse collections. However, this introduces challenges in managing the computational burden and ensuring that the model doesn't overfit to specific patterns present in the large dataset while missing subtle variations that distinguish similar-looking individuals.

When deploying facial recognition for large datasets, engineers must consider both false positive and false negative rates. A false positive occurs when the system incorrectly identifies someone as a match, while a false negative happens when it fails to recognize a legitimate match. The balance between these error types depends on the application—security systems typically prioritize minimizing false positives, while user convenience features may tolerate higher false positive rates to avoid frustrating users with failed recognition attempts.
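The trade-off between the two error types can be seen by computing both rates at different similarity thresholds. The following is a small sketch; the scores and labels are made-up values for illustration, not measurements from any system.

```python
import numpy as np

def error_rates(scores, is_same_person, threshold):
    """Return (false_positive_rate, false_negative_rate) at a similarity threshold."""
    scores = np.asarray(scores, dtype=float)
    same = np.asarray(is_same_person, dtype=bool)
    predicted_match = scores >= threshold
    false_pos = np.sum(predicted_match & ~same)   # impostor pairs accepted
    false_neg = np.sum(~predicted_match & same)   # genuine pairs rejected
    fpr = false_pos / max(np.sum(~same), 1)
    fnr = false_neg / max(np.sum(same), 1)
    return float(fpr), float(fnr)

# Hypothetical similarity scores for six face pairs and their ground truth.
scores = [0.91, 0.78, 0.55, 0.62, 0.30, 0.15]
labels = [True, True, True, False, False, False]

strict = error_rates(scores, labels, threshold=0.70)
lenient = error_rates(scores, labels, threshold=0.50)
print("strict :", strict)
print("lenient:", lenient)
```

On this toy data the stricter threshold removes the false positive but rejects one genuine pair, while the lenient threshold does the opposite.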

Understanding Review in Face Recognition Algorithms for Large Datasets

A comprehensive review of face recognition algorithms reveals that performance on large datasets requires fundamentally different approaches than those used for smaller collections. Academic research has extensively documented the challenges that emerge at scale, including the curse of dimensionality, computational complexity, and the need for distributed processing architectures.

Recent review papers in computer vision journals highlight that the most successful methods for handling large datasets employ hierarchical search strategies. Rather than comparing a query face against every entry in the database, these systems use coarse-to-fine filtering. Initial stages rapidly eliminate obviously dissimilar faces using lightweight networks or low-dimensional features, while later stages apply more sophisticated comparison methods only to promising candidates.
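A coarse-to-fine search of this kind can be sketched in a few lines. In this illustration the "lightweight" first stage simply compares a truncated prefix of each embedding, which is an assumption made for brevity; a real system might instead use a smaller network or a learned projection. The parameter names are hypothetical.

```python
import numpy as np

def coarse_to_fine_search(query, gallery, coarse_dims=32, shortlist=50, k=5):
    """Shortlist with a truncated vector, then re-rank with the full embedding."""
    # Stage 1: cheap comparison on the first `coarse_dims` dimensions only.
    coarse_scores = gallery[:, :coarse_dims] @ query[:coarse_dims]
    candidates = np.argsort(-coarse_scores)[:shortlist]
    # Stage 2: full-dimensional comparison restricted to the shortlist.
    fine_scores = gallery[candidates] @ query
    order = np.argsort(-fine_scores)[:k]
    return candidates[order], fine_scores[order]

rng = np.random.default_rng(2)
gallery = rng.normal(size=(2000, 128))
gallery /= np.linalg.norm(gallery, axis=1, keepdims=True)   # unit-length rows
query = gallery[7]                                           # search for a known entry
ids, scores = coarse_to_fine_search(query, gallery)
print(ids, scores)
```

The expensive full comparison runs on only 50 of the 2,000 entries here. The shortlist size controls how much accuracy is traded for speed.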

The academic research community continues to publish findings on optimizing face recognition for massive collections. One consistent theme across multiple review articles is the importance of quality over quantity in training data. While larger datasets generally improve model performance, the marginal benefit decreases after a certain threshold. A carefully curated dataset of one million diverse, high-quality faces can produce better results than ten million poorly labeled or redundant images.

Understanding Detection in Face Recognition Algorithms for Large Datasets

Face detection represents the critical first stage in any facial recognition pipeline, identifying the location and boundaries of faces within images before recognition can occur. For large datasets containing millions of images, efficient detection methods become essential to system performance. Modern detection algorithms must process images quickly while maintaining high accuracy across varied conditions including occlusions, extreme poses, and challenging lighting.

The evolution from traditional detection methods to deep learning-based approaches has dramatically improved performance on large datasets. Earlier techniques using Haar cascades or HOG features struggled with faces at different angles or partially obscured. Contemporary detection architectures like MTCNN (Multi-task Cascaded Convolutional Networks) or RetinaFace achieve very high detection rates even on challenging images, using multi-scale processing to detect faces at various sizes.

When working with large datasets, detection efficiency matters as much as accuracy. Processing billions of images requires detection algorithms that can leverage GPU acceleration and batch processing. Well-optimized implementations can process on the order of thousands of images per second on GPU hardware, making it feasible to build and maintain facial recognition databases containing hundreds of millions of faces. The detection stage also typically includes face alignment, normalizing faces to a standard position and size to improve subsequent recognition accuracy.

Advanced detection systems incorporate confidence scoring, allowing downstream processes to filter low-quality detections that might introduce errors into the recognition pipeline. When building large datasets, practitioners often set high detection confidence thresholds to ensure that only clear, well-positioned faces enter the database, trading a small reduction in coverage for significant improvements in overall system reliability.
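As an illustration of confidence-based filtering, the sketch below keeps only detections that are both confident and large enough to enroll. The `Detection` structure, the 0.95 confidence threshold, and the 40-pixel minimum size are hypothetical choices for this example, not values taken from any particular detector.

```python
from dataclasses import dataclass

@dataclass
class Detection:
    image_id: str
    box: tuple          # (x, y, width, height) in pixels
    confidence: float   # detector score in [0, 1]

def filter_detections(detections, min_confidence=0.95, min_size=40):
    """Keep only detections that are confident and large enough to enroll."""
    kept = []
    for det in detections:
        _, _, width, height = det.box
        if det.confidence >= min_confidence and min(width, height) >= min_size:
            kept.append(det)
    return kept

detections = [
    Detection("img_001", (10, 20, 120, 130), 0.99),   # clear, large face
    Detection("img_002", (5, 5, 30, 32), 0.98),       # confident but too small
    Detection("img_003", (40, 40, 90, 95), 0.71),     # large but low confidence
]
enrolled = filter_detections(detections)
print([d.image_id for d in enrolled])
```

Raising either threshold shrinks the enrolled set, which is the coverage-for-reliability trade described above.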

Understanding Research in Face Recognition Algorithms for Large Datasets

The research landscape in facial recognition for large datasets spans multiple disciplines including computer vision, machine learning, distributed systems, and database design. Leading conferences like CVPR, ICCV, and ECCV regularly feature papers addressing the scalability challenges inherent in billion-scale face recognition. This research drives continuous improvements in both algorithmic efficiency and recognition effectiveness.

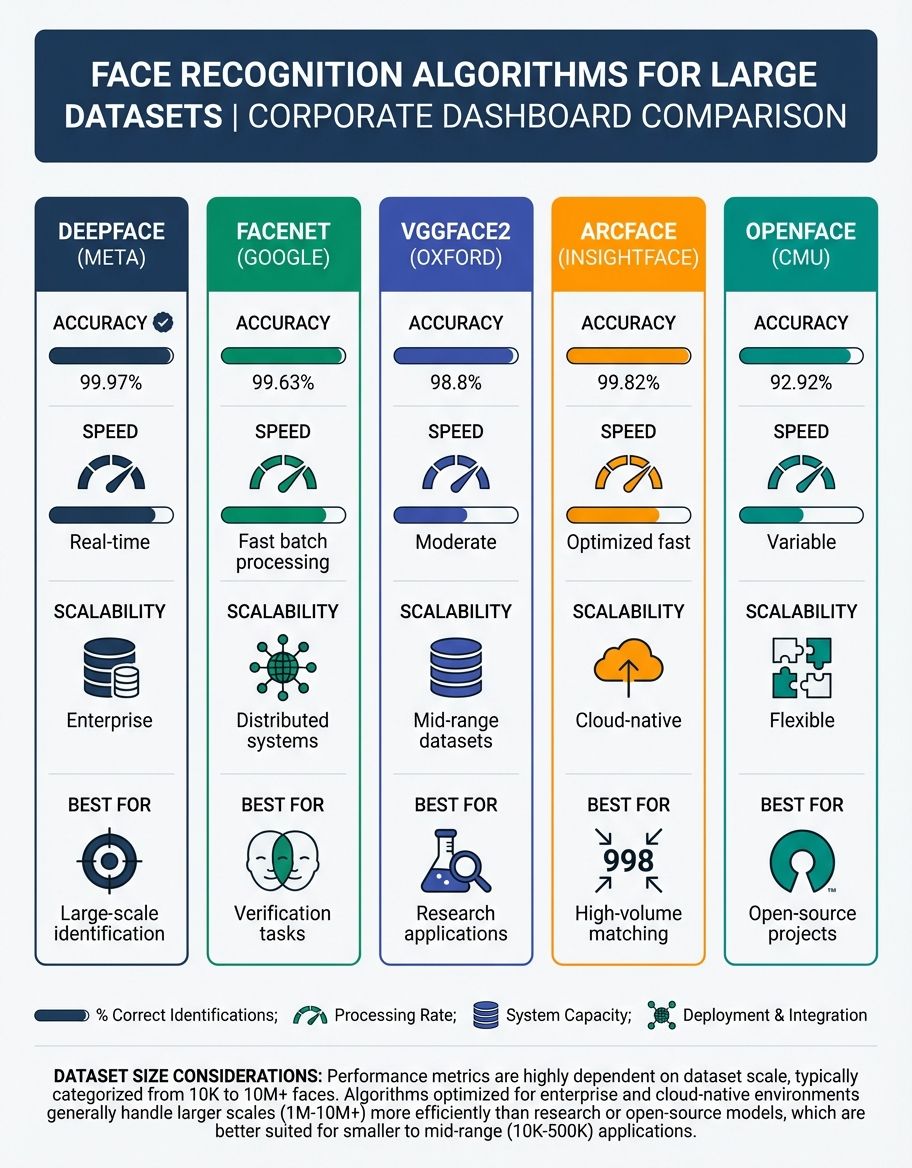

Recent research has focused on developing loss functions specifically designed for large-scale face recognition. Plain softmax loss becomes computationally expensive when the number of identity classes reaches millions. Approaches like ArcFace, CosFace, and SphereFace reformulate the classification problem using angular margins in the feature space, enabling models to learn more discriminative embeddings while remaining trainable with millions of identity classes.
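The angular-margin idea can be sketched as follows: embeddings and class weights are normalized, and a margin is added to the angle between each embedding and its own class before scaling. This is a simplified NumPy illustration of an ArcFace-style logit computation. The scale of 64 and margin of 0.5 radians follow values commonly reported for ArcFace, and the sketch omits the special handling that full implementations apply when the angle plus margin exceeds π.

```python
import numpy as np

def arcface_logits(embeddings, class_weights, labels, scale=64.0, margin=0.5):
    """Scaled cosine logits with an additive angular margin on the true class."""
    emb = embeddings / np.linalg.norm(embeddings, axis=1, keepdims=True)
    w = class_weights / np.linalg.norm(class_weights, axis=1, keepdims=True)
    cosine = np.clip(emb @ w.T, -1.0, 1.0)      # shape: (batch, classes)
    theta = np.arccos(cosine)                   # angle to every class center
    rows = np.arange(len(labels))
    adjusted = cosine.copy()
    # Penalize only the true class by widening its angle by `margin`.
    adjusted[rows, labels] = np.cos(theta[rows, labels] + margin)
    return scale * adjusted

class_weights = np.eye(3)                                 # three toy identity centers
embeddings = np.array([[1.0, 0.1, 0.0], [0.0, 1.0, 0.2]])
labels = np.array([0, 1])
logits = arcface_logits(embeddings, class_weights, labels)
print(logits)
```

The resulting logits would then be passed to an ordinary softmax cross-entropy loss. The margin forces the model to place each embedding closer to its own class center than plain softmax would require.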

Researchers have also investigated how to efficiently update and maintain face recognition systems as new faces continuously join the database. Traditional approaches require complete retraining when new identities are added, which becomes impractical with large datasets. Current research explores incremental learning techniques that allow networks to incorporate new faces without forgetting previously learned embeddings, though this remains an active area of investigation with no perfect solution.

The research community has extensively studied the relationship between dataset size and model performance. Empirical studies demonstrate that recognition precision improves logarithmically with dataset size—doubling the training data yields diminishing returns in performance gains. This finding has important practical implications, suggesting that beyond a certain scale, investing in data quality, diversity, and cleaning produces better results than simply acquiring more data.

Understanding Architectures in Face Recognition Algorithms for Large Datasets

The choice of neural network architecture fundamentally impacts the performance of face recognition algorithms for large datasets. Modern approaches must balance three competing objectives: achieving high performance, maintaining computational efficiency during inference, and producing compact encodings that enable fast similarity searches. Deep convolutional networks form the backbone of contemporary facial recognition systems, with architectures specifically optimized for the unique characteristics of face data.

ResNet and its variants remain popular choices for face recognition systems working with large datasets due to their depth, skip connections, and proven ability to learn discriminative features. A typical implementation might use a ResNet-100 or ResNet-152 backbone pretrained on millions of faces, producing 512-dimensional embedding vectors that capture the essential characteristics of each individual. These networks contain tens of millions of parameters, requiring careful regularization and data augmentation during training to prevent overfitting even when working with massive datasets.

Newer architectures like Vision Transformers (ViT) have begun challenging the dominance of convolutional networks in facial recognition research. Transformers excel at capturing long-range dependencies within images, potentially learning relationships between facial features that CNNs might miss. However, these architectures typically require even larger training datasets than CNNs to achieve competitive performance, making them best suited to organizations with access to very large collections of labeled faces.

Model compression techniques have become increasingly important as face recognition systems deploy to edge devices and mobile platforms. Knowledge distillation allows large, accurate teacher models trained on extensive datasets to teach smaller, faster student models that maintain much of the original accuracy while running efficiently on resource-constrained hardware. This approach enables large-scale facial recognition capabilities even on devices with limited computational resources.
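One simple way to express the teacher-student objective for embedding models is to penalize the angle between the student's embedding and the teacher's embedding for the same face. The sketch below shows that loss in NumPy. It is one possible variant, chosen here for brevity, and it assumes both models produce embeddings of the same dimensionality.

```python
import numpy as np

def embedding_distillation_loss(student, teacher):
    """Mean (1 - cosine similarity) between student and teacher embeddings."""
    s = student / np.linalg.norm(student, axis=1, keepdims=True)
    t = teacher / np.linalg.norm(teacher, axis=1, keepdims=True)
    cosine = np.sum(s * t, axis=1)     # one similarity per face in the batch
    return float(np.mean(1.0 - cosine))

teacher = np.array([[1.0, 0.0], [0.0, 2.0]])        # toy teacher embeddings
perfect_student = 3.0 * teacher                     # same directions, different scale
orthogonal_student = np.array([[0.0, 1.0], [1.0, 0.0]])

print(embedding_distillation_loss(perfect_student, teacher))
print(embedding_distillation_loss(orthogonal_student, teacher))
```

The loss is zero when the student reproduces the teacher's directions exactly and grows as the two embeddings diverge. During training it would be minimized with respect to the student's parameters only.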

Understanding Features in Face Recognition Algorithms for Large Datasets

The quality of facial embeddings, the mathematical vectors that encode face information, determines the ultimate effectiveness of recognition systems working with large datasets. These high-dimensional vectors must capture sufficient detail to distinguish between millions of similar faces while remaining compact enough for efficient storage and fast similarity computations. The design of embedding spaces has been a central focus of facial recognition research for the past decade.

Effective embeddings exhibit several critical properties. First, they must be discriminative: faces of different individuals should produce vectors that are far apart in the embedding space. Second, they should be robust: the same person under different lighting, expressions, or angles should produce similar vectors. Third, they need to be compact: storing billions of high-dimensional vectors requires careful consideration of memory and storage hardware even when using specialized databases like Milvus.

The dimensionality of face embeddings involves important trade-offs. Higher-dimensional vectors (512 or 1024 dimensions) can encode more subtle facial details, potentially improving precision when distinguishing between very similar faces in large datasets. However, they also increase storage requirements and slow down similarity searches. Many production systems settle on 256- or 512-dimensional embeddings as a good balance for large-scale deployments.
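The storage side of this trade-off is simple arithmetic. The sketch below estimates raw embedding storage for one billion faces, assuming 4-byte float32 values and ignoring index structures, replicas, and metadata, all of which add to the total in practice.

```python
def embedding_storage_bytes(num_faces, dimensions, bytes_per_value=4):
    """Raw storage for the vectors alone (float32 by default)."""
    return num_faces * dimensions * bytes_per_value

for dims in (128, 256, 512, 1024):
    size_tb = embedding_storage_bytes(1_000_000_000, dims) / 1e12
    print(f"{dims:>4} dims: {size_tb:.3f} TB for one billion faces")
```

Doubling the dimensionality doubles the raw footprint, so moving from 512 to 1024 dimensions at this scale adds roughly two terabytes before any index overhead.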

Understanding Methods in Face Recognition Algorithms for Large Datasets

The methods used to train and deploy face recognition systems at scale involve sophisticated engineering beyond simply choosing a model architecture. Distributed training across multiple GPUs or machines becomes necessary when working with datasets containing tens of millions of faces. These methods partition the data and the network across computational resources, synchronizing gradients to ensure consistent learning while dramatically reducing training time from months to days or hours.
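Gradient synchronization in data-parallel training can be illustrated with a toy example. In the sketch below a linear model stands in for a face recognition network, and each "worker" is just a slice of the data. Averaging the per-worker gradients plays the role that an all-reduce operation plays in a real distributed framework.

```python
import numpy as np

def local_gradient(weights, features, targets):
    """Gradient of mean squared error for a linear model on one worker's shard."""
    errors = features @ weights - targets
    return 2.0 * features.T @ errors / len(targets)

def data_parallel_step(weights, shards, learning_rate=0.1):
    """Each worker computes a gradient; the average is applied to shared weights."""
    gradients = [local_gradient(weights, x, y) for x, y in shards]
    averaged = np.mean(gradients, axis=0)      # stands in for an all-reduce
    return weights - learning_rate * averaged

rng = np.random.default_rng(3)
features = rng.normal(size=(8, 3))
targets = rng.normal(size=8)
# Two hypothetical workers, each holding half of the batch.
shards = [(features[:4], targets[:4]), (features[4:], targets[4:])]
weights = np.zeros(3)
updated = data_parallel_step(weights, shards)
print(updated)
```

With equally sized shards, the averaged gradient equals the gradient computed on the full batch, which is why every worker ends up with the same weights after each step.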

Data augmentation plays a crucial role in improving model generalization when training on large datasets. Standard techniques include random cropping, horizontal flipping, color jittering, and adding synthetic occlusions. More advanced techniques like mixup, cutout, or automated augmentation policies help networks learn robust embeddings that perform well on real-world images despite being trained primarily on controlled datasets.
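Three of the techniques listed above (random cropping, horizontal flipping, and a cutout-style occlusion) can be sketched with NumPy alone. The image size, crop size, and patch size here are arbitrary illustrative values, and the input is a uniform gray array rather than a real face.

```python
import numpy as np

def augment(image, rng, crop=100, cutout=24):
    """Apply a random crop, a random horizontal flip, and a cutout patch."""
    height, width, _ = image.shape
    top = rng.integers(0, height - crop + 1)
    left = rng.integers(0, width - crop + 1)
    out = image[top:top + crop, left:left + crop].copy()   # leave the input untouched
    if rng.random() < 0.5:
        out = out[:, ::-1].copy()                           # horizontal flip
    y = rng.integers(0, crop - cutout + 1)
    x = rng.integers(0, crop - cutout + 1)
    out[y:y + cutout, x:x + cutout] = 0                     # synthetic occlusion
    return out

rng = np.random.default_rng(4)
image = np.full((112, 112, 3), 200, dtype=np.uint8)   # placeholder for an aligned face
augmented = augment(image, rng)
print(augmented.shape)
```

Each call produces a different variant of the same face, so the network sees more variation than the raw dataset contains.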

Evaluation approaches for large-scale face recognition differ from those used on small datasets. Standard benchmarks like LFW (Labeled Faces in the Wild), which contains only thousands of identities, provide insufficient challenge for modern systems. Instead, practitioners use larger evaluation sets such as MegaFace, which adds on the order of a million distractors, or IJB-C, providing a more realistic assessment of how well models perform when searching through datasets closer in size to real-world deployments.

Indexing techniques determine the practical speed of face recognition systems at scale. Beyond basic nearest neighbor search, production systems implement approximate nearest neighbor algorithms that sacrifice a small amount of accuracy for massive improvements in search speed. Techniques like locality-sensitive hashing, product quantization, and graph-based indices enable systems to search billions of faces in milliseconds rather than hours, making real-time applications feasible.
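Of the techniques named above, locality-sensitive hashing is the simplest to sketch. The example below uses random hyperplanes: each vector is hashed to a bit pattern based on which side of each hyperplane it falls, and a query only examines vectors in its own bucket. The class name and parameters are hypothetical, and a practical index would use several hash tables to improve recall.

```python
import numpy as np

class HyperplaneLSH:
    """Single-table random-hyperplane LSH for cosine similarity."""

    def __init__(self, dimensions, num_bits=16, seed=0):
        rng = np.random.default_rng(seed)
        self.planes = rng.normal(size=(num_bits, dimensions))
        self.buckets = {}

    def _key(self, vector):
        # One bit per hyperplane: which side of the plane the vector lies on.
        bits = (self.planes @ vector) > 0
        return bits.tobytes()

    def add(self, item_id, vector):
        self.buckets.setdefault(self._key(vector), []).append(item_id)

    def candidates(self, vector):
        """Return ids sharing the query's bucket; only these need exact scoring."""
        return self.buckets.get(self._key(vector), [])

rng = np.random.default_rng(5)
vectors = rng.normal(size=(1000, 64))
index = HyperplaneLSH(dimensions=64)
for i, v in enumerate(vectors):
    index.add(i, v)
print(index.candidates(vectors[3]))
```

Vectors pointing in similar directions tend to share a bucket, so the exact comparison runs on a small candidate set. The price is that some true neighbors land in other buckets and are missed.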

Comparison of Face Recognition Approaches for Large Datasets

| Approach | Accuracy | Speed | Scalability | Best Use Case |
|---|---|---|---|---|
| Traditional Feature Methods (HOG, SIFT) | Low-Medium | Fast | Poor | Legacy systems, resource-constrained devices |
| CNN with Softmax Loss | Medium-High | Medium | Medium | Datasets under 100K identities |
| Deep Networks with Metric Learning (ArcFace, CosFace) | Very High | Medium-Fast | Excellent | Production systems with millions of identities |
| Vision Transformers | Very High | Slow-Medium | Good | Organizations with massive training datasets and computing power |
| Ensemble Methods | Highest | Slow | Medium | High-security applications where accuracy is paramount |
| Mobile-Optimized Models (MobileFaceNet) | Medium-High | Very Fast | Good | Edge devices, mobile apps, real-time applications |

Frequently Asked Questions

How does training deep learning algorithms for face recognition work?

Training deep learning algorithms for face recognition involves exposing neural networks to millions of labeled face images, allowing them to automatically identify patterns that distinguish different individuals. The training process uses backpropagation to iteratively adjust the model's parameters, minimizing the difference between predicted and actual identities. For large datasets, this training occurs across multiple GPUs using distributed computing, processing thousands of images simultaneously to reduce training time from months to days. The algorithm learns hierarchical representations, starting with simple features like edges and progressing to complex facial characteristics.

How does image manipulation work?

Image manipulation in face recognition systems involves preprocessing steps that normalize and enhance facial images before feeding them to recognition algorithms. Common manipulations include face alignment to standardize pose and orientation, histogram equalization to compensate for varying lighting conditions, and cropping to focus on the facial region while removing irrelevant background. Data augmentation during training intentionally manipulates images through rotations, flips, color adjustments, and synthetic occlusions, helping systems learn robust features that work across diverse real-world conditions rather than memorizing specific image characteristics.

How do large datasets work?

Large datasets in face recognition contain millions to billions of facial images organized in ways that enable efficient training and querying. These datasets require distributed storage systems that partition data across multiple servers, allowing parallel processing during model training and simultaneous searches during inference. Organizations typically store the original images separately from the mathematical encodings (embeddings) generated by their networks, with vector databases like Milvus handling the high-dimensional embeddings that enable fast similarity searches. Managing large datasets also involves continuous quality control, removing duplicates, correcting mislabeled images, and ensuring demographic diversity to prevent model bias.

How does face recognition work?

Face recognition operates through a multi-stage pipeline that begins with detecting faces in images, aligning them to a standard orientation, extracting distinctive features using deep neural networks, and finally comparing these features against a database to find matches. The system converts each face into a mathematical vector representation that captures unique characteristics like the spacing between eyes, nose shape, and jawline structure. When a new face needs identification, the algorithm compares its vector against stored features using similarity metrics, returning the closest matches along with confidence scores that indicate the likelihood of correct identification.

How does a large dataset support training and search?

A large dataset serves both as the foundation for training accurate face recognition models and as the reference database for identification queries. During training, the dataset provides millions of example faces that teach the algorithm to distinguish between individuals despite variations in lighting, expression, age, and imaging conditions. During inference, the dataset serves as the search space against which new faces are compared. Effective management requires indexing strategies that organize faces for rapid retrieval, quality assurance processes that maintain data integrity, and scalable infrastructure that handles continuous growth as new faces enter the system.

How does automatic identification work?

Automatic identification in face recognition systems occurs when algorithms process facial images without human intervention, comparing extracted features against database entries to determine identity. The system identifies individuals by computing similarity scores between the query face and stored faces, ranking results by confidence level. This automation relies on threshold settings that determine when a match is sufficiently confident to report: higher thresholds reduce false positives but may miss legitimate matches, while lower thresholds increase sensitivity at the cost of more false alarms. Production systems can automatically identify thousands of faces per second, enabling applications from smartphone unlocking to airport security.

How are faces used by recognition algorithms?

Faces are the fundamental input data that recognition algorithms analyze to extract identifying features. Each face presents a unique combination of geometric relationships between facial landmarks: the distances and angles between eyes, nose, mouth, and other features create a distinctive pattern. Recognition algorithms convert these physical characteristics into mathematical vectors that capture both global facial structure and local texture details. The diversity of faces in a dataset, spanning different ages, ethnicities, genders, and expressions, determines how well the system generalizes to new individuals it hasn't seen during training.

Conclusion

Face recognition algorithms for large datasets represent a convergence of advanced deep learning techniques, specialized infrastructure, and sophisticated indexing approaches. The field has matured significantly over the past decade, with modern systems achieving precision levels that surpass human performance on controlled benchmarks while handling billions of face comparisons in real time. Success at scale requires careful attention to model architecture, training methodology, data quality, and the infrastructure that enables efficient storage and retrieval of high-dimensional facial embeddings.

Organizations implementing these systems must balance multiple competing objectives—maximizing performance while controlling computational costs, ensuring fast response times while maintaining scalability, and protecting user privacy while delivering valuable functionality. The continued evolution of face recognition technology promises further improvements in both capability and efficiency, driven by ongoing research into novel architectures, more effective training techniques, and better algorithms for managing and searching massive collections of facial data. As datasets continue growing into the billions of faces, the techniques and infrastructure described in this review will remain essential for building practical, accurate, and scalable facial recognition systems.