Deep Learning For Face Recognition: Image Processing & Neural Networks Guide

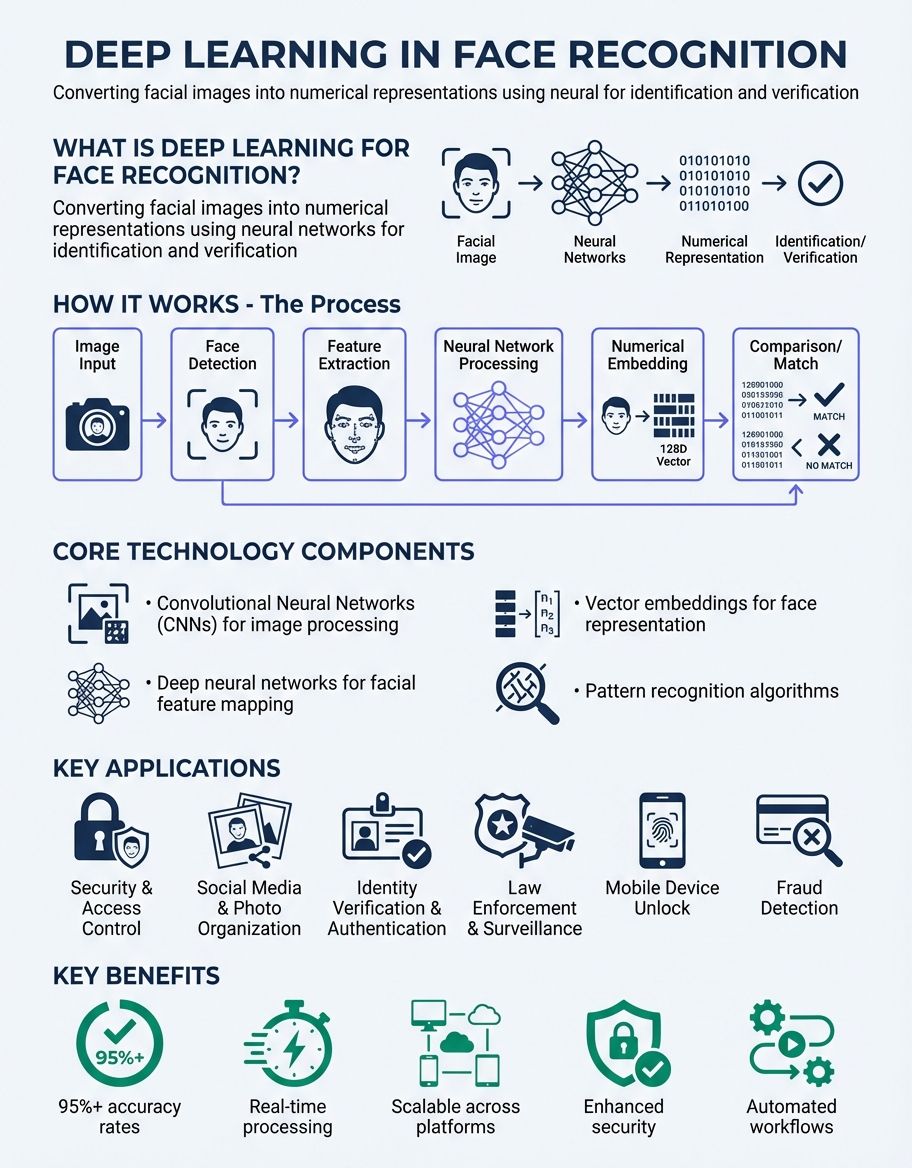

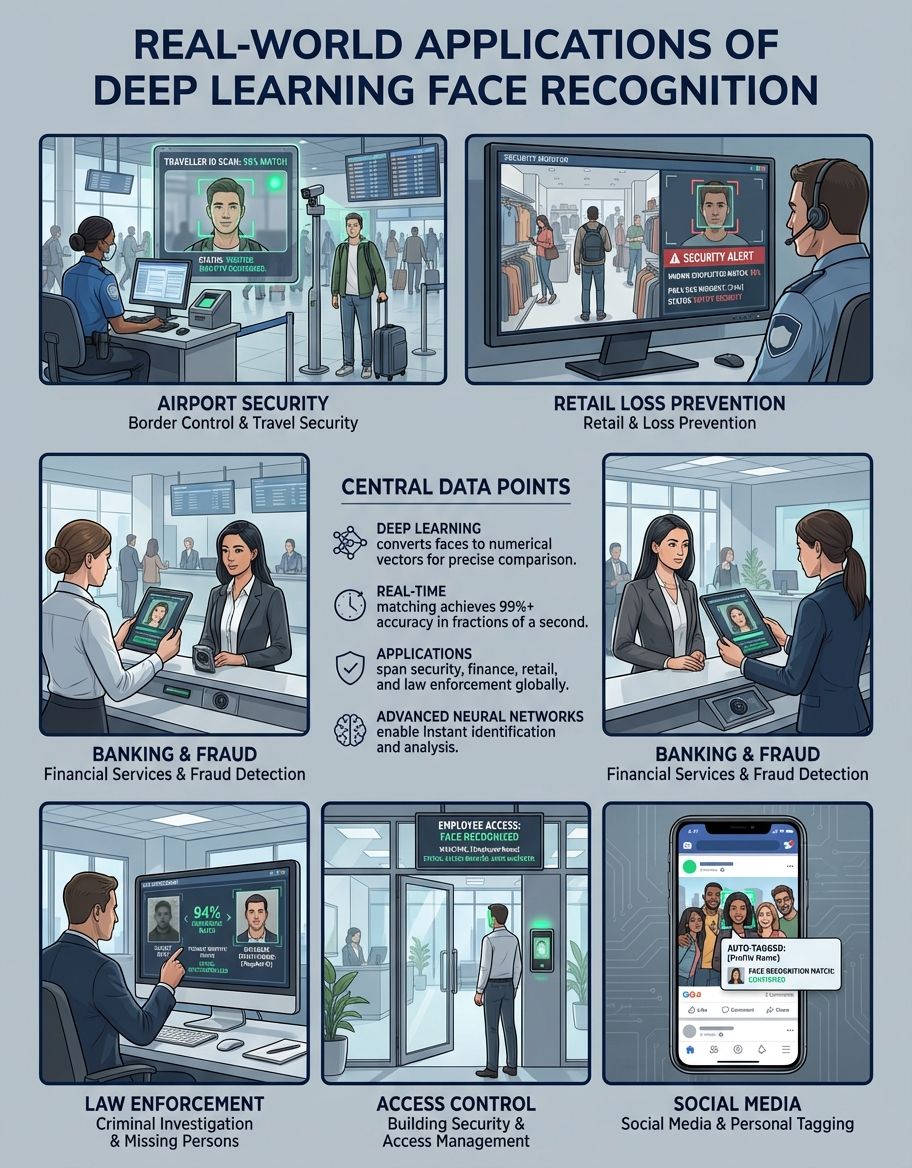

Deep learning for face recognition has revolutionized how systems identify and verify individuals across security, social media, and access control applications. Modern deep learning models convert each face into a numerical representation that enables accurate matching even in challenging conditions. This comprehensive guide explores the technical foundations, implementation approaches, and practical applications that make deep learning the dominant method for face recognition tasks.

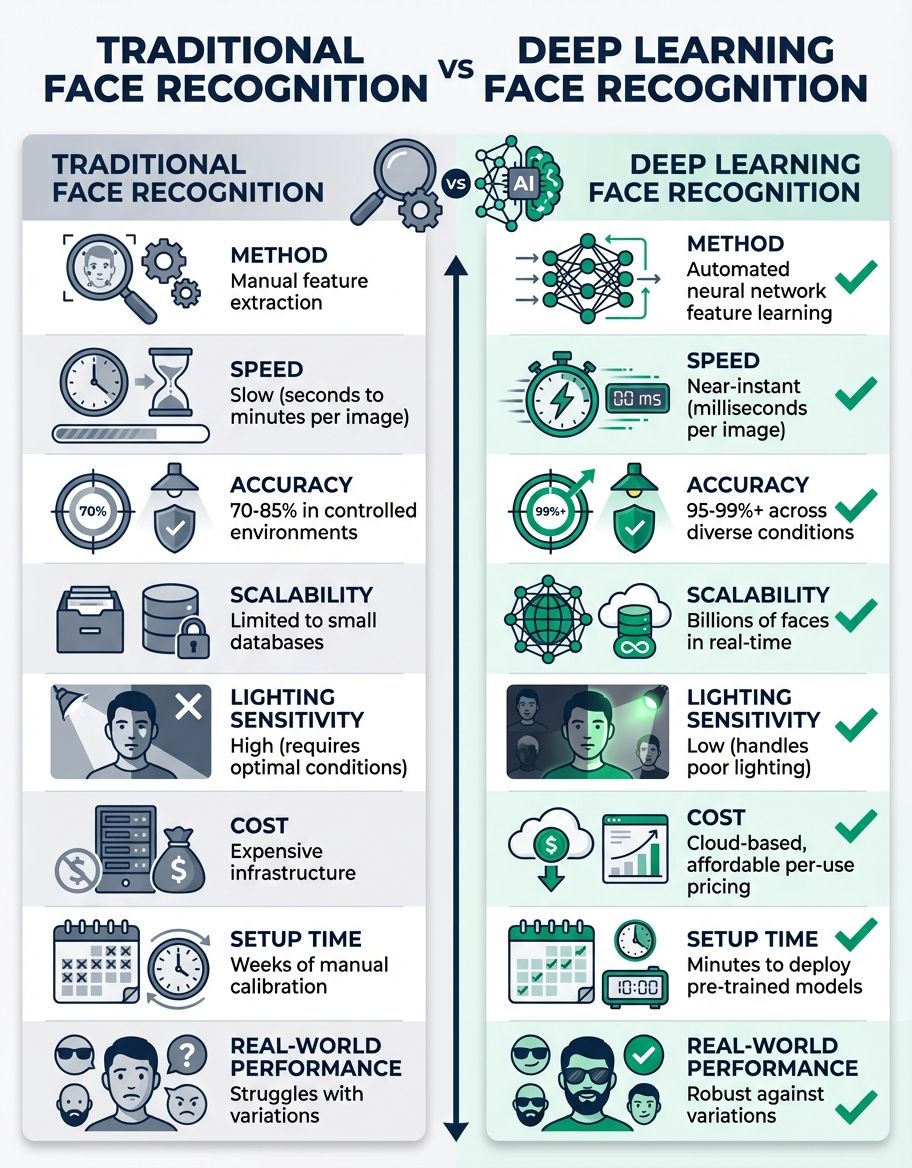

The evolution from traditional machine learning techniques to deep neural networks has dramatically improved accuracy rates. Where conventional methods relied on hand-crafted features, deep learning architectures automatically extract hierarchical patterns from image data. Understanding how these systems work—from initial face detection through final verification—enables developers and organizations to implement robust recognition systems that balance performance with privacy considerations.

Understanding Image Processing in Deep Learning For Face Recognition

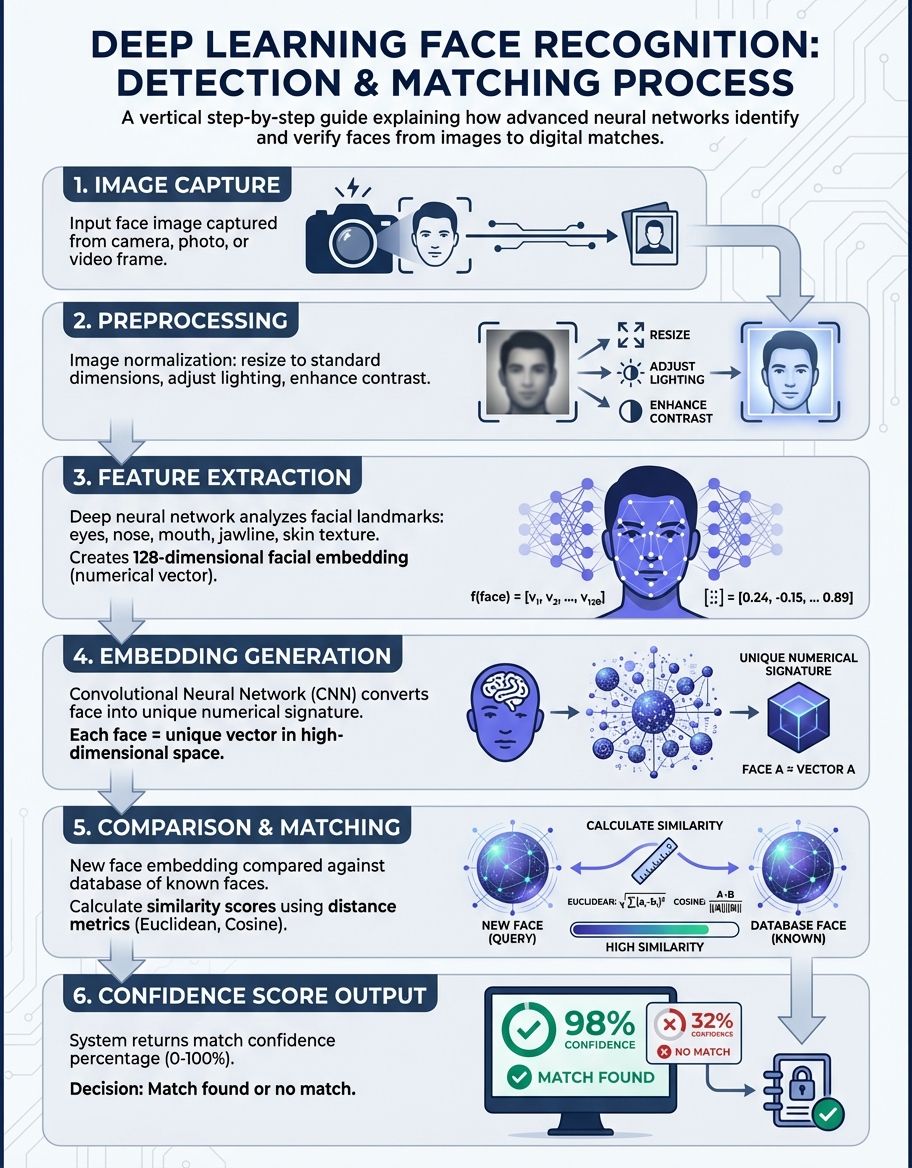

Image preprocessing forms the critical foundation for effective face recognition. Each image must be standardized before neural network processing begins. The input image undergoes normalization to ensure consistent pixel value ranges, typically scaling values between 0 and 1 or -1 and 1. This standardization prevents certain features from dominating the learning process due to arbitrary scaling differences.
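As a minimal sketch of the two scaling conventions mentioned above, assuming 8-bit input and NumPy (the function names are illustrative and not taken from any particular library):

```python
import numpy as np

def scale_unit(image_u8: np.ndarray) -> np.ndarray:
    """Map 8-bit pixel values from [0, 255] to [0, 1]."""
    return image_u8.astype(np.float32) / 255.0

def scale_symmetric(image_u8: np.ndarray) -> np.ndarray:
    """Map 8-bit pixel values from [0, 255] to [-1, 1]."""
    return image_u8.astype(np.float32) / 127.5 - 1.0

# Tiny 1x3 "image" covering the darkest, middle, and brightest values.
pixels = np.array([[0, 128, 255]], dtype=np.uint8)
unit = scale_unit(pixels)
symmetric = scale_symmetric(pixels)
```

Which range a given pre-trained model expects depends on how it was trained, so the convention should be checked against that model's documentation.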

Face alignment represents another essential preprocessing step. The system detects facial landmarks (eyes, nose, mouth) and applies geometric transformations to orient each face consistently. This alignment ensures that the neural network receives images in a standard pose, reducing variability that could confuse the recognition process. The correction is usually a similarity or affine transformation covering rotation, scaling, and translation; some networks learn such transformations internally instead of relying on an explicit alignment step.
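One simple form of landmark-based alignment is to rotate the face so the eyes lie on a horizontal line. The sketch below builds that transform with NumPy; the landmark coordinates are made up and the helper names are hypothetical, and real pipelines often also rescale and crop.

```python
import numpy as np

def eye_alignment_matrix(left_eye, right_eye):
    """Return a 2x3 matrix that rotates the image plane about the eye
    midpoint so that the line between the eyes becomes horizontal."""
    left_eye = np.asarray(left_eye, dtype=np.float64)
    right_eye = np.asarray(right_eye, dtype=np.float64)
    dx, dy = right_eye - left_eye
    angle = np.arctan2(dy, dx)                 # current tilt of the eye line
    cos_a, sin_a = np.cos(-angle), np.sin(-angle)
    rotation = np.array([[cos_a, -sin_a], [sin_a, cos_a]])
    center = (left_eye + right_eye) / 2.0
    # Rotate about `center`: p' = R (p - c) + c = R p + (c - R c)
    translation = center - rotation @ center
    return np.hstack([rotation, translation[:, None]])

def transform_points(matrix, points):
    """Apply a 2x3 affine matrix to an (N, 2) array of (x, y) points."""
    points = np.asarray(points, dtype=np.float64)
    return points @ matrix[:, :2].T + matrix[:, 2]

# Hypothetical landmark coordinates (x, y) for a tilted face.
left_eye, right_eye = (30.0, 40.0), (70.0, 60.0)
M = eye_alignment_matrix(left_eye, right_eye)
aligned = transform_points(M, [left_eye, right_eye])
```

After the transform both eyes share the same y coordinate and keep their original spacing. To warp the pixels themselves, the same 2x3 matrix could be passed to an image-warping routine such as OpenCV's `cv2.warpAffine`.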

Resolution requirements vary by architecture, but most deep learning models require square input dimensions. Common sizes include 224×224 pixels for VGG and ResNet architectures, or 160×160 for FaceNet implementations. The image quality directly impacts recognition accuracy—higher resolution captures more facial detail but requires greater computational resources for processing.

Color space conversion also plays a role in optimization. While some architectures process RGB images directly, others convert to grayscale to reduce computational complexity. The choice depends on the specific model architecture and whether color information provides meaningful discriminative features for the recognition task at hand.
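For illustration, a grayscale conversion can be written as a weighted sum of the color channels. This sketch assumes an RGB channel order and uses the common ITU-R BT.601 luma weights; other weightings exist.

```python
import numpy as np

# ITU-R BT.601 luma weights for the R, G, and B channels.
LUMA_WEIGHTS = np.array([0.299, 0.587, 0.114])

def rgb_to_gray(image_rgb: np.ndarray) -> np.ndarray:
    """Collapse an (H, W, 3) RGB array to an (H, W) luminance array."""
    return image_rgb.astype(np.float64) @ LUMA_WEIGHTS

rgb = np.zeros((2, 2, 3), dtype=np.uint8)
rgb[0, 0] = (255, 255, 255)   # white pixel
rgb[0, 1] = (255, 0, 0)       # pure red pixel
gray = rgb_to_gray(rgb)
```

The result has one channel instead of three, which cuts the input size of the first layer to a third.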

Understanding Measurements and Feature Extraction

Facial measurements in deep learning differ fundamentally from traditional geometric approaches. Instead of relying on manually defined distances between facial landmarks, neural networks learn their own measurements through training on millions of faces. These learned metrics capture subtle variations in facial structure that human-designed features might miss.

The embedding space represents faces as high-dimensional vectors, typically ranging from 128 to 512 dimensions. Each dimension captures different aspects of facial appearance—some may encode eye spacing, others nose shape, and still others complex combinations that defy simple interpretation. The features extracted by deep neural networks create a geometric space where similar faces cluster together.

Distance metrics quantify similarity between facial embeddings. Euclidean distance measures straight-line proximity in embedding space, while cosine similarity is the cosine of the angle between two vectors. These metrics determine whether two faces belong to the same person. A threshold establishes the decision boundary: pairs whose distance falls below the threshold (or whose cosine similarity rises above it) are considered matches.
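Both metrics and a threshold decision can be sketched in a few lines of NumPy. The 4-dimensional vectors and the 0.6 threshold here are placeholders chosen for the example; real thresholds are tuned per model.

```python
import numpy as np

def euclidean_distance(a, b):
    """Straight-line distance between two embeddings."""
    return float(np.linalg.norm(np.asarray(a) - np.asarray(b)))

def cosine_similarity(a, b):
    """Cosine of the angle between two embeddings (1.0 means same direction)."""
    a, b = np.asarray(a, dtype=float), np.asarray(b, dtype=float)
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

def is_match(a, b, max_distance=0.6):
    """Declare a match when the Euclidean distance is below the threshold.
    The 0.6 default is only a placeholder value."""
    return euclidean_distance(a, b) < max_distance

# Toy 4-dimensional embeddings standing in for real 128-d or 512-d vectors.
anchor = np.array([0.5, 0.5, 0.5, 0.5])
same = np.array([0.6, 0.4, 0.5, 0.5])
other = np.array([0.5, -0.5, 0.5, -0.5])
```

Here `is_match(anchor, same)` is true and `is_match(anchor, other)` is false. Note the opposite directions of the two metrics: smaller distance means more similar, while larger cosine similarity means more similar.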

Understanding DeepFace Architecture and Implementation

DeepFace, introduced by Facebook researchers, pioneered the application of deep convolutional networks to face recognition. The architecture approaches human-level accuracy on the LFW benchmark by processing faces through a nine-layer neural network. The DeepFace model learns hierarchical representations, with early layers detecting edges and textures while deeper layers recognize complete facial structures.

The preprocessing pipeline includes 3D alignment that corrects for pose variations. This frontalization step transforms each face to a canonical frontal view, eliminating perspective distortions. The aligned image then passes through the deep network, which applies convolution operations to extract increasingly complex features at each layer.

Training this model requires massive datasets—the original Facebook implementation used four million labeled faces. This scale enables the network to learn robust features that generalize across ethnicities, ages, and imaging conditions. This architecture demonstrated that sufficient data combined with deep architectures could surpass previous recognition methods by substantial margins.

Understanding Layers and Neural Network Architecture

Convolutional neural network architecture forms the backbone of face recognition. Each layer applies learned filters to detect patterns at different scales and abstraction levels. Early layers identify edges, curves, and simple textures. Intermediate layers combine these elements to recognize facial features like eyes, noses, and mouths. Deep layers synthesize complete face structures and high-level identity information.

Pooling operations reduce spatial dimensions while preserving important features. Max pooling selects the strongest activation in each region, making the representation invariant to small translations. This hierarchical reduction creates increasingly compact representations that capture essential identity information while discarding irrelevant details.
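A minimal NumPy sketch of 2x2 max pooling with stride 2, assuming a single-channel feature map with even height and width:

```python
import numpy as np

def max_pool_2x2(feature_map: np.ndarray) -> np.ndarray:
    """2x2 max pooling with stride 2 on an (H, W) map with even H and W."""
    h, w = feature_map.shape
    # Split the map into non-overlapping 2x2 blocks, then take each block's max.
    blocks = feature_map.reshape(h // 2, 2, w // 2, 2)
    return blocks.max(axis=(1, 3))

fmap = np.array([[1, 3, 2, 0],
                 [4, 2, 1, 1],
                 [0, 0, 5, 6],
                 [1, 2, 7, 8]])
pooled = max_pool_2x2(fmap)   # [[4, 2], [2, 8]]
```

The 4x4 map shrinks to 2x2, and moving a strong activation by one pixel inside its block leaves the pooled value unchanged.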

Fully connected layers near the network's end integrate features from across the entire face. These layers learn to combine spatial information into a unified embedding. The final output is the facial encoding, a fixed-length vector that serves as the face's numerical signature for recognition tasks.

Activation functions introduce non-linearity, enabling networks to learn complex decision boundaries. ReLU (Rectified Linear Unit) activations are common in modern architectures because they allow efficient training and mitigate the vanishing gradient problem.
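To make the gradient argument concrete, this sketch compares the derivative of ReLU with that of the logistic sigmoid (the sigmoid is included only for contrast):

```python
import numpy as np

def relu(x):
    """Rectified Linear Unit: pass positive values, zero out negatives."""
    return np.maximum(x, 0.0)

def relu_grad(x):
    """Derivative of ReLU: 1 where the input is positive, 0 elsewhere."""
    return (np.asarray(x) > 0).astype(float)

def sigmoid_grad(x):
    """Derivative of the logistic sigmoid, shown for comparison."""
    s = 1.0 / (1.0 + np.exp(-np.asarray(x, dtype=float)))
    return s * (1.0 - s)

x = np.array([-2.0, 0.0, 3.0, 10.0])
activations = relu(x)
```

For large positive inputs the ReLU gradient stays at 1 while the sigmoid gradient shrinks toward 0, which is why gradients survive better through many ReLU layers.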

Normalization techniques stabilize training and improve generalization. Batch normalization standardizes activations, accelerating convergence and reducing sensitivity to initialization.
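A sketch of the training-mode computation for a batch of feature vectors, assuming NumPy; the running statistics that frameworks keep for inference are left out for brevity.

```python
import numpy as np

def batch_norm(x, gamma, beta, eps=1e-5):
    """Training-mode batch normalization over the batch axis (axis 0)."""
    mean = x.mean(axis=0)
    var = x.var(axis=0)
    x_hat = (x - mean) / np.sqrt(var + eps)   # zero mean, unit variance
    return gamma * x_hat + beta               # learned scale and shift

# Batch of 4 samples with 2 features on very different scales.
batch = np.array([[1.0, 100.0],
                  [2.0, 200.0],
                  [3.0, 300.0],
                  [4.0, 400.0]])
normalized = batch_norm(batch, gamma=np.ones(2), beta=np.zeros(2))
```

Both columns end up with mean close to 0 and standard deviation close to 1, regardless of their original scale.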

Understanding Code Implementation and Practical Development

Python dominates deep learning development for face recognition, with frameworks providing high-level abstractions over complex neural operations. TensorFlow and PyTorch offer flexible Python APIs for building custom architectures or fine-tuning pre-trained models. Higher-level Python libraries make it possible to load models and process faces in just a few lines.

A typical Python implementation begins by importing the necessary libraries and loading a pre-trained model. The code initializes the neural network architecture and loads learned weights from a checkpoint file. Preprocessing code then transforms each input image into the format expected by the network: resizing, normalization, and any required augmentation.

At inference time, the preprocessed input is fed through the network to extract the embedding. This forward pass executes the layers sequentially, producing the final face vector. Comparison logic then computes distances between embeddings to determine identity matches. Threshold selection balances false acceptances against false rejections.
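The trade-off in threshold selection can be illustrated by computing both error rates at two thresholds. The distances below are invented for the example; in practice they would come from a labeled validation set.

```python
import numpy as np

def error_rates(genuine_dist, impostor_dist, threshold):
    """Return (false accept rate, false reject rate) for a distance threshold.
    A pair is accepted as a match when its distance is below the threshold."""
    genuine = np.asarray(genuine_dist)
    impostor = np.asarray(impostor_dist)
    far = float(np.mean(impostor < threshold))   # impostors wrongly accepted
    frr = float(np.mean(genuine >= threshold))   # genuine pairs wrongly rejected
    return far, frr

# Made-up distances for same-person and different-person pairs.
genuine = [0.30, 0.35, 0.45, 0.55, 0.70]
impostor = [0.50, 0.80, 0.90, 1.00, 1.10]

strict = error_rates(genuine, impostor, threshold=0.40)
loose = error_rates(genuine, impostor, threshold=0.75)
```

The strict threshold accepts no impostors but rejects three of five genuine pairs; the loose threshold rejects no genuine pairs but lets one impostor through.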

Training code is more complex: it defines loss functions, optimizers, and training loops. Implementations must handle batch processing, gradient computation, and weight updates. Data augmentation generates variations of the training images to improve generalization. Validation monitoring tracks performance on held-out data to detect overfitting.

Production deployments add error handling, logging, and performance optimization. Implementations must manage GPU memory efficiently, batch requests for throughput, and handle edge cases like poor image quality or failed detection. Well-structured design separates concerns, with detection, alignment, encoding, and matching as distinct modules.
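One way to express that separation of concerns is to make each stage a swappable callable. Everything in this sketch (the `RecognitionPipeline` class, its field names, and the stub stages) is hypothetical and only shows the wiring, not a real detector or encoder.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Sequence

Image = object                 # placeholder for whatever image type the stages share
Embedding = Sequence[float]

@dataclass
class RecognitionPipeline:
    detect: Callable[[Image], List[Image]]        # image -> face crops
    align: Callable[[Image], Image]               # crop -> aligned crop
    encode: Callable[[Image], Embedding]          # aligned crop -> embedding
    match: Callable[[Embedding], Optional[str]]   # embedding -> identity or None

    def run(self, image: Image) -> List[Optional[str]]:
        """Return one identity (or None) per detected face.
        An empty list means detection found nothing, which the caller
        can treat as a request for a better input."""
        results = []
        for crop in self.detect(image):
            embedding = self.encode(self.align(crop))
            results.append(self.match(embedding))
        return results

# Stub stages operating on strings, used only to show the wiring.
pipeline = RecognitionPipeline(
    detect=lambda img: [img] if img else [],
    align=lambda crop: crop,
    encode=lambda crop: [float(len(crop))],
    match=lambda emb: "alice" if emb[0] == 5.0 else None,
)
```

Because each stage is just a callable, a detector or encoder can be replaced without touching the others, and failed detection surfaces as an empty result rather than an exception deep in the encoder.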

Open-source libraries like face_recognition simplify development by providing complete pipelines. These tools abstract technical details, allowing developers to implement face recognition with minimal deep learning expertise. However, custom development offers greater control over architecture choices and optimization strategies for specialized applications.

Installing deep learning frameworks has become increasingly streamlined. Package managers like pip install dependencies with a single command. However, GPU acceleration requires additional setup: CUDA libraries, cuDNN, and compatible driver versions. Each framework's installation documentation guides users through platform-specific configurations.

Understanding the face_recognition Library and Tools

The face_recognition library provides a high-level Python interface built on dlib's deep learning models. Because it abstracts detection, landmark extraction, and encoding, Python developers can implement complete workflows without managing low-level neural network operations.

Under the hood, face_recognition uses a ResNet-based architecture trained on millions of faces. The library handles preprocessing automatically—detecting faces, aligning them based on landmarks, and extracting 128-dimensional embeddings. These embeddings support fast comparison operations for real-time applications.
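A short sketch of a one-to-one comparison with this library follows. It assumes the third-party `face_recognition` package is installed; the helper name `same_person`, the file paths passed to it, and the choice to compare only the first detected face are assumptions made for the example. The 0.6 tolerance is the library's default for `compare_faces`.

```python
def same_person(known_path, candidate_path, tolerance=0.6):
    """Return True if the first face found in each image matches.
    Requires the third-party `face_recognition` package; the import is
    kept inside the function so this module loads without it."""
    import face_recognition

    known_image = face_recognition.load_image_file(known_path)
    candidate_image = face_recognition.load_image_file(candidate_path)

    # Each call returns a list with one 128-d encoding per detected face.
    known_encodings = face_recognition.face_encodings(known_image)
    candidate_encodings = face_recognition.face_encodings(candidate_image)
    if not known_encodings or not candidate_encodings:
        raise ValueError("no face detected in one of the images")

    # compare_faces returns one boolean per known encoding.
    matches = face_recognition.compare_faces(
        [known_encodings[0]], candidate_encodings[0], tolerance=tolerance
    )
    return bool(matches[0])
```

A call such as `same_person("enrolled.jpg", "visitor.jpg")` (hypothetical file names) would return a boolean. Lowering `tolerance` makes matching stricter.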

The face_recognition API handles both single images and sequences of video frames. For video streams, an application can pass frames to the library one at a time and associate the resulting encodings across frames to follow faces over time. This capability enables applications like security monitoring or automatic photo tagging in media libraries.

Understanding Images Dataset and Training Considerations

Training data quality determines recognition model performance. Large-scale datasets containing millions of examples from thousands of identities enable networks to learn generalizable features. Samples should represent diverse demographics, ages, poses, and lighting conditions to ensure robust operation across real-world scenarios.

Data augmentation artificially expands training datasets by applying transformations to existing images. Techniques include rotation, scaling, brightness adjustment, and horizontal flipping. These augmented images help networks learn invariance to common variations encountered during deployment.
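Two of the listed transformations, horizontal flipping and brightness adjustment, can be sketched with NumPy. The flip probability and brightness range are illustrative values, not recommendations.

```python
import numpy as np

def horizontal_flip(image):
    """Mirror an (H, W, C) image left to right."""
    return image[:, ::-1, :]

def adjust_brightness(image, factor):
    """Scale pixel intensities of a [0, 1] float image and clip to range."""
    return np.clip(image * factor, 0.0, 1.0)

def augment(image, rng):
    """Randomly flip and re-light one image; parameter ranges are illustrative."""
    if rng.random() < 0.5:
        image = horizontal_flip(image)
    return adjust_brightness(image, rng.uniform(0.8, 1.2))

rng = np.random.default_rng(seed=0)
face = rng.random((4, 4, 3))             # random stand-in for a face crop
variants = [augment(face, rng) for _ in range(3)]
```

Each call yields a slightly different version of the same face, so the network sees more variation than the raw dataset contains.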

The images in training sets require careful labeling—each face must be associated with the correct identity. Mislabeled images introduce noise that degrades model performance. Quality control processes verify label accuracy before training begins. Clean, well-curated image datasets produce more reliable recognition models.

Test data provide unbiased performance evaluation. These images must never appear in training data to ensure realistic accuracy estimates. Standard benchmarks like LFW (Labeled Faces in the Wild) and MegaFace enable comparison across different architectures and training approaches.

The number of images per identity affects recognition accuracy. Models trained on many examples per person generalize better to new photos of those individuals. However, practical deployments often must recognize people from just one or two enrollment photos, requiring architectures optimized for few-shot learning scenarios.

Resolution in training data should match deployment conditions. Networks trained on high-resolution studio photos may fail when processing low-resolution surveillance material. Matching training characteristics to real-world conditions improves operational performance.

Privacy considerations govern dataset collection and use. Regulations like GDPR impose requirements on obtaining consent, limiting retention, and enabling data deletion. Responsible curation balances the need for diverse training data against individual privacy rights.

Synthetic images generated by GANs (Generative Adversarial Networks) offer privacy-preserving alternatives to real photos. These artificial faces enable training without collecting personal data. However, ensuring synthetic image diversity and realism remains an active research challenge.

Transfer learning leverages images from large public datasets to bootstrap training for specialized applications. Pre-trained networks learn general facial features from millions of images, then fine-tune on smaller domain-specific datasets. This approach reduces the image volume required for effective training.

Data efficiency techniques like metric learning optimize how networks learn from limited images. Triplet loss and other specialized objectives encourage the network to separate different identities while grouping photos of the same person, maximizing information extracted from each training image.
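The triplet objective can be written compactly. This sketch uses squared Euclidean distances and a margin of 0.2 as an illustrative value; the 2-dimensional vectors stand in for real embeddings.

```python
import numpy as np

def triplet_loss(anchor, positive, negative, margin=0.2):
    """Hinge on squared distances: the positive should be closer to the
    anchor than the negative by at least `margin`."""
    pos_dist = np.sum((anchor - positive) ** 2)
    neg_dist = np.sum((anchor - negative) ** 2)
    return float(max(pos_dist - neg_dist + margin, 0.0))

anchor = np.array([1.0, 0.0])
positive = np.array([0.9, 0.1])        # same identity, nearby
far_negative = np.array([-1.0, 0.0])   # different identity, well separated
near_negative = np.array([0.8, 0.0])   # different identity, too close

easy = triplet_loss(anchor, positive, far_negative)    # 0.0
hard = triplet_loss(anchor, positive, near_negative)   # about 0.18
```

The well-separated triplet contributes no loss, while the triplet with a too-close negative produces a positive loss that pushes the two identities apart during training.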

Understanding Machine Learning Foundations and Deep Learning Advances

Traditional machine learning approaches to face recognition relied on hand-crafted features like eigenfaces, Fisherfaces, or Local Binary Patterns. These methods required expert domain knowledge to design effective feature extractors. Performance plateaued because manually designed features couldn't capture the full complexity of facial variation.

Deep learning transcends these limitations through learned representations. Rather than programming specific features, neural networks discover optimal representations directly from data. This data-driven approach has proven more effective than human-designed features across virtually all computer vision tasks.

The machine learning pipeline for face recognition includes several stages: data collection, preprocessing, feature extraction, and classification. Deep learning automates the feature extraction component, learning hierarchical representations that traditional machine learning required humans to specify.

Supervised machine learning requires labeled training examples—faces tagged with identity information. The network learns to map images to identities by minimizing prediction errors on this labeled data. The quality and quantity of labeled examples fundamentally limit what the machine learning system can achieve.

Unsupervised machine learning techniques can augment face recognition through clustering or dimensionality reduction. These methods discover structure in unlabeled image collections, potentially identifying useful groupings or representations without manual annotation effort.

Semi-supervised machine learning combines small labeled datasets with large unlabeled collections. The network learns general visual features from unlabeled data, then fine-tunes on labeled examples. This approach reduces annotation costs while achieving performance comparable to fully supervised methods.

Researchers have explored reinforcement learning for active face recognition, where models determine optimal camera positioning or image acquisition strategies. Though less common than supervised approaches, reinforcement learning offers potential for optimizing the complete recognition pipeline, including data acquisition.

Ensemble machine learning combines multiple models to improve accuracy and robustness. Different architectures or training procedures produce diverse recognition models whose predictions can be aggregated. Ensembles often outperform individual models, especially on challenging cases where single networks might fail.
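One simple aggregation scheme is to average similarity scores across models before thresholding. The scores and the 0.5 threshold below are invented for illustration, and score averaging is only one of several possible ways to combine models.

```python
import numpy as np

def ensemble_similarity(score_lists, weights=None):
    """Weighted average of per-model similarity scores for the same pairs.
    `score_lists` has shape (num_models, num_pairs)."""
    scores = np.asarray(score_lists, dtype=float)
    return np.average(scores, axis=0, weights=weights)

# Hypothetical cosine similarities from three models on two face pairs.
scores = [[0.80, 0.30],
          [0.60, 0.55],   # this model alone would accept the second pair
          [0.85, 0.20]]
combined = ensemble_similarity(scores)   # [0.75, 0.35]
decisions = combined > 0.5
```

The second model's borderline score on the second pair is outvoted by the other two, so the ensemble rejects that pair.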

Comparison of Deep Learning Face Recognition Approaches

| Architecture | Embedding Size | Training Dataset | Accuracy | Speed |
|---|---|---|---|---|
| DeepFace | 4096 dimensions | 4 million images | 97.35% on LFW | Moderate |
| FaceNet | 128 dimensions | 200 million images | 99.63% on LFW | Fast |
| VGGFace | 2622 dimensions | 2.6 million examples | 98.95% on LFW | Moderate |
| ArcFace | 512 dimensions | 5.8 million samples | 99.83% on LFW | Fast |
| MobileFaceNet | 128 dimensions | Varied | 99.55% on LFW | Very Fast |
| SphereFace | 512 dimensions | CASIA-WebFace | 99.42% on LFW | Moderate |

Advanced Topics: VGGFace, System Integration, and Real-World Deployment

VGGFace adapts the VGG architecture, originally designed for image classification, to face recognition tasks. The deep network uses small 3×3 convolution filters stacked in sequences, creating networks with 16 or 19 weight layers. VGGFace demonstrates that classification architectures can be effectively repurposed for metric learning in verification.

Production platforms integrate face recognition into broader security or user experience workflows. The recognition component must interface with cameras, databases for identity storage, and application logic for access decisions. API design determines how easily the recognition capability integrates into existing infrastructure.

Scalability challenges emerge when platforms must handle thousands of simultaneous recognition requests or search against databases containing millions of identities. Distributed computing architectures, efficient indexing structures, and approximate nearest neighbor search enable real-time operation at scale.
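As a baseline for gallery search, the sketch below performs exact brute-force top-k retrieval by cosine similarity over random stand-in embeddings. Approximate nearest neighbor indexes replace this exhaustive scan when the gallery grows to millions of identities; this example does not implement one.

```python
import numpy as np

def top_k_matches(query, gallery, k=3):
    """Return indices and cosine similarities of the k closest gallery rows.
    This is exact brute-force search over every enrolled embedding."""
    gallery_n = gallery / np.linalg.norm(gallery, axis=1, keepdims=True)
    query_n = query / np.linalg.norm(query)
    sims = gallery_n @ query_n                 # one similarity per identity
    k = min(k, len(sims))
    idx = np.argpartition(-sims, k - 1)[:k]    # unordered top k
    idx = idx[np.argsort(-sims[idx])]          # order best first
    return idx, sims[idx]

rng = np.random.default_rng(seed=1)
gallery = rng.normal(size=(1000, 128))              # stand-in enrolled embeddings
query = gallery[42] + 0.05 * rng.normal(size=128)   # noisy copy of identity 42
indices, similarities = top_k_matches(query, gallery)
```

The best match is row 42, the identity the query was derived from. The cost of this scan grows linearly with gallery size, which is the motivation for indexing structures at scale.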

Edge deployment moves recognition processing to cameras or mobile devices rather than cloud servers. This approach reduces latency, preserves privacy by avoiding transmission, and operates without network connectivity. However, edge deployments face constraints on computational resources and must use optimized models.

Video Processing and Real-Time Recognition

Video face recognition processes streams of frames rather than individual images. Tracking faces across frames enables accumulation of evidence over time, improving accuracy compared to single-frame recognition. Multi-frame processing provides multiple viewing angles and expressions, offering richer identity information.
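One way to accumulate evidence is to average the per-frame embeddings of a tracked face into a single template. The simulation below uses synthetic noisy embeddings, and mean pooling is an assumption of this sketch rather than the only fusion strategy.

```python
import numpy as np

def fuse_track_embeddings(frame_embeddings):
    """Average the L2-normalized embeddings of one tracked face and
    renormalize, giving a single template for the whole track."""
    emb = np.asarray(frame_embeddings, dtype=float)
    emb = emb / np.linalg.norm(emb, axis=1, keepdims=True)
    mean = emb.mean(axis=0)
    return mean / np.linalg.norm(mean)

rng = np.random.default_rng(seed=2)
true_identity = rng.normal(size=128)
true_identity /= np.linalg.norm(true_identity)

# Simulate 20 noisy per-frame embeddings of the same person.
frames = true_identity + 0.1 * rng.normal(size=(20, 128))
fused = fuse_track_embeddings(frames)

# Cosine similarity to the true identity: one frame versus the fused track.
single_frame_sim = float(frames[0] @ true_identity / np.linalg.norm(frames[0]))
fused_sim = float(fused @ true_identity)
```

Because independent per-frame noise partly cancels in the average, the fused template sits closer to the true identity than any single frame does in this simulation.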

Real-time requirements demand efficient algorithms that extract embeddings within frame duration constraints. Implementations must balance accuracy against processing speed—simpler models execute faster but may sacrifice precision. Hardware acceleration through GPUs or specialized AI chips enables real-time analysis.

Motion blur and compression artifacts degrade recognition accuracy. Preprocessing techniques attempt to restore quality, but fundamental limitations exist when conditions are poor. Design must account for realistic quality rather than assuming pristine inputs.

Face Detection Integration and Pipeline Architecture

Face detection precedes recognition in most pipelines—locating faces before encoding them. Modern detectors use deep learning architectures like MTCNN or RetinaFace that simultaneously locate faces and predict landmark positions. Localization accuracy directly impacts overall recognition performance.

Multi-stage pipelines first propose candidate regions, then refine locations and filter false positives. This coarse-to-fine approach balances speed and accuracy. The localization component must handle faces at various scales, poses, and occlusions to feed quality inputs to the recognition network.

End-to-end architectures jointly optimize detection and recognition. These unified designs share feature extraction across both tasks, reducing computational redundancy. However, modular pipelines with separate stages offer greater flexibility for upgrading individual components.

Failure modes arise when localization misses faces or produces poor results. Recognition workflows must handle failures gracefully—either requesting better inputs or flagging low-confidence results. Robust design anticipates and manages errors at each stage.

Localization performance in unconstrained environments remains challenging. Extreme poses, partial occlusions, or unusual lighting can cause misses that humans easily perceive. Research continues improving robustness to handle the full range of real-world variations.

Speed-accuracy tradeoffs in detection algorithms parallel those in recognition. Lightweight approaches enable real-time processing on resource-constrained devices but may miss difficult cases. Application requirements determine the appropriate balance between thoroughness and efficiency.

Frequently Asked Questions

How does MobiFace work?

MobiFace optimizes face recognition for mobile devices using efficient neural architectures. The system employs depthwise separable convolutions that dramatically reduce computational requirements while maintaining recognition accuracy. This enables real-time face recognition on smartphones and embedded devices without requiring cloud connectivity or powerful GPUs.
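The saving from depthwise separable convolutions can be checked with simple arithmetic. The layer shape below (3x3 kernel, 64 input channels, 128 output channels) is an arbitrary example, not a layer taken from MobiFace.

```python
def standard_conv_params(k, c_in, c_out):
    """Weights in a standard k x k convolution (bias terms ignored)."""
    return k * k * c_in * c_out

def depthwise_separable_params(k, c_in, c_out):
    """Weights in a k x k depthwise convolution followed by a 1 x 1
    pointwise convolution (bias terms ignored)."""
    depthwise = k * k * c_in      # one k x k filter per input channel
    pointwise = c_in * c_out      # 1 x 1 convolution mixing channels
    return depthwise + pointwise

# Illustrative layer: 3x3 kernel, 64 input channels, 128 output channels.
standard = standard_conv_params(3, 64, 128)          # 73728
separable = depthwise_separable_params(3, 64, 128)   # 8768
reduction = standard / separable                     # roughly 8.4x fewer weights
```

The multiply-accumulate count per output position shrinks by the same ratio, which is where the speedup on mobile hardware comes from.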

How does face recognition work?

Face recognition works by converting facial images into numerical representations called embeddings. A deep neural network processes the image through multiple layers, extracting increasingly abstract features. The final embedding vector serves as a compact numerical signature for that face. Recognition compares embeddings using distance metrics: faces with similar embeddings likely belong to the same person. Training on millions of labeled faces teaches the network to create embeddings where matching faces cluster together.

How do deep learning models convert each face?

Deep learning models convert each face through a sequence of mathematical transformations. The input image passes through convolutional layers that detect visual patterns at different scales. Early layers identify edges and textures, while deeper layers recognize facial features and overall structure. Final layers map these patterns into a fixed-dimensional embedding space. This conversion process learns to emphasize identity-relevant features while ignoring irrelevant variations like lighting or expression.

How do real-time deep learning-based face recognition algorithms work?

Real-time deep learning-based face recognition algorithms optimize for speed through efficient architectures and hardware acceleration. MobileNet-style networks use lightweight operations that execute quickly on standard CPUs or mobile processors. GPUs parallelize computations across many faces simultaneously. Streaming pipelines process video frames continuously, tracking detected faces and updating recognition results as new information arrives. Caching strategies avoid redundant computations for faces that persist across multiple frames.

How does detecting human faces work?

Detecting human faces uses specialized neural networks trained to locate faces. Multi-task cascaded networks first scan at multiple scales to identify candidate regions. Subsequent refinement stages improve localization accuracy and predict facial landmark positions. The detector outputs bounding boxes indicating locations plus landmark coordinates for eyes, nose, and mouth. These results feed into alignment and recognition stages of the complete workflow.

How does deep learning work?

Deep learning works by building hierarchical representations through multiple processing stages. Each layer applies learned transformations—convolutions, activations, pooling—that extract increasingly high-level features from input data. Training adjusts millions of parameters using gradient descent to minimize prediction errors on labeled examples. The depth of these networks enables learning complex patterns that shallow models cannot acquire. Backpropagation efficiently computes gradients through all stages, enabling optimization of very deep architectures.
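The training loop at the heart of this process can be shown on a deliberately tiny model. This sketch fits a single weight and bias with gradient descent on synthetic data; a deep network applies the same update rule to millions of parameters, with backpropagation supplying the gradients.

```python
import numpy as np

rng = np.random.default_rng(seed=3)
x = rng.normal(size=(100, 1))
y = 3.0 * x[:, 0] + 0.5          # target relationship to recover

w, b = 0.0, 0.0                  # parameters to learn
learning_rate = 0.1
losses = []
for step in range(200):
    pred = w * x[:, 0] + b
    error = pred - y
    losses.append(float(np.mean(error ** 2)))
    # Gradients of the mean squared error with respect to w and b.
    grad_w = 2.0 * np.mean(error * x[:, 0])
    grad_b = 2.0 * np.mean(error)
    # Step against the gradient to reduce the loss.
    w -= learning_rate * grad_w
    b -= learning_rate * grad_b
```

After the loop, `w` and `b` are close to 3.0 and 0.5, and the recorded loss has dropped steadily from its starting value.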

How does the numerical vector called an embedding represent faces?

The numerical vector called an embedding represents faces as points in high-dimensional space. Each dimension captures specific facial characteristics learned during training. The embedding condenses all identity-relevant information from the image into a compact representation, typically 128 to 512 numbers. Faces of the same person map to nearby points in this space, while different people occupy distant regions. This geometric organization enables efficient similarity computation and identity matching.