Facial Matches Aren't Yes or No. They're Scores. | Podcast

This episode is based on our article:

Read the full article → Facial Matches Aren't Yes or No. They're Scores. | Podcast

Full Episode Transcript

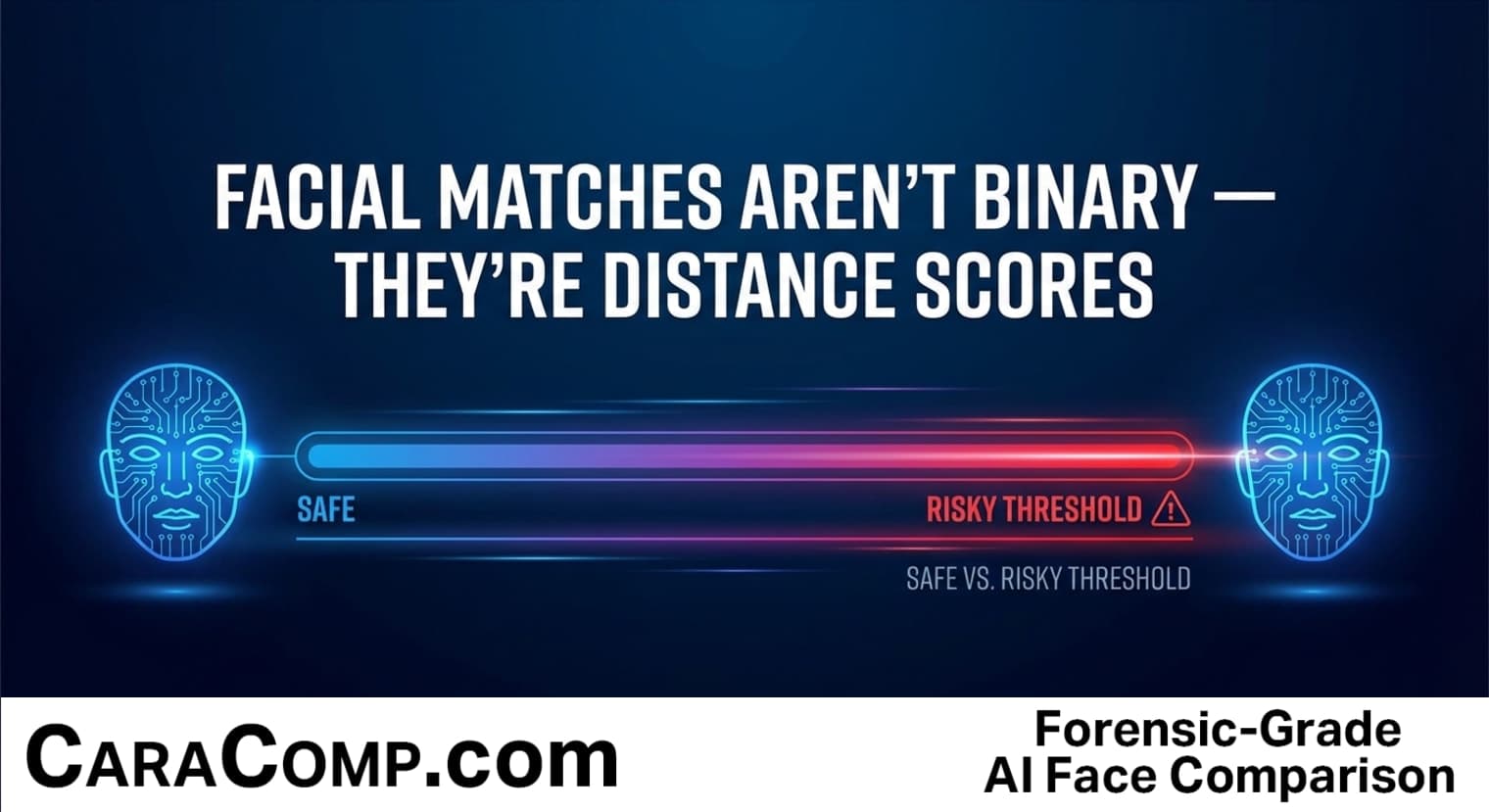

What if I told you facial recognition never actually says yes or no? It doesn't deal in certainty. Every single facial "match" is really just a number on a sliding scale — and someone, somewhere, decided where to draw the line.

If you've ever unlocked your phone with your face, you've trusted this system. If you've ever been tagged in a photo automatically, you've seen it work. But most people assume the technology is giving a definitive answer. So here's the question that threads through today's episode — who decides what counts as a match, and how much does that decision actually matter?

Let's start with how a face becomes something a computer can work with. A facial recognition system measures the geometry of your face — the distance between your eyes, the shape of your jaw, the angles of your cheekbones. Then it converts all of that into a list of about a hundred and twenty-eight numbers. Think of it like turning your face into a unique coordinate on a map. Except this map doesn't have two dimensions — it has a hundred and twenty-eight. That list of numbers is called a face embedding. It's basically a numerical fingerprint for your face.

So what happens when the system compares two faces? It measures the distance between their two coordinates on that massive map. The closer the two points, the more alike the faces. It's the same distance formula you learned in school — just stretched across way more dimensions. The result is a distance score. A small distance means the faces look very similar. A large distance means they don't. But here's the thing — that score is just a number on a continuum. By itself, it doesn't say "match" or "no match."
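To make that comparison concrete, here is a minimal sketch in Python. It assumes the embeddings are plain lists of a hundred and twenty-eight numbers and that the distance is ordinary Euclidean distance, as described above. The `fake_embedding` and `nudge` helpers are hypothetical stand-ins for a real face model, which this sketch does not include.

```python
import math
import random

EMBEDDING_SIZE = 128  # the figure quoted in the episode; real systems may differ


def fake_embedding(seed):
    """Hypothetical stand-in for a face model: 128 pseudo-random numbers."""
    rng = random.Random(seed)
    return [rng.gauss(0.0, 1.0) for _ in range(EMBEDDING_SIZE)]


def nudge(embedding, amount, seed):
    """Simulate a second photo of the same face by adding a little noise."""
    rng = random.Random(seed)
    return [x + rng.gauss(0.0, amount) for x in embedding]


def distance(a, b):
    """Euclidean distance: the school formula, applied across 128 dimensions."""
    return math.dist(a, b)


alice = fake_embedding(1)
alice_again = nudge(alice, 0.1, 2)  # same face, slightly different photo
bob = fake_embedding(3)             # a different face

same_person = distance(alice, alice_again)
different_people = distance(alice, bob)

print(f"same person:      {same_person:.2f}")
print(f"different people: {different_people:.2f}")
```

Both results are just numbers. Nothing in this code says "match" or "no match" yet.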

The Bottom Line

Now here's where it gets clever — and a little unsettling. Someone has to pick a cutoff point. That cutoff is called the threshold. Think of it like a blood alcohol limit for driving. Just below the legal limit, you're fine. Just above it, you're facing charges. The biological difference is basically nothing — but the consequence is enormous. Facial recognition thresholds work the same way. Set the threshold strict, and you'll miss some real matches — but you'll rarely flag the wrong person. Set it loose, and you'll catch more true matches — but you'll also accuse more innocent people. Research has shown that shifting this threshold by a tiny amount can change the false positive rate by roughly ten times. That's not a glitch. That's how the system is designed to work. And unlike blood alcohol limits, these thresholds are rarely made public.
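The tradeoff can be sketched with a handful of numbers. The distance scores below are made up purely for illustration and do not come from any real system. The only assumption is that a pair counts as a match when its distance is at or below the threshold.

```python
# Hypothetical distance scores, invented for illustration only.
same_person_distances = [0.31, 0.38, 0.42, 0.47, 0.52, 0.58, 0.63, 0.71]
different_person_distances = [0.55, 0.62, 0.68, 0.74, 0.79, 0.85, 0.91, 0.98]


def error_rates(threshold):
    """Return (missed_match_rate, false_positive_rate) for a distance cutoff.

    A pair is called a match when its distance is at or below the threshold.
    """
    missed = sum(d > threshold for d in same_person_distances)
    wrongly_flagged = sum(d <= threshold for d in different_person_distances)
    return (
        missed / len(same_person_distances),
        wrongly_flagged / len(different_person_distances),
    )


strict = error_rates(0.50)  # small cutoff: fewer matches declared
loose = error_rates(0.70)   # large cutoff: more matches declared

print("strict:", strict)
print("loose: ", loose)
```

With these invented scores, the strict cutoff misses half of the real matches but flags nobody wrongly, while the loose cutoff catches most real matches and wrongly flags three of the eight different-person pairs. The scores never changed. Only the line did.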

Now here's what most people get wrong. When a system reports a "high confidence" match, most folks assume it means the system is sure. But that confidence number is really just how far below the threshold the score landed. A match labeled ninety-something percent under a loose threshold can actually be less reliable than a lower-scoring match under a strict one.
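Here is a rough sketch of why that happens. The `reported_confidence` formula below is a hypothetical one, chosen only to express "how far below the threshold the score landed" as a fraction. Real products compute and label confidence in their own ways, and the distances and thresholds here are invented.

```python
def reported_confidence(distance, threshold):
    """Hypothetical confidence: margin below the cutoff, as a share of the cutoff.

    Returns 0.0 when the distance is at or above the threshold (no match).
    """
    if distance >= threshold:
        return 0.0
    return (threshold - distance) / threshold


# Invented example: two systems, two different cutoffs.
loose_threshold = 0.90
strict_threshold = 0.50

# The faces compared under the loose cutoff are FARTHER apart (0.45)
# than the faces compared under the strict cutoff (0.40).
loose_report = reported_confidence(0.45, loose_threshold)
strict_report = reported_confidence(0.40, strict_threshold)

print(f"loose cutoff,  distance 0.45: {loose_report:.0%} confidence")
print(f"strict cutoff, distance 0.40: {strict_report:.0%} confidence")
```

Under these assumptions, the pair that is less alike gets the higher confidence label, simply because its cutoff was more generous.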

So here's the bottom line. Facial recognition doesn't give yes-or-no answers. It gives distance scores — and a human-chosen cutoff decides what counts as a match. That cutoff is a tradeoff between catching the right person and falsely flagging the wrong one. Next time you hear that facial recognition "confirmed" someone's identity, remember — the number behind the match matters more than the match itself. Worth thinking about the next time this technology shows up in a courtroom or a headline.
