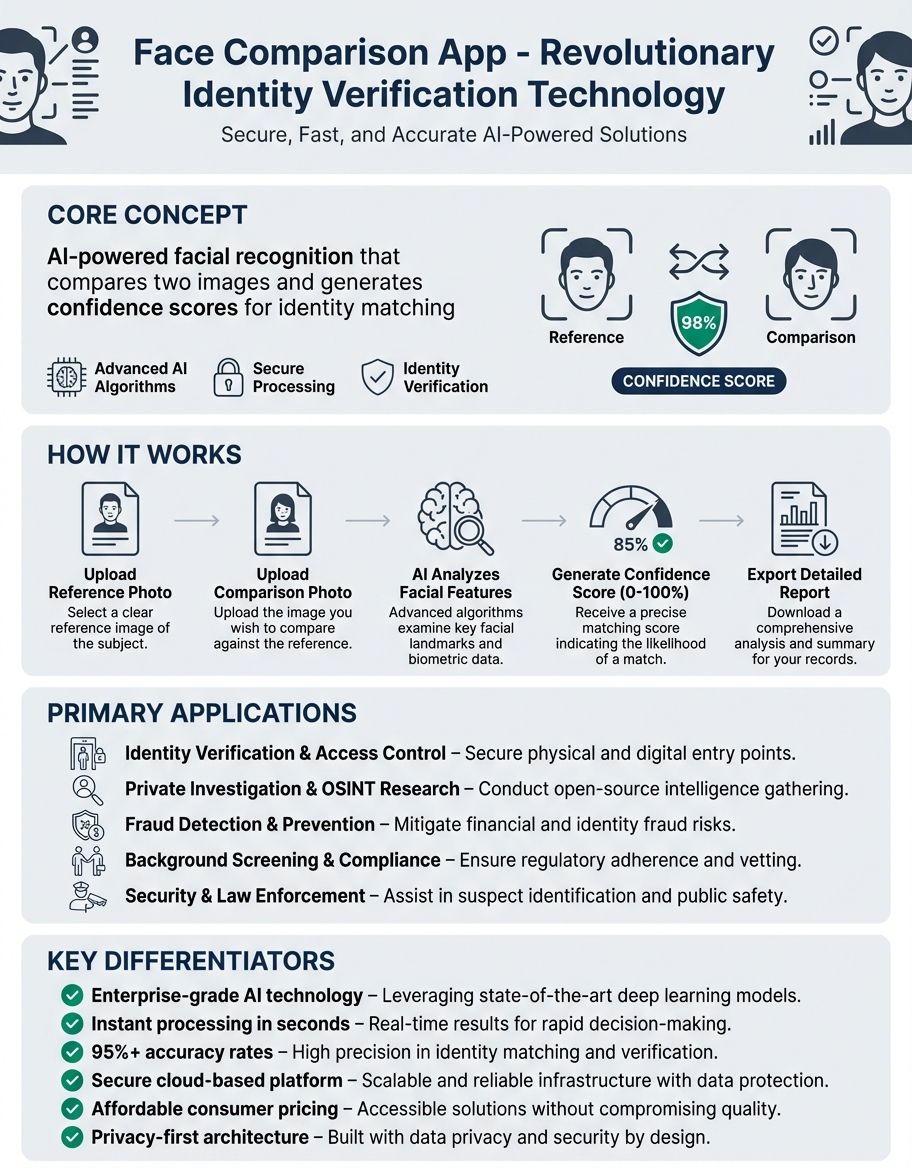

Face Comparison App - Advanced Face Recognition Technology

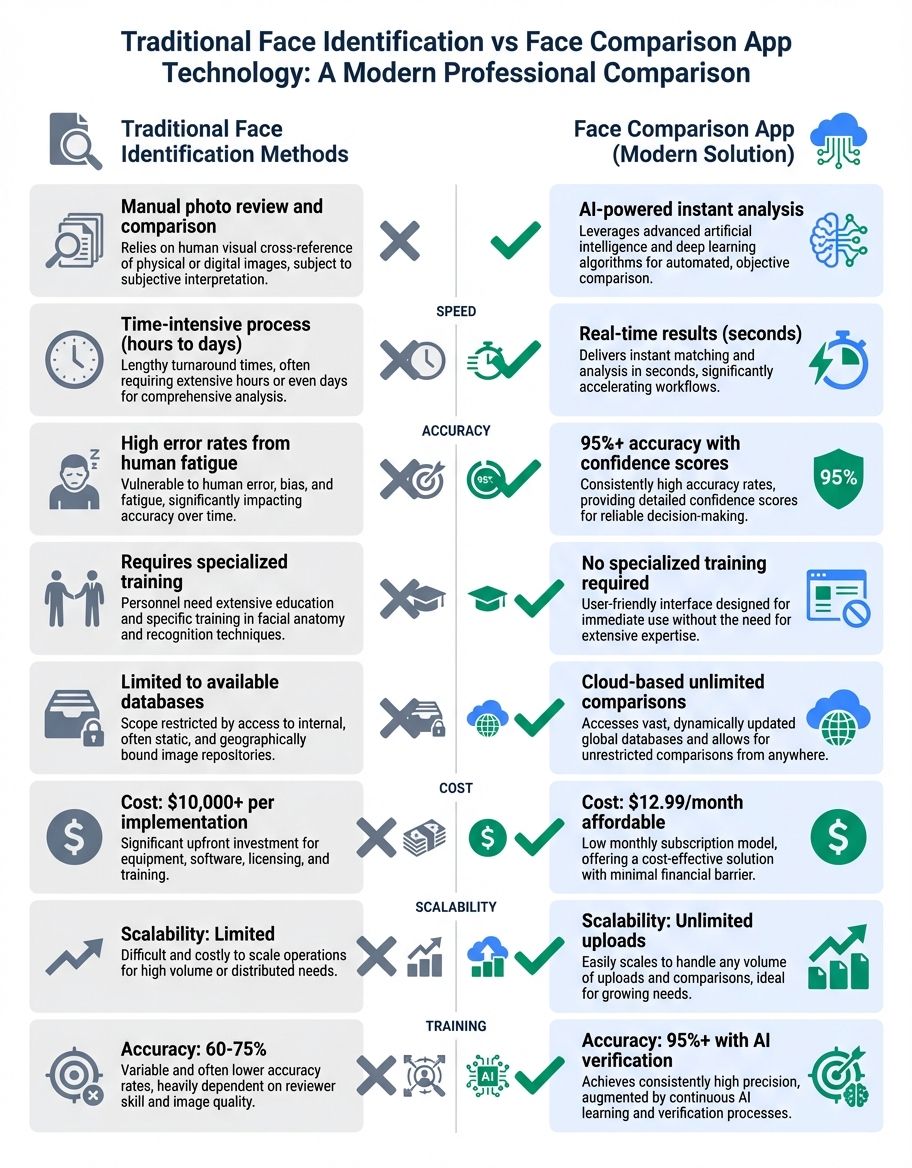

Face comparison technology has changed how we verify identities, secure access systems, and create engaging user experiences across digital platforms. Whether you want to compare face similarity between pictures, implement recognition algorithms in your application, or understand how modern image processing works, face comparison solutions offer capabilities that extend far beyond simple matching.

Face comparison applications analyze facial characteristics within images to determine similarity scores, verify identities, and enable a wide range of applications from security to entertainment. These tools process submitted images using recognition algorithms that map facial landmarks, compare geometric patterns, and calculate similarity scores and match percentages. As privacy concerns grow and data security becomes paramount, understanding how these applications work and what safeguards they implement has never been more important.

Understanding Face Comparison Technology and Similar Faces Analysis

Face comparison is one of the most recognizable applications of face matching. True face comparison goes beyond simple filters and transformations: it relies on comparison algorithms that analyze face structure, proportions, and facial characteristics. These systems use neural networks trained on millions of images to identify distinguishing characteristics and calculate match scores between different images. For a comprehensive overview of face comparison technology and its applications, explore our face comparison guide. You may also find our guide on AI face comparison helpful for understanding how artificial intelligence enhances facial recognition accuracy.

Modern face processing implementations leverage deep learning models that remain robust to variations in lighting, angle, and partial obstruction. The technology processes each image to extract a feature vector representing the face's unique characteristics, then compares these vectors to determine match likelihood. Learn more about specific techniques to compare two faces for similarity and understand match scores. This approach enables applications to handle real-world scenarios where images may vary in quality, resolution, or capture conditions.
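To make the vector comparison step concrete, here is a minimal sketch using cosine similarity, one common way to compare feature vectors. The four-dimensional vectors and the names `face_a`, `face_b`, and `face_c` are made up for illustration; real embeddings come from a trained model and usually have far more dimensions.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Return the cosine of the angle between two feature vectors."""
    if len(a) != len(b):
        raise ValueError("feature vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("feature vectors must be non-zero")
    return dot / (norm_a * norm_b)


# Toy vectors (assumed values, not output of a real model).
face_a = [0.12, 0.80, 0.35, 0.41]
face_b = [0.10, 0.78, 0.40, 0.39]  # close to face_a
face_c = [0.90, 0.05, 0.10, 0.02]  # far from face_a

same_person_score = cosine_similarity(face_a, face_b)
different_person_score = cosine_similarity(face_a, face_c)
```

A score near 1 means the vectors point in almost the same direction; where to draw the line between match and non-match depends on the model and the application.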

The computational requirements for face processing have decreased significantly, allowing mobile devices to perform complex comparisons locally without sending data to external servers. This local processing addresses privacy concerns while maintaining fast response times. Developers implementing face comparison features can choose between cloud-based APIs, which can run larger and typically more accurate models, and on-device processing, which prioritizes privacy and offline functionality.

Face analysis technology also incorporates liveness verification to prevent spoofing attempts that use photographs or videos of a person. These anti-spoofing measures analyze texture patterns, depth information, and micro-movements to verify that a live person is present rather than a static photo. Such security features make face processing technology suitable for identity verification applications where accuracy and fraud prevention are critical.

How Face Detection and Face Features Power Face Comparison

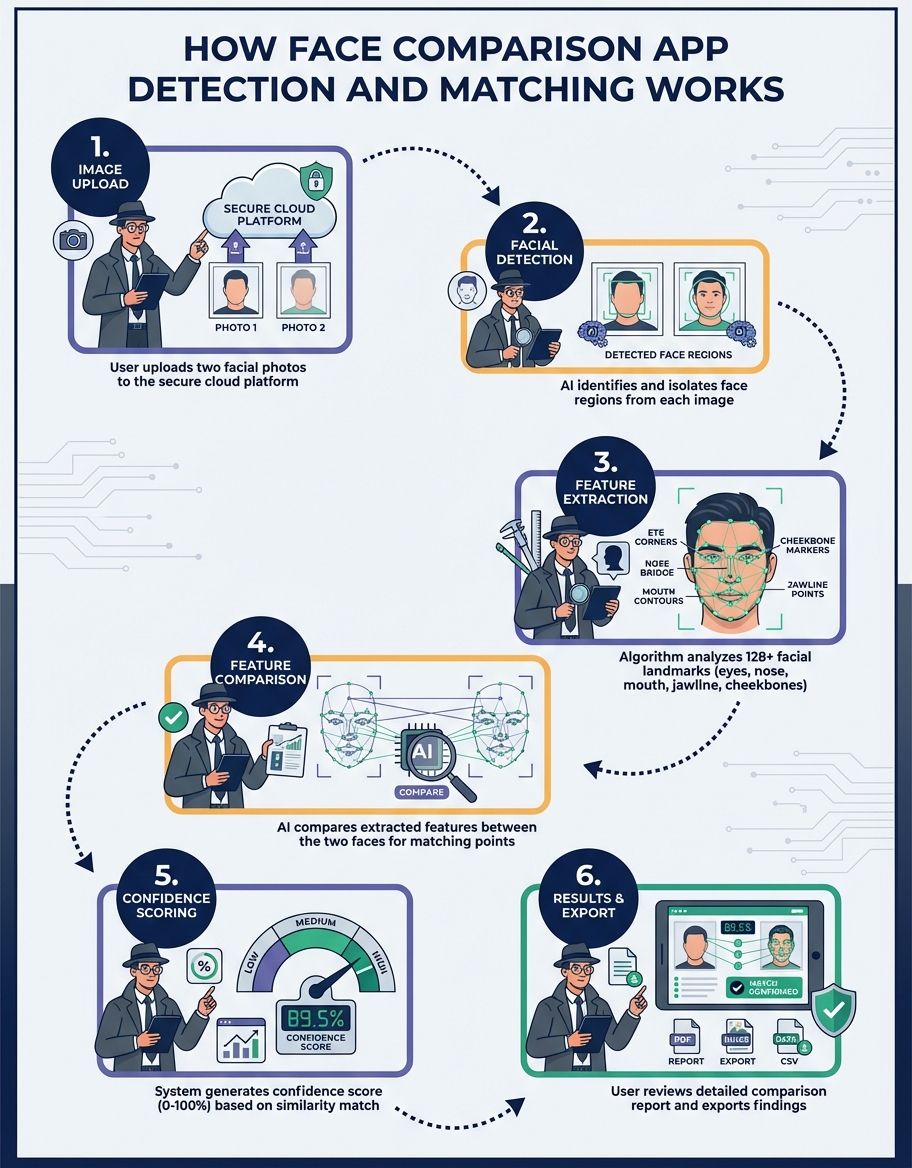

Face detection forms the foundation of all face comparison functionality, identifying face regions within images before any comparison can occur. Advanced face detection algorithms locate faces in complex scenes, handling multiple faces, varying poses, and challenging lighting conditions. The detection phase extracts bounding boxes around face regions and identifies key landmarks such as eyes, nose, mouth, and jawline that serve as reference points for subsequent analysis.

Modern face detection systems employ cascade classifiers and convolutional neural networks that achieve high accuracy even in crowded scenes or with partially obscured faces. These algorithms can process images in milliseconds, making real-time face detection feasible for video streams and live camera feeds. The detection stage filters out non-face elements and prepares normalized face regions for the comparison algorithms that follow.

Detection quality directly impacts comparison accuracy, as poorly detected face boundaries lead to incomplete feature extraction and unreliable match scores. High-quality face detection systems handle edge cases like profile views, tilted heads, and faces partially hidden by objects or other people. They also implement scale invariance, detecting faces regardless of their size within the image, which proves essential when comparing pictures taken at different distances or resolutions.

The evolution of face detection technology has enabled face comparison applications to work with historical photographs, low-resolution images, and even artistic renderings where traditional pixel-based approaches would fail. By focusing on structural relationships between facial features rather than raw pixel values, modern detection algorithms maintain reliability across diverse input types and quality levels.

Image Processing and Face Compare Techniques

Image processing transforms raw images into normalized representations suitable for accurate comparison. This preprocessing stage handles variations in lighting, color balance, contrast, and resolution that would otherwise interfere with accurate matching. Advanced image processing pipelines apply histogram equalization, noise reduction, and edge enhancement to extract maximum information from each image while minimizing irrelevant variations.
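Histogram equalization, mentioned above, can be sketched in a few lines. The version below is a plain-Python illustration for an 8-bit grayscale image stored as a list of rows; production pipelines would normally use an optimized library routine instead. The `low_contrast` sample image is invented for the example.

```python
from typing import List


def equalize_histogram(gray: List[List[int]]) -> List[List[int]]:
    """Spread 8-bit grayscale intensities across the full 0-255 range."""
    hist = [0] * 256
    for row in gray:
        for value in row:
            hist[value] += 1

    # Cumulative distribution of pixel intensities.
    cdf = []
    running = 0
    for count in hist:
        running += count
        cdf.append(running)

    total = cdf[-1]
    if total == 0:
        return [row[:] for row in gray]  # empty image
    cdf_min = next(c for c in cdf if c > 0)
    if total == cdf_min:
        return [row[:] for row in gray]  # flat image, nothing to stretch

    # Standard equalization mapping, rounded to the nearest intensity level.
    lut = [max(0, round((c - cdf_min) / (total - cdf_min) * 255)) for c in cdf]
    return [[lut[value] for value in row] for row in gray]


# Invented low-contrast patch: all values sit between 100 and 130.
low_contrast = [
    [100, 100, 110, 110],
    [100, 120, 120, 110],
    [130, 130, 120, 110],
]
equalized = equalize_histogram(low_contrast)
```

After equalization the darkest input level maps to 0 and the brightest to 255, so the narrow 100-130 band is stretched over the whole range.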

Color space conversions play a crucial role, as many comparison algorithms work more effectively in grayscale or specialized color spaces that separate luminance from chrominance information. Image normalization ensures that faces occupy similar regions within processed frames, removing perspective distortions and aligning facial landmarks to standard positions. This geometric normalization allows comparison algorithms to focus on intrinsic facial characteristics rather than pose differences.
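As a small illustration of the grayscale conversion described above, the sketch below uses the widely used ITU-R BT.601 luma weights. Representing an image as nested lists of `(r, g, b)` tuples is an assumption made to keep the example dependency-free.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]


def rgb_to_gray(r: int, g: int, b: int) -> int:
    """Weighted sum of the channels using BT.601 luma coefficients."""
    return round(0.299 * r + 0.587 * g + 0.114 * b)


def to_grayscale(image: List[List[Pixel]]) -> List[List[int]]:
    """Convert every RGB pixel of an image to a single luminance value."""
    return [[rgb_to_gray(*pixel) for pixel in row] for row in image]


# Two-pixel example image: one white pixel, one black pixel.
gray_image = to_grayscale([[(255, 255, 255), (0, 0, 0)]])
```

Green contributes the most to perceived brightness and blue the least, which is why the weights are not equal.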

Quality assessment algorithms automatically evaluate image suitability for comparison, flagging photographs with excessive blur, poor lighting, or insufficient resolution. These quality checks prevent false matches caused by comparing a high-quality photo against a degraded one from which facial characteristics cannot be reliably extracted. Smart image processing can enhance marginal-quality pictures to make them usable, applying sharpening, denoising, and dynamic range adjustments that recover lost detail.
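One common heuristic for the blur check described above is the variance of the Laplacian: sharp images have strong edges and therefore a high variance, while blurred images do not. The sketch below is a plain-Python illustration; the default `threshold` of 100.0 is an arbitrary assumption and would need tuning per camera and resolution.

```python
from typing import List


def laplacian_variance(gray: List[List[float]]) -> float:
    """Variance of the 4-neighbour Laplacian over interior pixels."""
    height = len(gray)
    width = len(gray[0]) if height else 0
    if height < 3 or width < 3:
        raise ValueError("image must be at least 3x3 pixels")
    responses = []
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            responses.append(
                gray[y - 1][x] + gray[y + 1][x]
                + gray[y][x - 1] + gray[y][x + 1]
                - 4 * gray[y][x]
            )
    mean = sum(responses) / len(responses)
    return sum((r - mean) ** 2 for r in responses) / len(responses)


def is_sharp_enough(gray: List[List[float]], threshold: float = 100.0) -> bool:
    """Flag an image as usable when its edge energy exceeds the threshold."""
    return laplacian_variance(gray) >= threshold


# Synthetic test images: a checkerboard (many edges) and a flat gray patch.
checkerboard = [[255 if (x + y) % 2 == 0 else 0 for x in range(8)] for y in range(8)]
flat_patch = [[128] * 8 for _ in range(8)]
```

An application could run a check like this before comparison and ask the user for a new photo when it fails.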

Modern image processing pipelines also handle format conversions, supporting submissions in various file formats from JPEG to PNG to RAW camera files. They optimize file sizes for efficient transmission and storage while preserving the facial detail needed for accurate comparisons. Batch processing capabilities enable applications to compare entire image libraries, processing thousands of images to find matches or identify duplicates.

Developer Resources and API Integration

Resources for implementing face comparison functionality range from comprehensive APIs to open-source libraries and cloud platforms offering managed services. Major providers offer face comparison APIs that handle the entire pipeline from detecting faces through matching, returning confidence scores and identified faces via simple REST endpoints. These APIs abstract away the complexity of model training and infrastructure management, allowing developers to integrate sophisticated face comparison with minimal code.

Documentation quality varies significantly across providers, with leading platforms offering interactive tutorials, code samples in multiple languages, and sandbox environments for testing without production costs. Developer communities around popular face comparison tools provide troubleshooting assistance, share optimization techniques, and contribute extensions that address specific use cases. Forums and support channels help developers navigate rate limits, understand pricing tiers, and optimize their implementations for speed and accuracy.

Open-source alternatives appeal to developers who need full control over their implementation or face regulatory requirements around data handling. Libraries like OpenCV, Dlib, and face_recognition provide pre-trained models and processing pipelines that run entirely on developer-controlled infrastructure. While these tools require more expertise to implement effectively, they avoid vendor lock-in and recurring service costs while keeping data entirely under the developer's control.

SDKs and client libraries simplify API integration across platforms, offering native implementations for iOS, Android, web applications, and server-side environments. These libraries handle authentication, request formatting, error handling, and response parsing, reducing implementation time and preventing common integration mistakes. Version control and backward compatibility commitments from major providers ensure that applications continue functioning as APIs evolve.

Developer pricing models range from free trial accounts suitable for small applications to enterprise contracts with dedicated support and custom SLAs. Usage-based pricing tiers let developers start small and scale costs with usage, while committed-use discounts reward applications with predictable traffic patterns. Understanding pricing structures and optimization opportunities helps developers control costs as their applications grow.

Using Photos for Accurate Face Comparison

Self-captured images have become the primary photo type for face comparison applications, offering consistent framing, good lighting, and full-face visibility. The selfie format provides natural face presentation with minimal distortion, though front-camera optics can introduce slight wide-angle effects. Applications optimized for self-taken captures provide guidance overlays that help users position their faces correctly, ensuring captured photos meet quality standards for reliable comparison.

Photo-based verification systems typically request multiple images captured in sequence to verify liveness and prevent spoofing attempts using static photographs. Random challenges like head turns, blinks, or smiles ensure that a live person is present rather than someone holding a photo or playing a video. These dynamic verification flows balance security with user experience, completing checks in seconds without requiring specialized equipment.
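The random challenge idea can be sketched as follows. The challenge names and the `session_passes` rule (every requested action observed, in order) are illustrative assumptions; detecting the actions in video frames is a separate problem not shown here.

```python
import random
from typing import List, Optional, Sequence

# Hypothetical challenge identifiers for illustration.
CHALLENGES = ("turn_head_left", "turn_head_right", "blink", "smile")


def pick_challenges(count: int = 2, rng: Optional[random.Random] = None) -> List[str]:
    """Choose distinct challenges in random order for one verification session."""
    if not 1 <= count <= len(CHALLENGES):
        raise ValueError("count must be between 1 and the number of challenges")
    rng = rng or random.Random()
    return rng.sample(CHALLENGES, count)


def session_passes(requested: Sequence[str], observed: Sequence[str]) -> bool:
    """Pass only if the observed actions match the requested ones in order."""
    return list(requested) == list(observed)


requested_actions = pick_challenges(count=2)
```

Because the sequence changes per session, a pre-recorded video is unlikely to contain the right actions in the right order. A production system would likely draw from a cryptographically strong source such as `random.SystemRandom`.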

Lighting conditions in selfies can vary dramatically depending on environment, with harsh overhead lighting or backlighting creating shadows that obscure facial features. Advanced processing algorithms compensate for these variations, normalizing lighting across the face and recovering detail from shadowed regions. Some applications provide real-time feedback during capture, alerting users to move toward better lighting or adjust their phone angle for optimal results.

The front-facing camera quality on modern smartphones has improved dramatically, with many devices now offering high-resolution sensors, wide dynamic range, and computational photography features that enhance facial detail. However, older devices and budget phones may produce lower-quality selfies that challenge comparison algorithms. Adaptive quality thresholds account for device capabilities, adjusting acceptance criteria based on the camera specifications detected. For another perspective, check out our face similarity checker resource.

Key Characteristics That Define Modern Face Comparison Applications

Modern face comparison applications distinguish themselves through features that balance accuracy, speed, privacy protection, and user experience. Real-time processing capabilities enable instant feedback during photo capture and immediate comparison results, eliminating frustrating wait times that plague older implementations. Advanced applications process comparisons locally on-device when possible, avoiding network latency and keeping sensitive biometric information under user control.

Batch comparison features allow users to compare one reference image against an entire album or collection, automatically identifying all images containing a match. This functionality proves invaluable for organizing photo libraries, finding duplicates, or locating all pictures of a specific person across years of accumulated images. Progress indicators and cancelable operations ensure users remain in control during lengthy batch processes.
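A batch comparison with progress reporting and cancellation might look like the sketch below. It assumes each album image has already been reduced to a feature vector; the file names, the vectors, and the 0.9 threshold are invented for illustration.

```python
import math
import threading
from typing import Dict, Iterator, List, Sequence, Tuple


def _cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two non-zero vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def batch_compare(
    reference: Sequence[float],
    album: Dict[str, Sequence[float]],
    threshold: float,
    cancel: threading.Event,
) -> Iterator[Tuple[int, int, List[str]]]:
    """Yield (done, total, matches_so_far) after each image; stop when cancelled."""
    matches: List[str] = []
    total = len(album)
    for done, (name, embedding) in enumerate(album.items(), start=1):
        if cancel.is_set():
            return
        if _cosine(reference, embedding) >= threshold:
            matches.append(name)
        yield done, total, list(matches)


# Invented album of pre-computed feature vectors.
album = {
    "img_001.jpg": [0.9, 0.1, 0.0],
    "img_002.jpg": [0.0, 1.0, 0.0],
    "img_003.jpg": [1.0, 0.0, 0.05],
}
reference = [1.0, 0.0, 0.0]
progress = list(batch_compare(reference, album, 0.9, threading.Event()))
```

A user interface can draw a progress bar from `done` and `total`, and a cancel button only needs to call `set()` on the event.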

Privacy features have become non-negotiable for reputable face comparison applications, with transparent data handling policies and user controls over image retention. Leading applications delete submitted images immediately after processing, maintain encryption during transmission and storage, and provide audit logs showing exactly how user content has been accessed. Compliance with regulations like GDPR and CCPA requires explicit consent flows and easy data deletion options.

Integration features extend face comparison functionality beyond standalone applications, offering plugins for photo management software, security camera systems, access control platforms, and custom business applications. Webhook notifications alert other systems when matches occur, enabling automated workflows that respond to face comparison events. These integration capabilities transform face comparison from an isolated feature into a building block for comprehensive solutions.

Accuracy reporting gives users confidence in comparison results by displaying match percentages, confidence scores, and quality assessments for input images. Advanced applications explain why certain comparisons may be unreliable due to poor lighting, obstructions, or extreme pose variations. This transparency helps users understand the technology's capabilities and limitations, setting appropriate expectations for different use cases.

API Solutions for Scalable Face Comparison

Face comparison APIs enable developers to implement sophisticated matching capabilities without building and training their own models. Cloud-based API services handle the computational overhead of running deep learning models, providing elastic scalability that automatically adjusts to traffic patterns. These managed services eliminate infrastructure concerns, allowing development teams to focus on application logic and user experience rather than model optimization and server management.

Authentication and security mechanisms protect API access through token-based systems, OAuth flows, and IP allowlisting that prevent unauthorized usage. Rate limiting prevents abuse while ensuring fair resource allocation across customers, with burst allowances accommodating temporary traffic spikes. Comprehensive monitoring dashboards track API usage, response times, error rates, and costs, giving developers visibility into their integration's health and performance.
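Rate limiting with a burst allowance is often implemented as a token bucket. The sketch below is one possible shape, not any particular provider's implementation; the class name, parameters, and injectable `clock` are assumptions chosen to keep the example testable.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow `rate_per_sec` requests on average, with bursts up to `burst`."""

    def __init__(
        self,
        rate_per_sec: float,
        burst: int,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.rate = rate_per_sec
        self.capacity = float(burst)
        self.tokens = float(burst)  # start full so an initial burst is allowed
        self.clock = clock
        self.last = clock()

    def allow(self) -> bool:
        """Consume one token if available; otherwise reject the request."""
        now = self.clock()
        # Refill in proportion to elapsed time, capped at the burst capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


# Example: 2 requests per second on average, bursts of up to 3.
limiter = TokenBucket(rate_per_sec=2.0, burst=3)
```

A client that sends three requests at once is served immediately, while a fourth has to wait until the bucket refills.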

Response formats from face comparison APIs typically include match confidence scores, detected face coordinates, quality assessments, and any warnings or errors encountered during processing. Structured JSON responses make integration straightforward across programming languages and platforms. Webhook callbacks enable asynchronous processing for large batches, notifying applications when results become available rather than requiring continuous polling.
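To illustrate handling such a response, here is a minimal parsing sketch. The JSON field names (`confidence`, `faces`, `quality`, `warnings`, `error`) are hypothetical and do not correspond to any specific provider; consult the provider's reference for the real schema.

```python
import json
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class CompareResult:
    confidence: float
    face_boxes: List[Dict[str, int]]
    quality: Dict[str, float] = field(default_factory=dict)
    warnings: List[str] = field(default_factory=list)


def parse_compare_response(body: str) -> CompareResult:
    """Turn a JSON response body into a typed result, raising on API errors."""
    data = json.loads(body)
    if "error" in data:
        raise ValueError(f"comparison failed: {data['error']}")
    return CompareResult(
        confidence=float(data["confidence"]),
        face_boxes=list(data.get("faces", [])),
        quality=dict(data.get("quality", {})),
        warnings=list(data.get("warnings", [])),
    )


# Hypothetical response body for illustration only.
SAMPLE_BODY = """
{
  "confidence": 0.93,
  "faces": [
    {"x": 40, "y": 52, "width": 120, "height": 120},
    {"x": 33, "y": 48, "width": 118, "height": 121}
  ],
  "quality": {"image_1": 0.88, "image_2": 0.61},
  "warnings": ["low_light"]
}
"""
result = parse_compare_response(SAMPLE_BODY)
```

Parsing into a typed structure early keeps the rest of the application from depending on raw dictionary keys.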

Versioning strategies from providers balance innovation with stability, offering long-term support for established API versions while introducing enhanced capabilities in newer releases. Deprecation timelines give developers adequate notice to migrate to updated versions, with side-by-side comparison tools demonstrating differences in behavior and accuracy. This evolutionary approach lets providers improve their services without breaking existing integrations.

Online Face Comparison Solutions vs Local Processing

Online face comparison solutions leverage cloud computing resources to deliver state-of-the-art accuracy using the latest models and algorithms. These cloud-based services continuously improve through model updates, incorporating advances in machine learning research without requiring application updates. Their elastic scalability handles usage spikes gracefully, from holiday photo uploads to viral social media features that drive sudden traffic surges.

Network connectivity requirements for online solutions create dependency on reliable internet access, potentially limiting functionality in areas with poor coverage or during outages. Latency between submission, processing, and response impacts user experience, particularly for real-time applications where immediate feedback is essential. Bandwidth consumption becomes a concern for applications processing large numbers of high-resolution photographs, especially for users on metered connections or in regions with expensive data plans.

Local processing approaches run face comparison algorithms directly on user devices, eliminating network dependencies and ensuring functionality even offline. Privacy-conscious users appreciate that their pictures never leave their devices, addressing concerns about unauthorized data collection or breaches of cloud storage. On-device processing also avoids ongoing API costs, making local solutions more economical for high-volume applications despite potentially higher upfront development costs.

Performance tradeoffs between online and local processing depend on device capabilities and model complexity. Modern smartphones include specialized neural processing units that accelerate machine learning tasks, enabling sophisticated face comparison in real-time. However, devices more than a few years old may struggle with computationally intensive models, resulting in slow processing or reduced accuracy compared to cloud-based alternatives.

Hybrid architectures combine the benefits of both approaches, performing initial screening and quality checks locally before sending promising candidates to cloud services for detailed analysis. This strategy minimizes data transmission, reduces API costs, and preserves privacy for images that don't require cloud processing, while still leveraging cloud-based resources when maximum accuracy is needed. Smart fallback logic ensures applications remain functional even when network connectivity is unavailable.
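The hybrid flow can be sketched as a small decision function. Everything here is illustrative: `cloud_compare` stands in for whatever network call an application makes, and the `screen_threshold` of 0.30 is an assumed cut-off below which a pair is rejected locally.

```python
from typing import Callable, Tuple


def hybrid_compare(
    local_score: float,
    cloud_compare: Callable[[], float],
    screen_threshold: float = 0.30,
) -> Tuple[float, str]:
    """Return (score, source), where source names the path that produced the score."""
    # Clearly dissimilar pairs are rejected on-device and never uploaded.
    if local_score < screen_threshold:
        return local_score, "local"
    try:
        # Promising candidates get a second, more detailed cloud comparison.
        return cloud_compare(), "cloud"
    except ConnectionError:
        # Network unavailable: fall back to the on-device result.
        return local_score, "local-fallback"


def offline_cloud() -> float:
    """Stand-in for a cloud call made while the device has no connectivity."""
    raise ConnectionError("no network")


screened_out = hybrid_compare(0.10, lambda: 0.99)
refined = hybrid_compare(0.60, lambda: 0.95)
fallback = hybrid_compare(0.60, offline_cloud)
```

Returning the source alongside the score lets the application tell users when a result came from the less detailed on-device path.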

Comparison Table: Face Comparison Application Capabilities

| Capability | Description | Use Case | Accuracy Level | Privacy Protection |
|---|---|---|---|---|
| Real-time Detection | Instant face identity verification in live camera feeds | Access systems, access control | 95-99% | Local processing available |
| Batch Comparison | Compare one photo against thousands in seconds | Image organization, duplicate detection | 90-95% | Submission encryption required |
| Liveness Detection | Verify live person vs photo or video | Identity verification, fraud prevention | 98-99.5% | Biometric information handling |
| Multi-face Processing | Identify and compare multiple faces in one image | Event photos, crowd analysis | 85-92% | Group image consent issues |
| Cross-age Comparison | Match faces across different life stages | Historical photo matching, missing persons | 80-88% | Sensitive information considerations |
| Low-light Enhancement | Process photographs taken in poor lighting conditions | Surveillance, outdoor events | 75-85% | Image quality affects privacy |

Frequently Asked Questions

How does face comparison work in modern applications?

Face comparison works by extracting unique facial characteristics from pictures and calculating similarity scores between them. Applications use neural networks to identify landmarks like eye positions, nose shape, and face contours, creating mathematical representations called feature vectors. These vectors are then compared using distance metrics, with smaller distances indicating higher resemblance. Modern systems achieve accuracy above 95% under good conditions, though factors like lighting, angle, and photo quality affect results. The technology processes faces in milliseconds, enabling real-time comparison for security and verification applications.
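A distance-based comparison can be sketched with the Euclidean metric from Python's standard library. The `max_distance` of 0.6 is an assumed cut-off for illustration; the right value depends on the model that produced the vectors.

```python
import math
from typing import Sequence


def euclidean_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Straight-line distance between two feature vectors of equal length."""
    return math.dist(a, b)


def is_match(a: Sequence[float], b: Sequence[float], max_distance: float = 0.6) -> bool:
    """Treat two faces as a match when their vectors are close enough."""
    return euclidean_distance(a, b) <= max_distance


# Invented two-dimensional vectors; real feature vectors are much longer.
close_pair = is_match([0.10, 0.20], [0.10, 0.25])
far_pair = is_match([0.0, 0.0], [3.0, 4.0])
```

Lowering `max_distance` makes the check stricter (fewer false matches, more missed matches), and raising it does the opposite.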

How does face compare functionality differ across platforms?

Face compare functionality varies by platform based on processing power, privacy requirements, and intended use cases. Mobile applications prioritize speed and battery efficiency, often using optimized models that trade some accuracy for faster performance. Cloud platforms leverage powerful servers to run more sophisticated algorithms, achieving higher accuracy but requiring network connectivity and image submissions. Desktop applications balance these approaches, offering local processing with optional cloud enhancement. Each platform implements different security measures based on its data handling requirements and regulatory environment.

How does face matching technology ensure accuracy?

Face matching technology ensures accuracy through multiple validation stages and quality checks. Systems first assess photo quality, rejecting images with excessive blur, poor lighting, or insufficient resolution. Detection algorithms locate face regions with high confidence before proceeding to feature extraction. Comparison results include confidence scores that indicate match reliability, allowing applications to set thresholds appropriate for their security requirements. Liveness detection prevents spoofing with static images or videos. Regular model updates incorporate the latest research advances, continuously improving accuracy as technology evolves.

How does the face comparison application handle privacy concerns?

Face comparison applications handle privacy concerns through data minimization, encryption, and transparent policies. Leading applications delete submitted images immediately after processing, retaining only necessary metadata. End-to-end encryption protects data during transmission, while secure deletion ensures removed content cannot be recovered. Privacy-focused implementations offer local processing options where photographs never leave the user's device. Compliance with GDPR, CCPA, and other regulations requires explicit consent, data access rights, and straightforward deletion procedures. Applications disclose exactly what content is collected, how it's used, and how long it's retained.

How does face recognition integrate with face comparison?

Face recognition integrates with face comparison by using comparison algorithms to match detected faces against known identities in a database. Recognition systems first perform face detection and feature extraction, then compare the extracted characteristics against stored templates for registered individuals. When comparison scores exceed confidence thresholds, the system returns the matched identity. This combination enables applications to not just determine if two pictures match, but to automatically identify individuals from images. Access control systems use this integration to grant entry to recognized personnel, while image management applications automatically tag and organize images by person.
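The step from comparison to recognition can be sketched as a nearest-template search with a threshold. The stored `templates`, the names, and the `max_distance` of 0.6 are assumptions for illustration; real systems store vectors produced by their own model.

```python
import math
from typing import Dict, Optional, Sequence


def identify(
    probe: Sequence[float],
    templates: Dict[str, Sequence[float]],
    max_distance: float = 0.6,
) -> Optional[str]:
    """Return the closest registered identity, or None if nobody is close enough."""
    if not templates:
        return None
    name, template = min(
        templates.items(), key=lambda item: math.dist(probe, item[1])
    )
    return name if math.dist(probe, template) <= max_distance else None


# Invented stored templates for two registered people.
templates = {
    "alice": [0.10, 0.90],
    "bob": [0.80, 0.20],
}
recognized = identify([0.15, 0.85], templates)
unknown = identify([5.0, 5.0], templates)
```

Returning `None` for distant probes matters as much as finding the nearest template: without the threshold, every visitor would be labeled as whichever registered person happens to be closest.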

How do face recognition applications process multiple faces?

Face recognition applications process multiple faces by detecting all face regions in a photo, then running comparison algorithms on each detected face independently. Advanced systems identify overlapping faces in crowded scenes and handle partial occlusions where one person blocks another. Processing pipelines prioritize faces by size or position, handling prominent faces first before moving to smaller or peripheral ones. Batch processing enables simultaneous comparison of all detected faces against a database of known individuals, returning results for each match. Performance optimization techniques like parallel processing ensure that multi-face images process quickly despite the increased computational requirements.
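Prioritizing faces by size can be as simple as sorting detected bounding boxes by area. The `FaceBox` type and the sample coordinates below are hypothetical; a detector would supply the actual boxes.

```python
from typing import List, NamedTuple


class FaceBox(NamedTuple):
    x: int
    y: int
    width: int
    height: int


def prioritize_faces(boxes: List[FaceBox]) -> List[FaceBox]:
    """Order detected faces from largest to smallest bounding-box area."""
    return sorted(boxes, key=lambda box: box.width * box.height, reverse=True)


# Invented detections from a group photo.
detections = [
    FaceBox(x=0, y=0, width=20, height=20),
    FaceBox(x=50, y=10, width=80, height=90),
    FaceBox(x=5, y=5, width=40, height=40),
]
ordered = prioritize_faces(detections)
```

Handling the largest faces first means the most prominent people in the image get results soonest, and small background faces can be skipped if a time budget runs out.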

How do recognition algorithms improve over time?

Recognition algorithms improve over time through continuous model training on larger, more diverse datasets. Machine learning systems learn from errors, adjusting their internal parameters to reduce misclassifications. Regular updates incorporate architectural innovations from academic research, such as improved neural network designs or more efficient feature extraction methods. Feedback loops capture real-world performance data, identifying scenarios where accuracy drops and prioritizing improvements in those areas. Hardware advances enable deployment of more sophisticated models that were previously too computationally expensive. This continuous evolution means that face comparison accuracy has improved dramatically over the past decade and continues to advance.