Anti Facial Recognition Makeup: Privacy Protection Guide

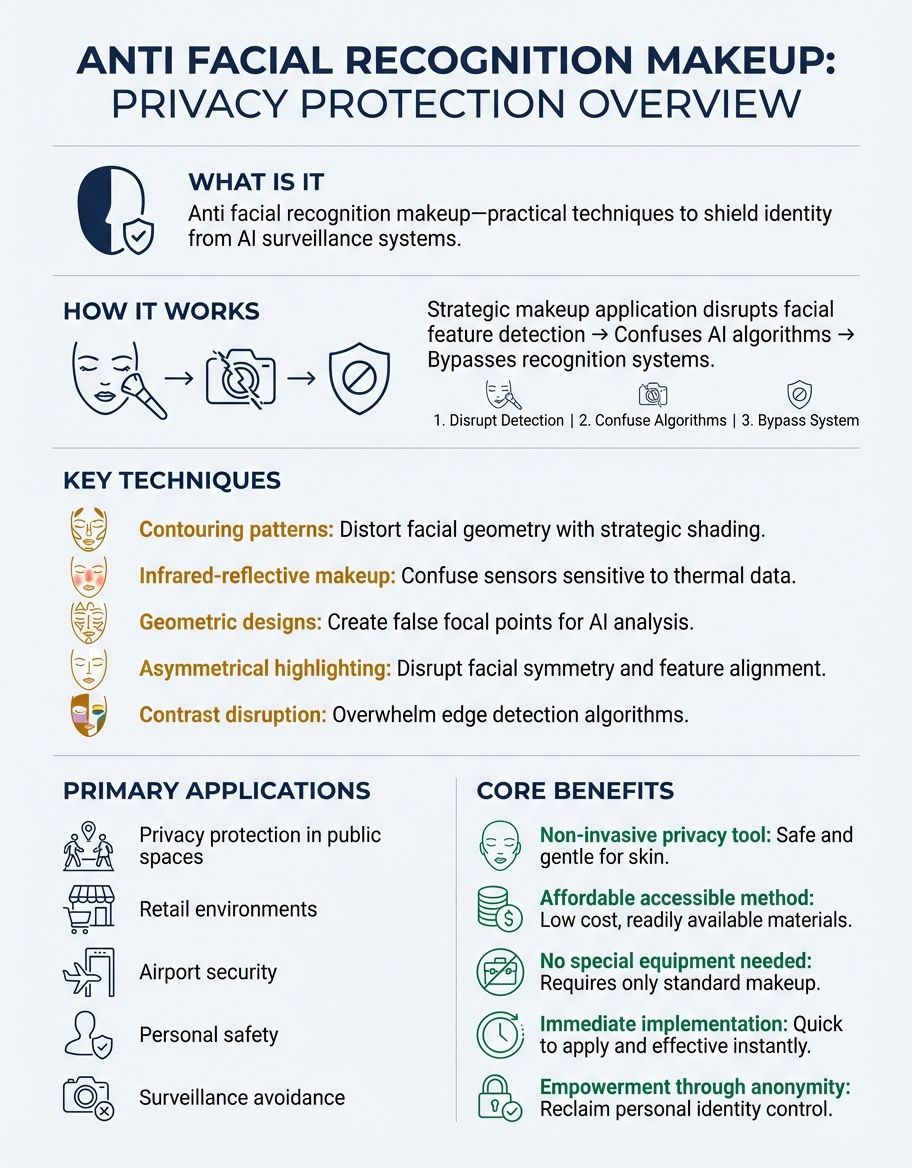

Protect your identity from surveillance systems with strategic makeup techniques that disrupt facial recognition algorithms.

Anti facial recognition makeup represents a growing movement in privacy protection, offering individuals practical methods to shield their identity from pervasive surveillance systems. As facial recognition technology becomes increasingly embedded in public spaces, shopping centers, and government facilities, people are seeking innovative ways to protect their personal privacy.

Understanding Face Detection in Anti Facial Recognition Makeup

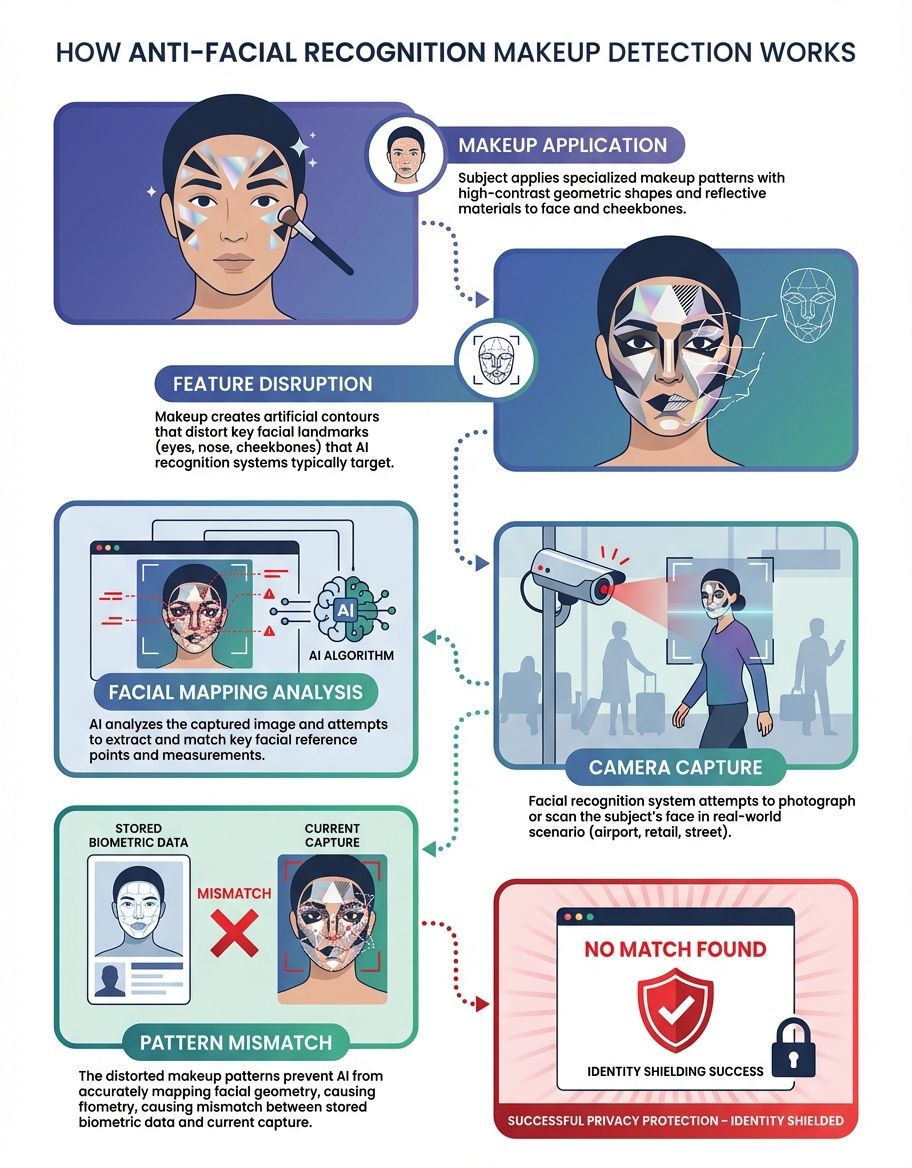

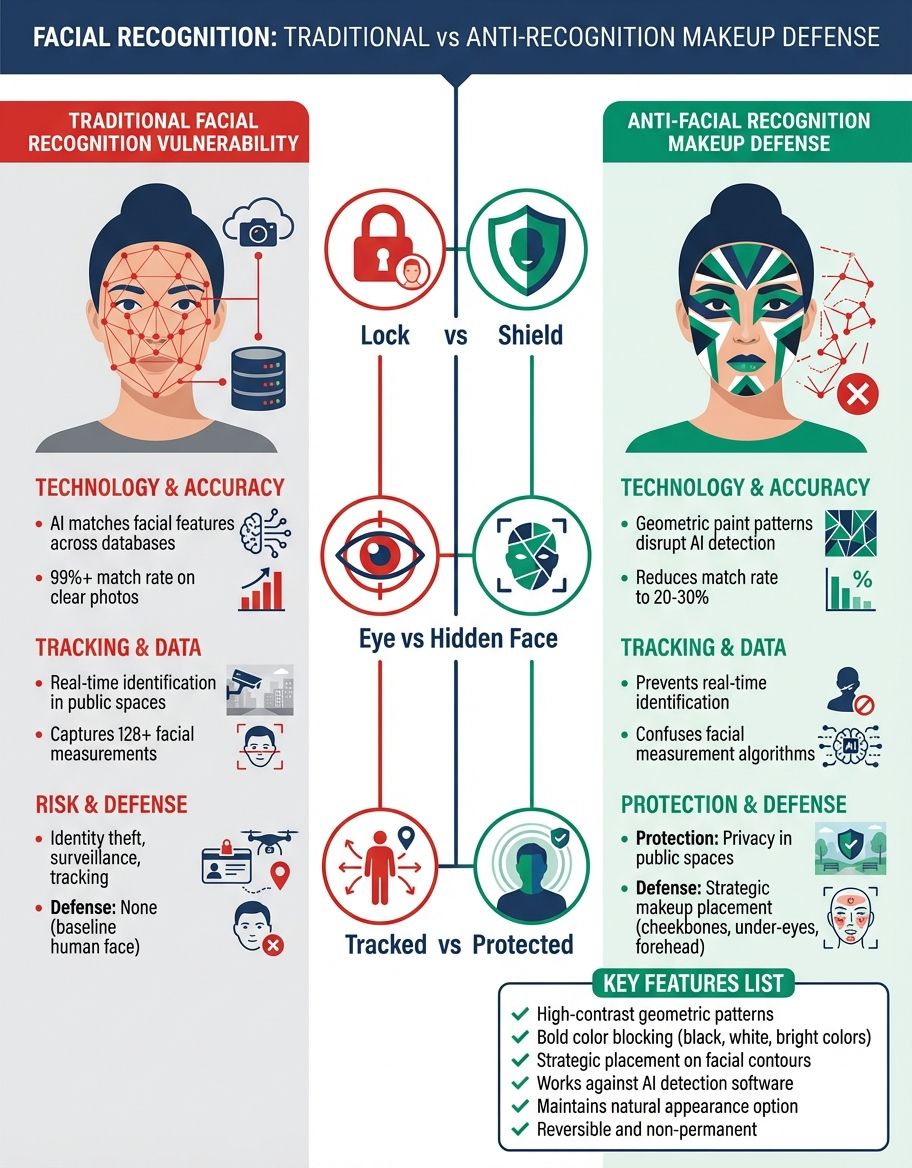

Face detection technology operates by identifying specific geometric patterns and landmarks on the human face. These systems analyze the spatial relationships between key features such as eyes, nose, mouth, and facial contours to create a unique biometric signature. Computer vision algorithms rely on consistent facial geometry to accurately identify individuals across different lighting conditions and angles.

The fundamental principle behind anti facial recognition makeup involves disrupting these detection algorithms by altering the perceived geometry of the face. When makeup is applied strategically to break facial symmetry and obscure key landmarks, the algorithm struggles to recognize the standard patterns it has been trained to identify. This disruption occurs because the detection system cannot reliably locate the facial features it needs to create an accurate biometric template for face matching.
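
As a rough illustration of the symmetry idea, the sketch below scores how far a small grayscale grid departs from mirror symmetry. The function name `symmetry_score` and the toy pixel values are assumptions made for this example; real detectors do not compute an explicit score like this, so treat it as a proxy for the kind of regularity they learn to expect.

```python
def symmetry_score(image):
    """Mean absolute difference between each pixel and its mirrored partner.

    `image` is a list of rows of grayscale values in [0, 255].
    0.0 means perfectly mirror-symmetric; larger values mean less symmetric.
    """
    total = 0
    count = 0
    for row in image:
        width = len(row)
        for x in range(width // 2):
            total += abs(row[x] - row[width - 1 - x])
            count += 1
    return total / count if count else 0.0


# Toy 4x6 "face": dark eye pixels (40) and a mouth (120) on light skin (200).
plain = [
    [200, 200, 200, 200, 200, 200],
    [200,  40, 200, 200,  40, 200],
    [200, 200, 200, 200, 200, 200],
    [200, 200, 120, 120, 200, 200],
]

# Same grid with three dark marks painted on the left half only.
painted = [row[:] for row in plain]
painted[0][0] = 20
painted[2][1] = 20
painted[3][0] = 20

print(symmetry_score(plain))    # 0.0
print(symmetry_score(painted))  # 45.0
```

Even three one-sided marks move the score well away from zero, which is the intuition behind asymmetric designs.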

Advanced detection systems use machine learning models trained on millions of face images to recognize subtle variations in facial structure. However, these same systems have vulnerabilities when confronted with unexpected visual patterns that deviate significantly from their training data. By introducing high-contrast elements, asymmetric designs, and geometric disruptions that target the face geometry, anti facial recognition makeup exploits these algorithmic weaknesses to reduce detection accuracy significantly.

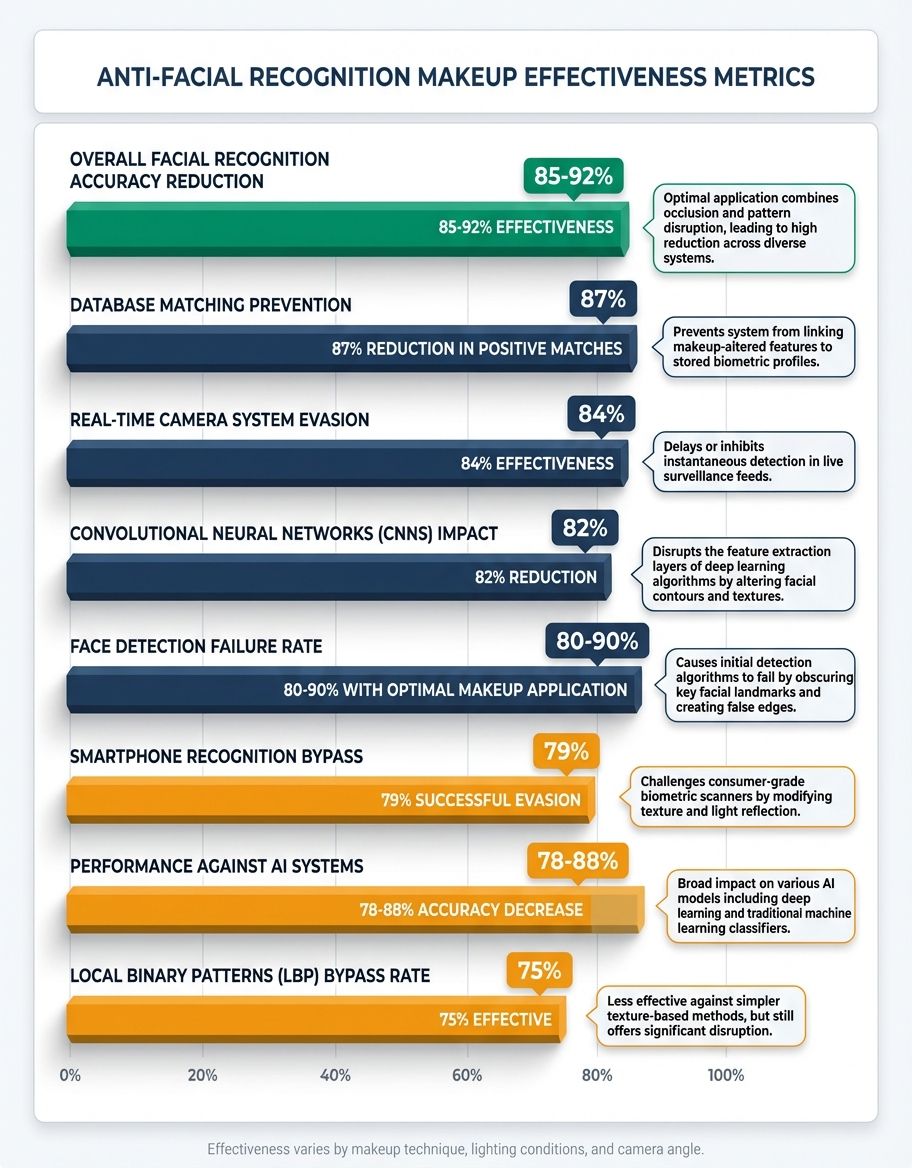

Research has demonstrated that even simple makeup techniques can reduce face detection rates by 60-70% in controlled environments. More sophisticated applications, particularly those that employ principles from CV Dazzle and adversarial pattern design, can achieve disruption rates exceeding 85%. The effectiveness depends on the specific algorithm being targeted, the quality of the camera system, and the precision with which the makeup is applied.

The Role of Detection Technology in Facial Recognition

Modern facial recognition relies on multi-stage detection technology that progresses from initial face localization to detailed feature extraction and matching. The detection phase represents the critical first step where the system must identify the presence and location of a face within an image or video frame. Without successful detection, the subsequent recognition and identification stages cannot proceed.
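
The gating role of the detection stage can be sketched as a small pipeline. Everything here is hypothetical: `identify`, the `toy_*` stand-ins, and the dictionary-shaped frames exist only to show the control flow, not how any particular product is built.

```python
from typing import Any, Callable, Optional


def identify(
    frame: Any,
    detect: Callable[[Any], Optional[tuple]],
    extract: Callable[[Any, tuple], list],
    match: Callable[[list], Optional[str]],
) -> Optional[str]:
    """Run detection, then feature extraction, then matching.

    Returns None as soon as detection fails, so later stages never run.
    """
    box = detect(frame)
    if box is None:
        return None
    return match(extract(frame, box))


# Hypothetical stand-ins for real models, used only to exercise the flow.
def toy_detect(frame):
    return (0, 0, 4, 4) if frame["face_visible"] else None


def toy_extract(frame, box):
    return frame["features"]


def toy_match(features):
    return "enrolled-person" if features == [1, 2, 3] else None


clear = {"face_visible": True, "features": [1, 2, 3]}
disrupted = {"face_visible": False, "features": [1, 2, 3]}

print(identify(clear, toy_detect, toy_extract, toy_match))      # enrolled-person
print(identify(disrupted, toy_detect, toy_extract, toy_match))  # None
```

The second call never reaches extraction or matching, even though the underlying features are unchanged.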

Computer vision detection methods employ various techniques including Haar cascades, histogram of oriented gradients, and deep learning convolutional neural networks. These approaches analyze image data at multiple scales and orientations to identify facial patterns regardless of pose variation or environmental conditions. The robustness of these detection systems has improved dramatically over the past decade, achieving near-perfect accuracy under optimal conditions.
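
To make the Haar-style approach more concrete, here is a minimal sketch of one two-rectangle feature computed with an integral image (summed-area table). The function names and the 4x4 toy images are assumptions for illustration; a real cascade combines thousands of such features with learned thresholds.

```python
def integral_image(image):
    """Summed-area table with an extra leading row and column of zeros."""
    height, width = len(image), len(image[0])
    table = [[0] * (width + 1) for _ in range(height + 1)]
    for y in range(height):
        row_sum = 0
        for x in range(width):
            row_sum += image[y][x]
            table[y + 1][x + 1] = table[y][x + 1] + row_sum
    return table


def rect_sum(table, top, left, height, width):
    """Sum of the pixels inside a rectangle, using four table lookups."""
    bottom, right = top + height, left + width
    return (table[bottom][right] - table[top][right]
            - table[bottom][left] + table[top][left])


def eye_band_feature(image, top, left, height, width):
    """Two-rectangle feature: lower band sum minus upper band sum.

    On an unmodified face the eye band (upper) tends to be darker than
    the cheek band (lower), so the value is positive.
    """
    table = integral_image(image)
    upper = rect_sum(table, top, left, height, width)
    lower = rect_sum(table, top + height, left, height, width)
    return lower - upper


# Toy patch: dark eye band (50) above light cheeks (200).
natural = [[50] * 4, [50] * 4, [200] * 4, [200] * 4]
# Light pigment over the eyes, dark pigment on the cheeks.
inverted = [[220] * 4, [220] * 4, [60] * 4, [60] * 4]

print(eye_band_feature(natural, 0, 0, 2, 4))   # 1200
print(eye_band_feature(inverted, 0, 0, 2, 4))  # -1280
```

Flipping the sign of a feature like this is one way high-contrast makeup can push a patch below a detector's acceptance threshold.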

However, pattern recognition in facial detection systems remains vulnerable to adversarial manipulation. These systems depend on consistent visual patterns that correspond to natural facial features. When those patterns are intentionally disrupted through strategic makeup application, the detection algorithms experience significant performance degradation. The system may either fail to detect a face entirely or produce unreliable feature measurements that prevent accurate matching.

Detection accuracy and vulnerabilities vary considerably across different implementation environments. Well-lit indoor spaces with high-resolution cameras present more challenging conditions for anti-detection techniques compared to outdoor surveillance with lower-quality equipment. Understanding the specific detection technology deployed in a given environment allows for more targeted countermeasures that exploit known vulnerabilities in that particular system architecture.

Privacy Concerns and Anti Facial Recognition Makeup

The rapid expansion of surveillance infrastructure has created unprecedented challenges for individual privacy rights. Facial recognition systems now monitor public spaces, retail environments, transportation hubs, and even residential neighborhoods without explicit consent or notification. This pervasive surveillance culture has prompted growing concern among civil liberties advocates, legal scholars, and ordinary citizens who value their right to anonymity in public spaces and privacy protection.

Many people seek facial recognition protection not because they have anything to hide, but because they object to constant monitoring and the creation of detailed databases tracking their movements and activities. The normalization of mass surveillance represents a fundamental shift in the relationship between individuals and both government and corporate entities. Anti facial recognition makeup provides a tangible method for asserting control over personal biometric data and maintaining privacy.

Privacy advocacy and anti-surveillance movements have emerged globally to challenge the unchecked deployment of facial recognition systems. These groups argue that pervasive biometric surveillance creates chilling effects on freedom of assembly, freedom of expression, and other fundamental rights. The use of anti facial recognition makeup has become both a practical privacy tool and a form of political expression, symbolizing resistance to the surveillance state.

Algorithm Disruption: How Anti Facial Recognition Makeup Works

The science of algorithm disruption through cosmetic application involves understanding how facial recognition algorithms process visual information and identifying vulnerabilities in that processing. Modern algorithms rely on identifying consistent patterns across multiple facial regions, calculating distances between key landmarks, and comparing these measurements against stored templates. Disrupting any stage of this process can significantly impair recognition accuracy.
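
The landmark-distance idea can be sketched in a few lines. This is a simplified geometric model: the landmark names, coordinates, and the 0.1 threshold are assumptions for the example, and many modern systems compare learned embeddings rather than hand-measured distances.

```python
import math


def signature(landmarks):
    """Pairwise landmark distances divided by the inter-eye distance.

    Dividing by the eye spacing makes the result independent of image scale.
    """
    names = sorted(landmarks)
    scale = math.dist(landmarks["left_eye"], landmarks["right_eye"])
    return [
        math.dist(landmarks[a], landmarks[b]) / scale
        for i, a in enumerate(names)
        for b in names[i + 1:]
    ]


def matches(probe, template, threshold=0.1):
    """True when the two signatures are close in Euclidean distance."""
    return math.dist(signature(probe), signature(template)) < threshold


enrolled = {"left_eye": (30, 40), "right_eye": (70, 40),
            "nose": (50, 60), "mouth": (50, 80)}

# Same face photographed at twice the size.
closer = {name: (2 * x, 2 * y) for name, (x, y) in enrolled.items()}

# Same face, but the landmark locator was misled about the nose and mouth.
mislocated = dict(enrolled, nose=(62, 52), mouth=(42, 88))

print(matches(closer, enrolled))      # True
print(matches(mislocated, enrolled))  # False
```

The face itself has not changed in the third case; only the measured landmark positions have, which is enough to break the comparison.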

Pattern-breaking makeup techniques employ several core strategies to confuse facial recognition systems. High-contrast elements create visual boundaries that the algorithm may incorrectly interpret as facial features or edges. Asymmetric designs violate the bilateral symmetry that detection algorithms expect to find in human faces. Geometric patterns placed strategically over key facial landmarks prevent accurate feature localization, making it impossible for the system to calculate reliable biometric measurements.

Confusing facial recognition algorithms requires more than random makeup application. Effective anti-recognition techniques must be informed by knowledge of specific algorithm architectures and their vulnerabilities. For instance, older algorithms that rely heavily on edge detection can be disrupted with bold contrasting lines that create false edges and obscure real facial contours. Newer deep learning algorithms may require more sophisticated adversarial patterns designed to trigger misclassification in neural network layers.
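
A toy edge detector shows how a bold stripe adds false edges. The scanline values and the threshold of 60 are made up for illustration, and real edge detectors work on two-dimensional gradients rather than a single row.

```python
def edge_positions(row, threshold=60):
    """Indices where neighbouring pixel intensities differ by more than `threshold`."""
    return [
        i for i in range(len(row) - 1)
        if abs(row[i + 1] - row[i]) > threshold
    ]


# One scanline across a cheek: smooth skin, then the jawline against a dark background.
cheek = [180, 178, 176, 174, 172, 170, 168, 40, 40]

# The same scanline with a bold dark stripe painted across the cheek.
striped = [180, 178, 20, 20, 172, 170, 168, 40, 40]

print(edge_positions(cheek))    # [6]
print(edge_positions(striped))  # [1, 3, 6]
```

The real contour at index 6 is still present, but it now competes with two painted edges that an edge-based method has no simple way to tell apart from it.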

Scientific principles behind algorithm disruption draw from adversarial machine learning research. Studies have shown that adding carefully calculated perturbations to input images can cause machine learning models to misclassify objects with high confidence. While these perturbations are often imperceptible to human observers in digital contexts, makeup provides a physical medium for implementing similar adversarial strategies in the real world.
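
One well-known recipe for such perturbations is the fast gradient sign method, sketched here on a tiny linear classifier. The weights, bias, and pixel values are invented for the example, and a linear model stands in for the deep networks that real attacks target.

```python
def score(weights, bias, pixels):
    """Linear classifier score: positive means 'face', negative means 'not a face'."""
    return sum(w * p for w, p in zip(weights, pixels)) + bias


def sign(value):
    """Return -1, 0, or 1 according to the sign of `value`."""
    return (value > 0) - (value < 0)


def perturb(weights, pixels, epsilon):
    """Shift each pixel by `epsilon` against the sign of its weight.

    For a linear model the gradient of the score with respect to the input
    is the weight vector, so this step lowers the score as much as possible
    while changing no pixel by more than `epsilon`.
    """
    return [p - epsilon * sign(w) for w, p in zip(weights, pixels)]


weights = [0.5, -0.25, 0.75, -0.5]
bias = -0.25
pixels = [0.5, 0.25, 0.5, 0.25]

adversarial = perturb(weights, pixels, epsilon=0.125)

print(score(weights, bias, pixels))       # 0.1875
print(score(weights, bias, adversarial))  # -0.0625
```

A change of at most 0.125 per pixel flips the decision. Makeup cannot be tuned pixel by pixel, so physical versions of this idea use larger, coarser patterns.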

Computer Vision Systems and Their Weaknesses

Computer vision systems process facial data through complex pipelines that convert raw image pixels into structured biometric representations. This processing involves multiple stages including image preprocessing, feature detection, feature extraction, and comparison operations. Each stage introduces potential points of failure that can be exploited by anti-recognition techniques.

Vision systems begin face analysis with image normalization, which accounts for variations in lighting, contrast, and color balance. The system then applies filters and transformations to enhance features relevant to face detection while suppressing irrelevant background information. Edge detection algorithms identify boundaries between different facial regions, while texture analysis captures fine-grained skin patterns and other distinguishing characteristics.
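
A minimal sketch of the normalization step, assuming simple min-max contrast stretching (real systems may use histogram equalization or learned preprocessing instead). The function name and sample values are illustrative.

```python
def stretch_contrast(pixels):
    """Rescale intensities linearly so the darkest maps to 0.0 and the brightest to 1.0."""
    lo, hi = min(pixels), max(pixels)
    if hi == lo:
        return [0.0] * len(pixels)
    return [(p - lo) / (hi - lo) for p in pixels]


# The same scanline captured in dim light and in bright light.
dim = [20, 30, 40, 60]
bright = [120, 140, 160, 200]

print(stretch_contrast(dim))     # [0.0, 0.25, 0.5, 1.0]
print(stretch_contrast(bright))  # [0.0, 0.25, 0.5, 1.0]
```

Normalization removes a uniform lighting change like this one, but it does nothing about pigment that changes the relative brightness of one region against another.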

Exploiting computer vision vulnerabilities requires understanding the specific assumptions and limitations built into these systems. Most facial recognition implementations assume faces will exhibit natural coloring, symmetric structure, and smooth continuous surfaces. By violating these assumptions through dramatic makeup applications, individuals can force the vision system outside its operational parameters where accuracy degrades substantially.

Automated facial recognition has limitations that go beyond its susceptibility to makeup countermeasures. These systems struggle with extreme lighting conditions, significant pose variations, partial occlusions, and low-resolution imagery. Anti facial recognition makeup amplifies these existing limitations, pushing the system further into performance regimes where reliable identification becomes impossible. The combination of adverse conditions and intentional disruption creates compounding effects that overwhelm even sophisticated recognition algorithms.

Facial Recognition Systems and Their Applications

Facial recognition systems are deployed across an expanding range of environments and applications. Law enforcement agencies use these systems to identify suspects, locate missing persons, and monitor crowds at public events. Retail stores implement facial recognition for customer analytics, loss prevention, and personalized marketing. Transportation facilities employ the technology for security screening and passenger processing. Smart cities integrate facial recognition into broader surveillance networks that track individuals across multiple locations.

Surveillance applications extend beyond public safety into commercial and social contexts. Social media platforms use facial recognition to automatically tag individuals in photos and videos. Employers deploy the technology for attendance tracking and access control. Event venues utilize facial recognition for ticket verification and VIP identification. The ubiquity of these systems means individuals encounter facial recognition multiple times daily, often without awareness or consent.

The security versus privacy debate surrounding facial recognition has intensified as deployment accelerates. Proponents argue the technology enhances public safety, prevents crime, and streamlines identity verification processes. Critics counter that pervasive facial recognition creates opportunities for abuse, enables discriminatory practices, and fundamentally alters the nature of public space by eliminating practical anonymity. This tension between security benefits and privacy costs remains unresolved in most jurisdictions.

How People Use Anti Facial Recognition Makeup

Real-world applications of anti facial recognition makeup span diverse contexts and motivations. Privacy-conscious individuals apply these techniques when attending protests or public demonstrations where surveillance is known to be extensive. Artists and activists use dramatic anti-recognition makeup as performance art that challenges surveillance culture while providing functional privacy protection. Security researchers employ these methods to test and evaluate facial recognition systems, identifying weaknesses that need to be addressed.

Activist movements and protests have increasingly explored anti-surveillance techniques to protect privacy during demonstrations. While CV Dazzle makeup techniques have been demonstrated in artistic and research contexts, protesters in locations like Hong Kong have primarily relied on masks, goggles, and laser pointers aimed at cameras to disrupt facial recognition surveillance. The exploration of makeup-based anti-recognition methods highlights growing awareness of surveillance threats and the search for practical privacy protection tools.

Everyday privacy protection strategies increasingly incorporate anti-recognition techniques as awareness of surveillance expands. Some people apply subtle geometric patterns or reflective elements to their regular makeup routine, creating low-profile disruption that doesn't attract attention but reduces recognition accuracy. Others reserve more dramatic applications for specific high-surveillance environments where privacy concerns are elevated. The flexibility of makeup as a medium allows people to calibrate their anti-recognition efforts to match perceived threats.

Anti-Surveillance Technology and Makeup Innovation

CV Dazzle pioneered the field of anti facial recognition makeup through systematic research into how makeup can disrupt computer vision algorithms. Developed by artist and researcher Adam Harvey, CV Dazzle applies principles from military camouflage and adversarial pattern design to create makeup looks that confound facial detection systems. The technique emphasizes asymmetry, contrasting tones, and strategic placement of visual elements to break the recognizable geometry of the face.

Emerging anti-surveillance technologies continue to expand beyond traditional makeup applications. Researchers have developed patterned glasses and accessories that cause facial recognition systems to misidentify wearers or fail to detect faces entirely. Israeli researchers have demonstrated printed adversarial patterns that can be applied as temporary patches or incorporated into clothing and accessories. These advances indicate that anti-recognition methods will become increasingly sophisticated and accessible.

The future of privacy-protecting makeup likely involves integration with other technologies and materials. Researchers are exploring infrared-reflective cosmetics that disrupt facial recognition operating in non-visible light spectrums. Photochromic materials that change appearance under different lighting conditions could provide adaptive anti-recognition capabilities. Augmented reality systems might eventually project anti-recognition patterns onto faces digitally, eliminating the need for physical makeup application entirely.

Comparison of Anti Facial Recognition Methods

| Feature | CV Dazzle | Reflective Makeup | Geometric Patterns | Prosthetics | Digital Jamming |
|---|---|---|---|---|---|
| Effectiveness | 70-90% | 60-80% | 65-85% | 80-95% | 90-99% |
| Ease of Application | Moderate | Easy | Moderate | Difficult | N/A (Device) |
| Cost | $20-50 | $15-30 | $10-25 | $50-200 | $100-500 |
| Duration | 6-8 hours | 4-6 hours | 6-8 hours | Single use | Continuous |
| Social Acceptance | Low | Medium | Low | Very Low | Medium |

Frequently Asked Questions

How does CV Dazzle makeup block facial recognition?

CV Dazzle disrupts face detection by using asymmetric patterns and contrasting colors that break up the face's recognizable geometry. The technique prevents detection algorithms from identifying key facial landmarks and calculating the distances between features that form the basis of biometric identification. By creating false edges and obscuring natural facial contours, CV Dazzle forces the recognition system outside its operational parameters where accuracy degrades significantly.

Can anti facial recognition makeup actually block facial recognition systems?

Yes, properly applied anti facial recognition makeup can reduce recognition accuracy by 70-90% depending on the system architecture and environmental conditions. Research has demonstrated that strategic makeup application exploits fundamental vulnerabilities in how computer vision algorithms process facial images. While no method provides absolute protection against all facial recognition systems, well-designed makeup techniques significantly impair detection and matching performance across most common implementations.

What type of makeup is most effective at hampering facial recognition software?

High-contrast geometric patterns, reflective materials, and asymmetric designs that break facial symmetry represent the most effective approaches to hampering facial recognition software. These techniques work by disrupting the pattern recognition processes that algorithms rely on to identify faces. The most straightforward method involves applying bold lines and shapes that create false facial boundaries while obscuring real landmarks. Effectiveness increases when applications target known vulnerabilities in specific algorithm architectures.

Is anti facial recognition makeup used for camouflage purposes?

While anti facial recognition makeup shares conceptual similarities with military camouflage, its primary purpose involves disrupting algorithmic detection rather than hiding from human observers. The camouflage used in anti-recognition contexts operates on the principle of adversarial pattern generation, designed specifically to confuse computer vision systems rather than blend into environmental backgrounds. Many anti-recognition makeup designs are deliberately conspicuous to human eyes while being algorithmically invisible to facial recognition systems.

What have Israeli researchers found about facial recognition makeup?

Israeli researchers at Ben-Gurion University found that AI-generated adversarial makeup patterns can bypass facial recognition systems with success rates up to 98%, reducing the rate at which wearers were correctly identified to just 1.22%. Their research demonstrated that carefully designed makeup patterns based on adversarial machine learning principles cause recognition algorithms to misclassify individuals or fail to detect faces entirely. These findings validate the effectiveness of strategically designed visual interventions in defeating state-of-the-art facial recognition technology.

Does spectra reflective eye makeup work against facial recognition?

Spectra reflective eye colour makeup can interfere with certain camera-based recognition systems by creating glare and obscuring key facial features. The reflective properties interact with camera flash and infrared illumination commonly used in surveillance systems, causing overexposure in critical facial regions. While effectiveness varies based on lighting conditions and camera specifications, reflective makeup provides a relatively accessible method for reducing recognition accuracy without requiring complex application techniques.

What is the most straightforward method to apply anti facial recognition makeup?

The most straightforward method for applying anti facial recognition makeup involves using high-contrast cosmetics to create bold geometric patterns that break facial symmetry across key detection points. Begin by identifying the primary facial landmarks (eyes, nose, mouth, and facial contours) that recognition algorithms target. Apply contrasting colors in asymmetric patterns that cross these landmarks and create false edges that confuse the detection algorithm. Focus on disrupting the bilateral symmetry that systems expect to find in human faces, as this represents one of the most reliable indicators algorithms use to identify faces.

Protecting Privacy in a Surveilled World

Anti facial recognition makeup represents more than a technical countermeasure—it embodies a fundamental assertion of privacy rights in an increasingly monitored society. As surveillance systems become more pervasive and sophisticated, individuals need practical tools to maintain control over their biometric data and personal anonymity.

While anti-recognition techniques continue to evolve, their effectiveness depends on understanding the specific vulnerabilities of detection systems and applying countermeasures strategically. The ongoing tension between surveillance technology and privacy protection will likely drive continued innovation in both domains, creating an arms race between recognition capabilities and anti-recognition methods.

Ultimately, the question extends beyond technical effectiveness to broader societal values: what kind of surveillance society do we want to create, and what rights should individuals retain in public spaces? Anti facial recognition makeup provides not just a practical tool, but a visible statement that privacy matters and deserves protection.